Pope Guilty posted:The alt-right likes to claim that they're better for LGBT people than the left because all Muslims want nothing but to murder LGBT people, and therefore since the alt-right wants to murder all Muslims, they are better than the left on LGBT issues. That's all there is to it. Yeah, it's this. It extends to some other right wing groups too.

| # ? May 9, 2024 02:24 |

Space Poodle posted:Can confirm.

mister-mean-spirited.blogspot.com/2017/03/sabotaging-future.html In which MMS hopes the world is worse for future generations. In about two weeks, he will resume moaning about how the SJeW liberal illuminati are making things worse and this is bad, and none of his fans will realize the contradiction.

The Vosgian Beast posted:and none of his five fans will realize the contradiction. ftfy

divabot posted:ftfy It used to be six, but one left to become a youtube MGTOW guru

The Vosgian Beast posted:It used to be six, but one left to become a youtube MGTOW guru  yeah, probably a fuzzy circle

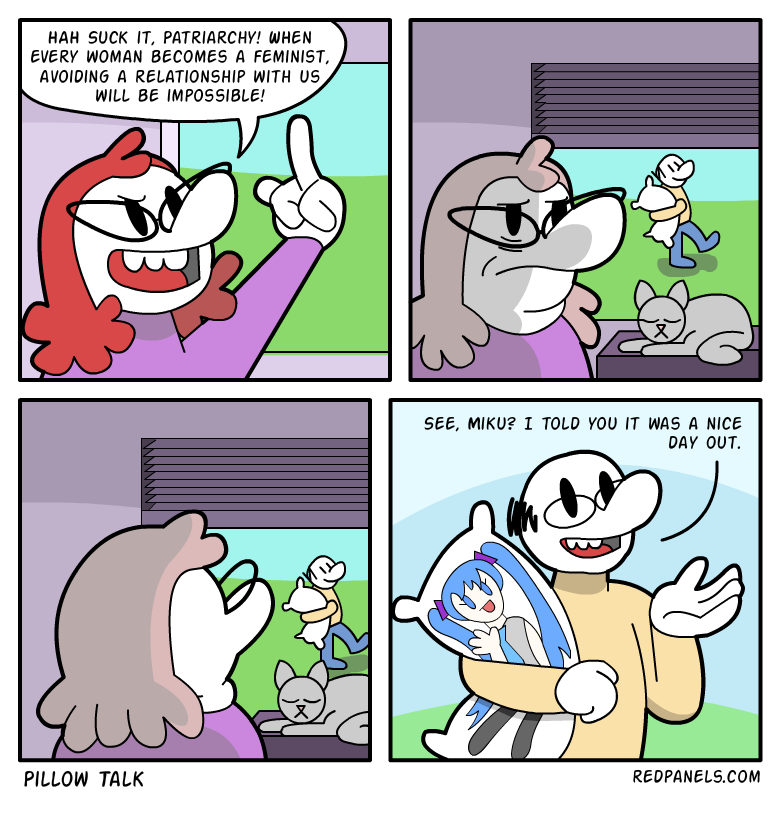

Nice self own, Red Panels.

Further on the alt-right/MRA fuzzy circle, from David Futrelle (We Hunted The Mammoth) in New York Magazine: Inside the Dangerous Convergence of Men's-Rights Activists and the Extreme Alt-Right - specifically about James Jackson, the dapper young neonazi who went to New York to stab a black guy.

The Vosgian Beast posted:mister-mean-spirited.blogspot.com/2017/03/sabotaging-future.html You drat kids get off my lawn posted:Young people, loud, obnoxious, joyful, optimistic, hopeful, and these days most likely "liberal", "tolerant" and in favour of "diversity" are the most despicable people on the planet.

chitoryu12 posted:Nice self own, Red Panels. why can't bigots draw for poo poo?...

chitoryu12 posted:Nice self own, Red Panels. Yeah, "The KKKlurf" was pretty good at those.

Ezra Klein's podcast posted:Ezra Klein: The rationality community. From Ezra Klein's latest podcast, 1:31:03 on. (Klein's transcript does not quite match the audio.) Tyler Cowen chose this particular segment to quote on his own blog; wonder if he will be cast out into the rationalist never-again-quoted outer darkness with Greg Egan. (good lord, the comments on Cowen's blog are a toilet to rival those on SSC.) Scott Alexander contributes this gem (archive): Scott posted:One day I'm going to break past Senate security, run up to Bernie Sanders, and start yelling "You call yourself a socialist. But do you think you and your friends are the only people who are social? Do you think literally everyone else is a total 100% introvert? Huh? HUH?!" yeah that's great Scott and totally not inane flailing at the hated outgroup Oh, Yudkowsky's Arbital team has finally given up on it, except Yudkowsky's still using it as his serious writing platform. So the front page is now a "coming soon" for the planned microblogging platform.

btw, the quality MIRI sneer culture fodder is now at /r/ControlProblem. in which we see rationalists(tm) expound upon the AI safety implications of how those vile transgenders will PAPERCLIP US ALL!!!! and the rationalists were doing so well with transgender issues up to now (or at least the tumblr ones)

You know, calling themselves rationalists would be a lot less irritating if they just switched it to "Bayesian Rationalist" or something. It'd be like if people tried to take a term that meant a particular kind of socialism and turned it into a term that meant an-cap oh wait that happened with "Libertarian" nm

divabot posted:Oh, Yudkowsky's Arbital team has finally given up on it, except Yudkowsky's still using it as his serious writing platform. So the front page is now a "coming soon" for the planned microblogging platform. From the comments: quote:It seems that while you haven't achieved your full goals, you have created a system that Eliezer is happy with, which is of non-zero value in itself (or, depending on what you think of MIRI, the AI alignment problem etc., of very large value). It was a failure, but it was fine because it made Leader happy. (Cultishness? In my Less Wrong?) Elsewhere, it looks like the Thiel Foundation stopped giving to MIRI a few years back. Guess you don't need to bother buying the favor of weird cranks when instead you can buy the favor of a weird crank that's also the president.

Big Yud defending Thiel's honor on the Trump thing honestly makes more sense if you assume he doesn't want to prevent Thiel from donating to him again. That said I'm not sure he's even capable of that level of strategizing and diplomacy, so

The Vosgian Beast posted:You know, calling themselves rationalists would be a lot less irritating if they just switched it to "Bayesian Rationalist" or something. Eh, it feels more like those twits who tried to get the term 'Brights' to be a thing.

divabot posted:in which we see rationalists(tm) expound upon the AI safety implications of how those vile transgenders will PAPERCLIP US ALL!!!! Oh cool, I haven't read something that loving hateful in a while. (The entire argument is that only MTF trans people exist and also that they're all secret perverts that get off on dressing like women and that somehow that's a massive danger if they're involved in AI because all trans people are wealthy Silicon Valley programmers)

There's a novella by Michael Blackbourn called Roko's Basilisk on Kindle. Its themes are what you'd expect from that title - strong AI, the end of civilisation as we know it. It's up for free on Kindle (UK link, US link) today (Sunday 2 April 2017) only. Just finished it (it's very short and a quick and easy read). It's a quick psychological horror short. Basically it takes the concepts behind roko's basilisk and puts them into story form. The character "Roko" plays both Yudkowsky and real-life Roko and explains the killing meme to his not-as-brilliant friend. In this world "friendly AI" is a term used in real AI research (rather than something that gets real AI researchers punching walls harder than chemists do at "nanobots"). "Roko" has solved Coherent Extrapolated Volition or something close enough for a scifi handwave and worked out the basilisk, and frightened the poo poo out of himself. I ... literally can't judge how effectively it does this, because having written and exhaustively footnoted most of the RW article on the subject it is impossible for me to judge how well it gets it all across to the previously-unaware reader. It seems to end abruptly, but the next episode is available (though not for free). It's not terrible even if the editing is sloppy and it's well worth $0.00 for a quick read and might even be worth its usual 99p/$1.23. I would be interested to hear what others made of it. edit: he also has AI Box Experiment the Short Story. I'd love to see fans of rationalfic reacting to this stuff. divabot has a new favorite as of 20:38 on Apr 2, 2017

I've kinda wanted to write a short story where Yud slowly realizes that his only accomplishment in life is writing Harry Potter and the Smugness of Atheists. And maybe, just maybe, the reason he can't use his overwhelming genius to actually help society is because he's in Robot Hell, being tortured for all eternity. Oh well.

quote:I am a doctoral student in population genetics at an R1 institution, and most of my friends in my cohort are also in population genetics. Every single one of them was horrified by the comments full of what they considered to be racist crackpots in a place that is supposed to be a bastion of rationality. HBD (and associated race/IQ stuff) is seen as a perversion of our field for the most part, and we are overall deeply suspicious of people who fixate on the heritability of IQ, for obvious reasons. I now feel like I cannot introduce anyone in my social circles to this community, who are exactly the kind of people who would get the best use out of it and would be great contributors here. The association of this community with the HBD community needs to be discussed, because it reveals a blindspot for rationality in a community devoted to it. This could also have devastating effects, because ideas introduced on the blog that could be useful to most people could never be picked up in the larger population in the future, through being stained by association with the HBD community, which is largely discredited among the entirely of academia. This warrants discussion, because I believe the community is kind of at a crossroads, and the current set-up is largely unsustainable if these ideas are to reach the wider population. Outside view from population geneticist: Slate Star Codex and its subreddit are mostly about being huge loving racists. Gosh, etc. quote:TL;DR: Love the blog, far-right commentators here are starting to ruin my experience, cannot show this to other people I know now, and the association with HBD could severely circumscribe the influence of Scott's ideas in the future. Discuss. Mod asks posters to be civil. Immediate response from one commenter: "It seems ill advised to let an OP attack the community and muzzle the incredibly predictable response." BECAUSE WE KNOW WHAT TO DO ABOUT THE HATED OUTGROUP DON'T WE.
(Credit to the mod in question for proceeding to hand out bans like much-in-demand candy.) Most of the comments follow in similar tone. Hits a remarkable selection of notes on the kazoo of Dark Enlightenment autodidacticism. Particular credit for filibustering to user alpsgolden, whose Reddit comment history seems to substantially consist of spreading the Good Word of The Bell Curve to unrelated subs.

Improbable Lobster posted:The entire argument is that only MTF trans people exist and also that they're all secret perverts that get off on dressing like women and that somehow that's a massive danger if they're involved in AI because all trans people are wealthy Silicon Valley programmers I mean if the only transwoman who will talk to you is Justine Tunney I can sort of understand how you could get such, uh, skewed ideas.

divabot posted:btw, the quality MIRI sneer culture fodder is now at /r/ControlProblem. Mind you, the autogynephilia theory was one of the things the proto-alt-right were pushing for back in the days of VDARE and HBI being their thought-leaders, so there's form there.

TinTower posted:Mind you, the autogynephilia theory was one of the things the proto-alt-right were pushing for back in the days of VDARE and HBI being their thought-leaders, so there's form there. It's been coming back in a big way, the trans /pol/-dwelling person I know was very worried because people told her she just had AGP and wasn't "really" trans, whatever the hell that means, and I'm like "uhh maybe you should hang out with some less garbage people" "well I'm going to a trans meetup but they're all SJW's so I'll have to filter myself for those cucks" She has, uh, issues.

ate all the Oreos posted:It's been coming back in a big way, the trans /pol/-dwelling person I know was very worried because people told her she just had AGP and wasn't "really" trans, whatever the hell that means, and I'm like "uhh maybe you should hang out with some less garbage people" "well I'm going to a trans meetup but they're all SJW's so I'll have to filter myself for those cucks" Based on other posts on this person's blog, they might be in the same boat. This sort of person is becoming distressingly common.

divabot posted:Outside view from population geneticist: Slate Star Codex and its subreddit are mostly about being huge loving racists. Gosh, etc. Gotta love that their concern isn't that the racism is bad, but that it makes evangelizing the other stuff harder.

divabot posted:Outside view from population geneticist: Slate Star Codex and its subreddit are mostly about being huge loving racists. Gosh, etc. Speaking of HBD bullshit, do we have a good source for debunking it? The third result I got while searching for debunks had this to say: Robert Lindsay posted:Most HBD is directed at Blacks, and the whole movement bristles with contempt for them.

divabot posted:There's a novella by Michael Blackbourn called Roko's Basilisk on Kindle. Its themes are what you'd expect from that title - strong AI, the end of civilisation as we know it. It was the worst loving plot twist.

Pitch posted:It was the worst loving plot twist. Wow, I almost read that book. I feel spared.

McGlockenshire posted:Speaking of HBD bullshit, do we have a good source for debunking it? I'll again namedrop the RW article on racialism, which is literally for this intended purpose. It's a Cover Article so should be any good for this sort of thing. If there are any particular tropes it doesn't seem to address, please bring them up on the talk page! (The talk page is less of a garbage fire than it looks - pretty much 100% of the HBD advocacy is sockpuppets of incredibly banned user Mikemikev, who is quietly famous in the world of single-issue shitheads banned from every forum.)

That racialism page is going to be getting a good workout over the next several years I think. Scientistic racism is dangerous because it's not inherently implausible (compare lizard people), it just happens to be empirically false. I'm expecting it to make some quiet headway among geek circles that enjoy learning little bits of 'science' and might even consider themselves well-meaning. But spreading an empirical inoculation is a countermeasure to that.

Racism is like the worst mind-virus ever. It's so hard to eradicate. All of our human vulnerabilities play into it -- people look different, so that means there are categories of people. Plus in the U.S. we all grow up with a level of historical background radiation that makes racism seem like the reasonable default. It takes SO MUCH EDUCATION to counteract. It's just the loving common cold of memes.

Peel posted:That racialism page is going to be getting a good workout over the next several years I think. and is thus a hazard for autodidacts. alpsgolden brags that he knows HBD is true because he did his research, someone answers reasonably: quote:I'm writing an essay to post to this sub right now about this, but basically, if you're not an expert or guided by one, then it's very easy to read extensively with curiosity and open-mindedness and end up more wrong than when you started. I can very easily understand, for example, how a nonexpert could find Emil Kirkegaard's research worth discussing and considering, but someone who knows can tell at a glance. and alpsgolden responds with a "well what is knowledge anyway" like his original text isn't right above. And further down that it's actually the left's fault, because edit: AHAHAAHA kirkegaard shows up personally and says IT'S ALL RATIONALWIKI'S FAULT

Neon Noodle posted:Racism is like the worst mind-virus ever. It's so hard to eradicate. All of our human vulnerabilities play into it -- people look different, so that means there are categories of people. Plus in the U.S. we all grow up with a level of historical background radiation that makes racism seem like the reasonable default. It takes SO MUCH EDUCATION to counteract. It's just the loving common cold of memes. It's almost as durable a pseudo-science as memetics! Seriously in Selfish Gene, Dawkins mostly does the responsible "i mean, this is just an idea, it needs evidence" kind of thing. And then there was no evidence and existing sociologists ignored it and people, including him, barreled ahead with the garbage anyways

Oh I know, I don't think of "meme" as anything other than a good word for a persistent, hard to eradicate idea. I think the concept has value as a metaphor, but I don't think of memes in the way Dawkins does. There are few people I loathe more than Dick Dorkins.

It's almost like dick dorkins is a bad scientist who's only respected by nerds because he panders to them and has an authoritative English accent that makes him sound smart to dumb Americans who have been culturally trained that English accents mean sophistication. (Completely tangential: My favorite thing I learned about the whole subconscious accent thing is when I was watching a British gameshow and they used an American accent narrator to emphasize how HARDCORE EXTREME SERIOUS something was, like the exact opposite of what we do)

I like the thing where they swap Dawkins and dril tweets

divabot posted:I'll again namedrop the RW article on racialism, which is literally for this intended purpose. It's a Cover Article so should be any good for this sort of thing. If there are any particular tropes it doesn't seem to address, please bring them up on the talk page! Thank you, that's a great resource. I hadn't thought to look at RW, as it's still classified in my brain as a resource in fighting fundies only. How times have changed...

I have my issues with some of it, but it's like one of the last few holdouts of old internet atheism that haven't gone super racist or intolerant, so it's nice to have that. I mean for some of those guys, the bigotry was always there, but it was less noticeable back when everyone had Bush to rally around hating.

Since we've got Big Yud on the first page we should have the song about him on there as well. https://www.youtube.com/watch?v=nXARrMadTKk
