|

VelociBacon posted:Anyone else having issues with Precision X1 not applying overclocks on startup? I have to manually apply them every startup (with an EVGA 2080ti XC Ultra). Kind of a pain in the rear end, is this fixed with the new version or has anyone heard anything?

most OC utilities default to making you apply it manually after startup so that if it's unstable and causes issues it doesn't gently caress you when windows boots. you can set presets and save them like you would a radio station on a car radio and then press them. i think MSI's tool allows you to override the default setting and load up with whatever you want, too. as for voltage, you checked off the voltage box on this screen?

|

|

|

|

|

| # ? Jun 3, 2024 21:31 |

|

Statutory Ape posted:most OC utilities default to manually doing it on startup so that if it's unstable and causes issues it doesn't gently caress you when windows boots. you can set presets and save them like you would a radio station on a car radio and then press them. i think MSI's tool allows you to override the default setting and load up with whatever you want, too

Yeah, I did check that and swapped around between the different options. Reading online, lots of people are having the same problem with 20xx EVGA cards on Afterburner. The thing is, with Precision there's this box: That is checked off but doesn't do anything, which is super frustrating.

|

|

|

|

I use Afterburner over Precision, but they're similar enough that I wonder if this is the issue: in Afterburner I'm pretty sure clocks won't stick after a restart unless they're saved to a profile slot.

|

|

|

|

K8.0 posted:I use Afterburner over Precision, but they're similar enough I wonder if this is the issue : in Afterburner I'm pretty sure clocks won't stick after a restart unless they're saved to a profile slot.

I saved my profile to all the slots just to see if maybe it was trying to load from a different one than the one I most recently used - no luck! I would really prefer Afterburner as well if voltage control was unlocked for me.

|

|

|

|

My toddler poured a beer into the top of my PC and it killed my GeForce GTX 1060 3GB. Anyone got a good lead on a deal that will be an equivalent replacement?

|

|

|

|

tactlessbastard posted:My toddler poured a beer into the top of my PC and it killed my GeForce GTX 1060 3GB. Anyone got a good lead on a deal that will be an equivalent replacement?

Replacing the toddler might be a little harsh, no?

|

|

|

|

tactlessbastard posted:My toddler poured a beer into the top of my PC and it killed my GeForce GTX 1060 3GB. Anyone got a good lead on a deal that will be an equivalent replacement?

To answer seriously, I got some questions: do you play on full hd still? 1440p transition in plan? What's your budget? Do you use a Gsync Monitor? What games are you playing? Could you imagine loving Raytracing? Won't you bother at all with that RTX stuff?

|

|

|

|

Mr.PayDay posted:Replacing the toddler might be a little harsh, no?

Lol

Mr.PayDay posted:To answer seriously, do you play on full hd still? 1440p transition in plan?

Yeah, I'd like to get about the same performance. No upgrade in sight, just want to keep on where I'm at for now. My monitor is a plain Jane Acer, somewhere around 20". I paid $200 for that card two years ago and just looked at the same one again and it's....$200. After two years!

|

|

|

|

tactlessbastard posted:Lol 570/580.

|

|

|

|

tactlessbastard posted:My toddler poured a beer into the top of my PC and it killed my GeForce GTX 1060 3GB. Anyone got a good lead on a deal that will be an equivalent replacement?

It might be a blower, but it's a trade-up and comes with free games. Just be sure to use Display Driver Uninstaller to clear out all traces of nVidia drivers first: https://www.newegg.com/Product/Product.aspx?Item=14-137-244&Ignorebbr=true

|

|

|

|

Thanks dudes!

|

|

|

|

Truga posted:knowing amd, they'll make a weird trick nvidia hates that makes their gpus raytrace like hell at almost no performance penalty. hopefully they also make a weird trick that makes antialias perform real well so DLSS gets thrown out

You might be interested to know that Logitech is re-issuing the MX518 with the guts updated to their HERO sensor. I've got one coming in on Tuesday to see if it holds up against my OG MX518 and the G502 I eventually settled on to replace it after going through five G400/G400s.

|

|

|

|

SwissArmyDruid posted:You might be interested to know that Logitech is re-issuing the MX518 with the guts updated to their HERO sensor. I've got one coming in on Tuesday to see if it holds up against my OG MX518 and the G502 I eventually settled on to replace it after going through five G400/G400s.

Oh poo poo I didn't know that. The Razer software is super irritating, but I think it's the only way to rebind the DeathAdder buttons, so I'm super tempted to go back to the MX518.

|

|

|

|

SwissArmyDruid posted:You might be interested to know that Logitech is re-issuing the MX518 with the guts updated to their HERO sensor. I've got one coming in on Tuesday to see if it holds up against my OG MX518 and the G502 I eventually settled on to replace it after going through five G400/G400s.

That's awesome! My G500 is still going pretty strong, but I've worn through most of the non-slip grip and have to clean the contacts every year or so. I kept considering the G502, but it's really nice to have a decently professional-looking gaming mouse instead of a pointy leet monstrosity, no matter how comfortable.

|

|

|

|

https://www.logitechg.com/en-us/products/gaming-mice/mx518-gaming-mouse.html

Yes, it says "preorders" on that page, but AFAICT, it is actually out there and shipping. After getting some confirmation from Goo, our resident goon on the inside who works at Logitech:

Goo posted:I understand why you'd be suspicious, and I'm not going to try to change your mind about whether to purchase, but for future reference it honestly is our official Amazon storefront.

I went and put in an order. Mine should be here Tuesday.

SwissArmyDruid fucked around with this message at 07:41 on Feb 25, 2019

|

|

|

So how important is 8 GB of RAM compared to 6 for a GPU? I likely wouldn't be upgrading again for several years so I really want to get the best bang for my buck but preferably at $400 or lower. If 6 will be fine for 1080p/144hz, at least without running everything on low, I'll just buck up and get the 1660 Ti. Otherwise I guess I'll wait for a sale on a 2070.

|

|

|

|

|

You would be turning textures down. Performance otherwise isn't impacted until you run out of video memory, which is dependent upon the title you are playing.

|

|

|

|

Admiral Joeslop posted:So how important is 8 GB of RAM compared to 6 for a GPU? I likely wouldn't be upgrading again for several years so I really want to get the best bang for my buck but preferably at $400 or lower. If 6 will be fine for 1080p/144hz, at least without running everything on low, I'll just buck up and get the 1660 Ti. Otherwise I guess I'll wait for a sale on a 2070.

At 1080p probably not that much of a difference, but if you are planning on going to 1440p and using the card for a few years then the extra RAM might be needed more.

Is nvidia going to be doing 1670-1680s? I'd loving love a 1680Ti

|

|

|

|

At 1080p I wouldn't worry about it, it's at higher resolutions where it's potentially a problem.

|

|

|

|

I don't have any plans to upgrade past 1080p at this time. I suppose I'll look at 1660s then. What are the odds of getting some bundled with games this early? Getting The Division 2 included would certainly help.

|

|

|

|

|

Admiral Joeslop posted:So how important is 8 GB of RAM compared to 6 for a GPU? I likely wouldn't be upgrading again for several years so I really want to get the best bang for my buck but preferably at $400 or lower. If 6 will be fine for 1080p/144hz, at least without running everything on low, I'll just buck up and get the 1660 Ti. Otherwise I guess I'll wait for a sale on a 2070.

https://www.techspot.com/article/1785-nvidia-geforce-rtx-2060-vram-enough/

|

|

|

|

Admiral Joeslop posted:I don't have any plans to upgrade past 1080p at this time. I suppose I'll look at 1660s then. What are the odds of getting some bundled with games this early? Getting The Division 2 included would certainly help.

If you're not tied to Nvidia, 590s are great for 1080p and come with 8GB and The Division 2: https://www.newegg.com/Product/Product.aspx?Item=N82E16814150817&Description=RX%20590&cm_re=RX_590-_-14-150-817-_-Product

|

|

|

|

Cao Ni Ma posted:

Seems unlikely they would want to release a product to compete directly with the 2060, and something faster than the 1660 ti would. Also, according to the anandtech review, the 1660ti is a fully uncut tu116, so it seems like they'd have to either make a whole new gpu or laser off tensor and rtx cores from tu106 to make a higher end sku? I don't see why they would, given the 2060 is currently the best price/performance in its segment outside of catching vega 56/64 on a good sale. There's no real competitive pressure right now. But, stranger things have happened I guess...

|

|

|

|

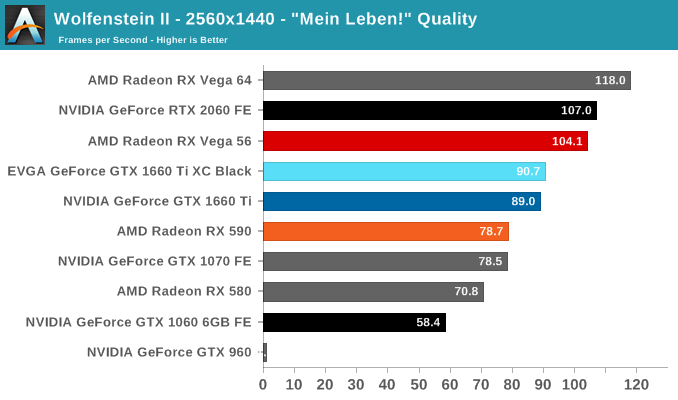

I think y'all overestimate how much more vram higher resolutions actually use. A typical forward rendering pipeline at 1080p uses tens of megabytes on various framebuffers. 4K is only going to multiply that by 4. So that'd be something like 60MB to 240MB. I think some people have inaccurate impressions here because higher resolutions do place much higher demands on memory bandwidth. But then GDDR6 comes with more bandwidth than 5... So, yeah, data:
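To put rough numbers on the framebuffer arithmetic above, here is a minimal sketch. The list of render targets is a made-up example of a simple forward renderer (an assumption for illustration, not measured from any real game), but it shows why the total lands in the tens of megabytes at 1080p and scales by exactly 4x at 4K.

```python
# Rough framebuffer-size estimate. The render-target list is an illustrative
# assumption (a hypothetical forward renderer), not data from a real game.

def render_target_bytes(width, height, bytes_per_pixel, count=1):
    """Memory used by `count` full-resolution render targets."""
    return width * height * bytes_per_pixel * count


def framebuffer_total_mb(width, height):
    """Total size in MiB of all assumed full-resolution buffers."""
    targets = [
        (8, 1),  # HDR colour buffer, RGBA16F = 8 bytes per pixel
        (4, 1),  # depth/stencil, D24S8 = 4 bytes per pixel
        (4, 3),  # swap chain, RGBA8, triple buffered
        (8, 1),  # one post-processing scratch buffer, RGBA16F
    ]
    total = sum(render_target_bytes(width, height, bpp, n) for bpp, n in targets)
    return total / (1024 ** 2)


mb_1080p = framebuffer_total_mb(1920, 1080)
mb_4k = framebuffer_total_mb(3840, 2160)
print(f"1080p: {mb_1080p:.0f} MB, 4K: {mb_4k:.0f} MB, ratio: {mb_4k / mb_1080p:.1f}x")
# -> 1080p: 63 MB, 4K: 253 MB, ratio: 4.0x
```

Textures, geometry and shadow maps are not in this estimate; those mostly depend on quality settings rather than output resolution, which is the point being made.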

|

|

|

|

If I'm reading this right, 6GB for 1080p won't be very different at all, like the other posters have said.

Arivia posted:If you're not tied to Nvidia, 590s are great for 1080p and come with 8GB and The Division 2 https://www.newegg.com/Product/Product.aspx?Item=N82E16814150817&Description=RX%20590&cm_re=RX_590-_-14-150-817-_-Product

This is a good price, but I'm not sure that card is much of an upgrade over my 970 according to GPU Benchmark. Are they still reliable for comparisons?

|

|

|

|

|

There's nothing wrong with that data, but I wouldn't put a lot of stock in it because the drivers keep everything around in case it might be reused. It's not a great metric for determining actual memory use. You can even make Unreal 1 engine games report they're using over 7GB on a 1080 (you could probably make it 10GB on an 11GB card too).

|

|

|

|

SwissArmyDruid posted:You might be interested to know that Logitech is re-issuing the MX518 with the guts updated to their HERO sensor. I've got one coming in on Tuesday to see if it holds up against my OG MX518 and the G502 I eventually settled on to replace it after going through five G400/G400s.

that's great, but i'm not going to use a mouse that doesn't have 3 buttons again if I can avoid it.

|

|

|

|

A 1660ti is ≈ a 1070. A 590 is a 580 pushed too far, which is 1060 class performance. I think the 590 outperforms the 1060 somewhat, at the cost of being off the deep end on the efficiency curve. For high-refresh-rate gaming I'd stick with the 1660.

|

|

|

|

Admiral Joeslop posted:If I'm reading this right, 6GB for 1080p won't be very different at all, like the other posters have said.

I don't know about GPU Benchmark, but you're right that it's not a significant upgrade. It's a 30% jump in performance, which for 259 dollars doesn't seem great. Honestly the RX 590 is a pretty bad value card outside of the game promotion; the 1660 Ti is 20 bucks more, but also 20% faster. A 50% leap for 279 dollars is far more compelling. With how quickly modern AAA games get discounted, I'd get the 1660 Ti at this price range and look for some cheap codes around launch.
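The arithmetic behind those percentages can be sketched as below, using only the figures quoted in the post and treating the GTX 970 as the 1.0 baseline. Collapsing each card's performance to a single relative number is a simplification; real results vary by game.

```python
# Figures quoted in the post above; the GTX 970 is the 1.0 baseline.
rx590_vs_970 = 1.30       # "30% jump in performance"
gtx1660ti_vs_590 = 1.20   # "20% faster"
rx590_price = 259         # dollars
gtx1660ti_price = 279     # dollars

# Relative speedups multiply rather than add: 1.30 * 1.20 = 1.56,
# i.e. roughly the "50% leap" over the 970.
gtx1660ti_vs_970 = rx590_vs_970 * gtx1660ti_vs_590


def perf_per_100_dollars(relative_perf, price):
    """Relative performance (970 = 1.0) bought per 100 dollars."""
    return relative_perf / price * 100


rx590_value = perf_per_100_dollars(rx590_vs_970, rx590_price)
gtx1660ti_value = perf_per_100_dollars(gtx1660ti_vs_970, gtx1660ti_price)

print(f"1660 Ti vs 970: +{(gtx1660ti_vs_970 - 1) * 100:.0f}%")
print(f"RX 590:  {rx590_value:.2f} per $100")
print(f"1660 Ti: {gtx1660ti_value:.2f} per $100")
```

On these numbers the 1660 Ti comes out ahead per dollar before the bundled game is counted, so the 590 only wins if the game is worth more to you than the gap.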

|

|

|

|

Truga posted:that's great, but i'm not going to use a mouse that doesn't have 3 buttons again if I can avoid it.

Here you go?

|

|

|

|

In many threads on tech forums I've read people seriously suggesting a 1070 over a 1660Ti. lol

https://mobile.twitter.com/SebAaltonen/status/1099378407186542592

I will quote someone who actually works with that tech. The anti 6 GB VRAM circlejerk gets boring fast.

|

|

|

|

Mr.PayDay posted:I will quote someone who actually works with that tech. The anti 6 GB Vram circlejerk gets boring fast.

Yeah, I mean, in a perfect world 8GB would be great, but the reality is that you'll run out of performance before you run out of meaningful VRAM at 6GB. The extra RAM sure as poo poo isn't making the difference for a 580/90, after all.

|

|

|

|

I was told 4gb of vram was plenty as long as its HBM (fury owners)

|

|

|

|

Don Lapre posted:I was told 4gb of vram was plenty as long as its HBM (fury owners)

i read that as "furry owners" and was like wtf have they gotten into now

|

|

|

|

DrDork posted:Yeah, I mean, in a perfect world 8GB would be great, but the reality is that you'll run out of performance before you run out of meaningful VRAM at 6GB. The extra RAM sure as poo poo isn't making the difference for a 580/90, after all.

It does, game-dependent. My understanding is that the 4GB and 8GB versions actually have the same memory bandwidth, too, so unless I'm missing something the performance difference should be purely down to quantity limitations.

|

|

|

|

Don Lapre posted:I was told 4gb of vram was plenty as long as its HBM (fury owners)

Yeah, no. Back in 2015 only AMD fans in denial were claiming that HBM with its 4096-bit interface and 512 GB/s would dunk on GDDR5 (the 980Ti had a 384-bit interface and 336 GB/s). On paper, AMD looked superb again; the reality check (Radeon VII deja vu, anyone?) with game fps and benchmarks made many wake up from their dreams. 4 GB VRAM was a big discussion back in the day because AMD sold the card as a 4K card that beats the 980.

I played on a 6 GB VRAM 980Ti until October 2018 at 1440p, everything Ultra. VRAM was never an issue, performance eventually was.

The Radeon VII disaster now has a completely "different" result: that card does not lose against Nvidia's lineup because of too little HBM VRAM this time, but because of "too much" in a sense, as it is a CC GPU with a price tag that makes it dead on arrival (not literally, but it won't harm Nvidia, how could it) unless you want to avoid Nvidia, support/buy the weaker AMD GPU and avoid RTX stuff as well. That's sadly hilarious. And bad for us gamers in every way.

Mr.PayDay fucked around with this message at 21:54 on Feb 25, 2019

|

|

|

Stickman posted:It does, game-dependent. My understanding is that the 4GB and 8GB versions actually have the same memory bandwidth, too, so unless I'm missing something the performance difference should be purely down to quantity limitations.

GamersNexus posted:GDDR5 speed, for instance, operates at 7Gbps on the reference 4GB model, as opposed to 8Gbps on the reference 8GB model

That said, in some cases the differences seem bigger than the memory bandwidth differences alone would imply.
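For a sense of scale on those bandwidth differences, a minimal sketch: peak memory bandwidth is just bus width in bytes times the per-pin data rate. The 7 and 8 Gbps rates and the 4096-bit and 384-bit widths come from the posts above; the 256-bit bus for the 4GB/8GB cards and the per-pin rates for the HBM card and the 980 Ti are assumptions filled in for illustration.

```python
def peak_bandwidth_gb_s(bus_width_bits, data_rate_gbps_per_pin):
    """Theoretical peak memory bandwidth in GB/s."""
    return bus_width_bits / 8 * data_rate_gbps_per_pin


# (bus width in bits, per-pin data rate in Gbps)
# Entries marked "assumed" are not stated in the thread.
cards = {
    "4GB reference model": (256, 7.0),   # 7 Gbps quoted; 256-bit bus assumed
    "8GB reference model": (256, 8.0),   # 8 Gbps quoted; 256-bit bus assumed
    "HBM card, 4096-bit": (4096, 1.0),   # width quoted; 1 Gbps per pin assumed
    "980 Ti, 384-bit": (384, 7.0),       # width quoted; 7 Gbps per pin assumed
}

for name, (width, rate) in cards.items():
    print(f"{name}: {peak_bandwidth_gb_s(width, rate):.0f} GB/s")
```

Under these assumptions the 4GB and 8GB models come out at 224 and 256 GB/s, about a 14% gap, and the last two entries reproduce the 512 GB/s and 336 GB/s figures quoted earlier in the thread.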

|

|

|

|

TheFluff posted:That said, in some cases the differences seem bigger than the memory bandwidth differences alone would imply.

Thanks! That's what I get for skimming. I'm a little disappointed that they didn't take the opportunity to test the pure effect of RAM quantity.

Stickman fucked around with this message at 22:02 on Feb 25, 2019

|

|

|

TheFluff posted:That said, in some cases the differences seem bigger than the memory bandwidth differences alone would imply.

Yeah, I mean, I'm not saying it's impossible for the RAM difference to ever make an impact. But the most substantial impacts are in situations where the performance is already atrocious (<30FPS), so it's more academic than anything at that point. The real point is that giving up the demonstrated 10-20% performance increase of the 1660Ti over the 1070 in actual today-games based on a theoretical future where 2GB of VRAM matters is almost certainly a bad trade (at retail prices, of course; if you can get a used 1070 for $200, that's a solid deal).

|

|

|

|

|


|

But there's no demonstrated 20% advantage, or even 10% really. This is literally the biggest case I've seen and mostly it's indistinguishable or the 1070 is faster. https://www.anandtech.com/show/13973/nvidia-gtx-1660-ti-review-feat-evga-xc-gaming/5 Still, assuming similar prices there's no reason to get a 1070 at this point, the 2 extra gigs of ram don't do poo poo on mine.

|

|

|