spacemang_spliff posted:It's wrong to hide from your just desserts?

| # ? Jun 10, 2024 10:34 |

The bad algorithm defender has logged on (it's me, I'm the technogoon). While there are plenty of great examples of racism in ML and racist algorithms, I don't feel like Twitter's image cropping algorithm is a good example of this.

In the case of the Obama/McConnell images, can you, as a critic of how the algorithm selected McConnell in both cases, articulate which face *should* have been selected in each case? Should it have been the top one or the bottom one? What if the images were oriented left/right instead? If you're an English speaker, you might say the left one should be selected, but if you're Japanese, you might say the right. Would either of you be "correct"?

Some people rightfully pointed out that the images could be modified in relatively small ways to reverse the selection: https://twitter.com/SergioSemJ/status/1307493041742254080

Or large changes in unaltered images could reverse the selection as well: https://twitter.com/BySaadGhamdi/status/1307661970750009344

I'm not trying to minimize the impact of bad algorithms. Quite the opposite: I "deleted"* my FB account when it came to light that FB allowed ads targeted to users who had an interest in "white genocide", which quite clearly leads to further marginalization of vulnerable groups, and FB just fuckin shrugged and was like "whaddya gonna do? It's da algorithms!"

I also don't want to downplay how algorithms, even in a relatively low-impact scenario as this, can shape human discourse. If it was a case of individual images of faces and McConnell was identified as a face and Obama wasn't, I would absolutely agree that the algorithm desperately needed work. In this case though? I don't know about you, but as I can't really say how a single face should be selected out of several faces, I don't feel I have grounds to criticize what actual selections are being made.

*nothing on FB is ever truly deleted

Lol

StashAugustine posted:
i think im now mentally prepared that if i don't get a job when i graduate i can just become the unabomber

are you gonna mail pipe bombs to random cows

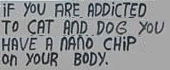

All watched over by machines of lovely whiteness

now that I understand the joke, the same post with Justin Trudeau is extremely funny.

I can't stop laughing at this I remembered it while driving and started laughing

https://twitter.com/nopinkspandex/status/1309982262973394944?s=21 very good

Spaced God posted:
As someone who works extensively with ~the algorithm~ and someone who is fortunate enough to work with some pretty cool folks in my current gig, I had a chat about this kinda stuff a while ago. One of the points I found interesting is that a really easy and really big way to shift the narrative from "the beep boop computer decided that black people are inferior" is to tear down the concept of ~the algorithm~ entirely during explanations and instead shift the phrasing to "the people who programmed this." It sort of takes the passive self-determination of the computer out of it.

coming from a background in the ~~humanities~~ before studying computer-fu, it is fascinating how hermetic the discipline is in regard to such things. take, for example, the basic brickheaded attitude found even in design and analysis: you're supposed to sit next to the person you are developing a system for, to make their work easier, and let them tell you what the problems are and what could make it better. then the coder goes "pffft I know better" and makes a very good piece of software that works well but delivers very little of the actual intended purpose, and a lot of people don't get it

(I had a colleague who realized that problem while working on a credit services software system and he went loving ballistic - he figured out that his code, his labor, was helping people get into debt and he left asap)

A Buttery Pastry posted:
Congratulations, you might just be the next pussy-recognizer goon. Call your local porn empire and ask them if they need someone to identify all the performers in their archive footage.

A job I was destined for.

SubnormalityStairs posted:
The bad algorithm defender has logged on (it's me, I'm the technogoon). While there are plenty of great examples of racism in ML and racist algorithms, I don't feel like Twitter's image cropping algorithm is a good example of this.

Well that's just dumb

StashAugustine posted:butlerian jihad now

lol

i programmed my computer to tell me if it's racist and it said no. case closed
