
TrueNAS seems to have fumbled their SCALE release update. The Dashboard appears broken and it pretends that there's still an upgrade to RELEASE. And the apt tools don't have an execute flag either. I should stop updating my poo poo ASAP.


| # ? Jun 3, 2024 22:42 |


Klyith posted: Seagate isn't a very big player in SSDs, and they don't make their own controllers so it might be hard to blame them in particular. I think the most obvious candidates would be Micron/Crucial or Intel (even though Intel SSDs aren't really Intel anymore).

I got the feeling that Seagate Barracudas in NVMe were pretty bare bones. It's a Phison controller on there so it would make sense to me if those were just a lot less tolerant of power loss with no capacitors. Although the fact the data was flushed is really weird how was that not written??

It'd be the one that would surprise me the least anyway; Micron or Intel seem less likely just based on running a lot of NVMe SSDs in test setups (we didn't check for data loss on powering off the drives though, not something of interest).


There were reports of flush failures specifically on M1 Macs too, which I think is mostly Kioxia ATM... Not validating this functionality on consumer-destined flash at the bottom of the bin is one thing, which is why it caused ruffled feathers in Apple land; if this is a much wider problem than a firmware-specific macOS problem...  If its industry wide, haha


priznat posted: It's a Phison controller on there so it would make sense to me if those were just a lot less tolerant of power loss with no capacitors. Although the fact the data was flushed is really weird how was that not written??

Consumer SSDs have a minimal amount of capacitors. They have enough juice to finish the active write/erase, not enough to flush a full cache to disk. That's why SSDs don't corrupt or kill themselves from a power-out and SD cards do. With zero power protection, if you cut power during a write or erase it can leave pages in a Phantom Zone where they become permanently unreliable.

If you look at enterprise SSDs with real data protection they're usually m.2 110 because they need room for a bunch of kinda big tantalum super-caps. That is the type of juice you need to fully write cache to disk. The WD Red SN700 he tested isn't that type of enterprise SSD, it's just a consumer SN750 with some tweaks.

OTOH all of that has little or no bearing on what he was testing, because if the drive says a flush is complete it should be done writing.


Klyith posted: OTOH all of that has little or no bearing on what he was testing, because if the drive says a flush is complete it should be done writing.

Yeah this is exactly it, I wouldn't be surprised if a consumer drive lost data in cache on a power loss because I am very aware they don't have the caps that enterprise ones do, but losing it after a cache flush is really weird. When would it be written to flash if it isn't doing it then? Was the drive just saying yeah yeah it's done if not being saturated just to boost performance numbers? You'd think the performance would crater if there are sustained writes going on.


Crunchy Black posted: If its industry wide, haha


Buy an external lead acid based capacitor.


BlankSystemDaemon posted: Anything that doesn't have a capacitor is likely subject to this, so yes it's an industry-wide problem because the majority of SSDs don't have capacitors.

I'm aware of that but controllers failing to properly communicate to the OS that a flush is complete when it is not is worse than an outright power failure right? I'm mobile posting so I can elaborate on this more later.


Crunchy Black posted: I'm aware of that but controllers failing to properly communicate to the OS that a flush is complete when it is not is worse than an outright power failure right? I'm mobile posting so I can elaborate on this more later.

That's what I'm taking away from this. Unless I don't know what that message is supposed to mean (my understanding is it's supposed to mean it's committed to whatever the underlying storage media is in a way that the media no longer needs power) the controllers are lying to the host. Other than juking performance figures or a flat out bug I can't imagine why else this would be happening.


Oooooooh this twitter response thread makes a ton of sense: https://twitter.com/LebanonJon/status/1496152838979743744

When I save CatPic.jpg to disk the OS might send the data to the drive and call for a flush to be sure it's written. On a HDD that is pretty simple and straightforward: write the data. But on SSDs that involves 2 things:

1. the data for CatPic.jpg
2. the FTL map, which is a map table from the conventional LBA sector numbers of HDDs that the PC and OS understand to the much more complex, and ever-changing, layout of flash sectors

The data for CatPic.jpg can get written to flash, but without the map so the drive knows the location it is useless. And the whole FTL is tens or 100s of MB. Writing that whole thing every flush would be a real problem. It would destroy performance and it would vastly accelerate write wear because you're adding a ton of data to even tiny writes.

So given that the original guy's testing has consistent results, but is likely doing the same thing every time, I'd expect there would be different write+flush patterns with different results. Maybe you could produce correct behavior on the 2 "bad" drives and data loss on the 2 "good" ones. It'll depend a lot on the firmware.

tl;dr if you need enterprise data integrity, buy enterprise SSDs
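To make the data-versus-map point concrete, here is a toy model in Python. It is only a sketch: `ToyFTL` and all of its methods are made up for illustration and do not describe how any real controller firmware works. The one idea it shows is that a page of data can physically sit in flash and still be unreachable if the LBA map pointing at it was only ever held in volatile memory.

```python
class ToyFTL:
    """Toy flash translation layer (hypothetical): maps an LBA to a flash page index."""

    def __init__(self):
        self.flash = []      # append-only "NAND" pages; these survive power loss
        self.ram_map = {}    # working LBA -> page map; lost on power loss
        self.saved_map = {}  # last copy of the map persisted to flash

    def write(self, lba, data):
        # Data always lands in a fresh page; only the in-RAM map is updated.
        self.flash.append(data)
        self.ram_map[lba] = len(self.flash) - 1

    def flush(self):
        # An honest flush persists the map as well as the data.
        self.saved_map = dict(self.ram_map)

    def power_loss(self):
        # After power returns, only the persisted map is available.
        self.ram_map = dict(self.saved_map)

    def read(self, lba):
        page = self.ram_map.get(lba)
        return None if page is None else self.flash[page]


ftl = ToyFTL()

# Case 1: data written, map never persisted, power cut.
ftl.write(7, b"CatPic.jpg bytes")
ftl.power_loss()
lost = ftl.read(7)  # the bytes are still in ftl.flash, but nothing points at them

# Case 2: same write, but the flush persists the map before the power cut.
ftl.write(7, b"CatPic.jpg bytes")
ftl.flush()
ftl.power_loss()
kept = ftl.read(7)
```

A real drive would presumably journal small map updates rather than copy the whole table, which is the cost trade-off described above; the sketch copies the full map only to stay short.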


What's loving dumb is that even NVMe SSDs still rely on behaving like disks, when they fundamentally speaking aren't disks, and even the disks we have nowadays aren't the kind of disks we had when the specifications we're using were designed.

Not only do modern disks not rely on cylinders or tracks, SSDs need an even bigger translation layer than the disks do, because at least modern disks still have rotating platters where SSDs have to be able to report a rotational speed of zero, and also have to implement a log-structured data structure with garbage collection.

Meanwhile, open-channel SSD implementations are few and far between, and the biggest one (LightNVM, which inspired the above work) seems to be largely ignored with industry-backed researchers having moved on to Zoned NameSpaces (which is linked directly from the lightnvm.org site).


Crunchy Black posted: TrueNAS Scale is out.

I was so hype for Corral but I dutifully waited a bit. Finally broke down, installed it, got everything migrated and happy, and then within a week it was canned. Ran that until 11 was well baked after a few months of patches, and wanting to keep using my containers I moved things into their RancherOS VM integration. Then that thing was canned and left to rot. So I finally did what I should have done from the start but didn't want to janitor, and stood up my own VM for containers. And now it's done and I know what's in there and how I made it janitor itself and you can't touch it.

That said, I'd like to move to SCALE someday, have one less layer to manage, and maybe even enable some of the weird hardware passthrough stuff I'd like to do (let me have my Headless Automatic Ripping Machine). I'm just not quite ready to be hurt again but hmu in a year maybe.


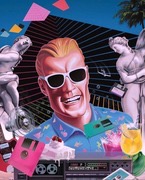

Klyith posted:Oooooooh this twitter response thread makes a ton of sense: I have a Samsung SM953 I got a few years ago on ebay and it's cool but the 28110 form factor is too long for most onboard slots so it sits in a PCI-E slot adapter. Also, it's not super fast compared to today's NVMe drives. This isn't my picture, but look at all those caps:


BlankSystemDaemon posted: What's loving dumb is that even NVMe SSDs still rely on behaving like disks, when they fundamentally speaking aren't disks, and even the disks we have nowadays aren't the kind of disks we had when the specifications we're using were designed.

I believe the main problem with Open Channel was that wear leveling and dealing with stuff like read disturb was largely left for the host side, with the FW basically only sending notifications that it needs to be done soonish. With ZNS that's brought back to the drive, though the overall structure is somewhat similar (if you squint enough). If I remember correctly Bjorling was originally involved with OCSSD too, before moving to Western Digital and Zoned Namespace stuff.

EDIT: Also from what I can quickly check, the pblk driver was removed from the Linux kernel, so Open Channel is probably dead (though still very neat to check out, because it really lays out how NAND drives behave).

mmkay fucked around with this message at 16:21 on Feb 23, 2022


Chilled Milk posted: I was so hype for Corral but I dutifully waited a bit. Finally broke down, installed it, got everything migrated and happy, and then within a week it was canned.

Fukkin exact same lol


If I want to skip magnetic storage in my next PC, can I get away with NVMe for the system and SATA for the bulk data like music/video? Am I leaving a lot of speed on the table (or long-term reliability given that SSDs don't fail any way other than totally)?

Shumagorath fucked around with this message at 02:37 on Feb 26, 2022


Shumagorath posted: If I want to skip magnetic storage in my next PC, can I get away with NVMe for the system and SATA for the bulk data like music/video? Am I leaving a lot of speed on the table (or long-term reliability given that SSDs don't fail any way other than totally)?

NVMe has a much greater peak bandwidth and allows for significantly deeper queues. For bulk data on a home PC the latter is almost irrelevant and the former only matters if it's bottlenecking something else. There is no difference in reliability between the two interfaces.

I still have spinning rust in my desktop but I'm doing a similar thing: NVMe for the OS and my main modern games, cheap SATA SSDs for other modern games, and then my downloads folder and older games are on the hard drives. Works great.


Sorry for reliability I meant spinning discs. I've only ever had one fail on me without warning and it was an 00's Maxtor. The one SSD I had die on me was instant, total failure. I pay for business-class remote backups with a local option so maybe I'll get a NAS somewhere down the line if gigabit internet doesn't cut it.


Oh yea, comparing hard drives to SSDs my experience has been the same. The only immediate total hard drive failures I've experienced have been related to external events like physical damage or power surges. Any that just failed on their own did so in a more gradual manner where some data might be corrupted but the rest can be read, whereas with SSDs, if you're lucky, you might get a period of significantly reduced performance before it just ceases to exist.

That said I've had exactly one major name brand SSD fail with no obvious external cause in all the machines I'm responsible for. All the other failures have been no-name or stuff like Microcenter's Inland brand.

I personally wouldn't worry about it for the sorts of things I consider "bulk files". Important and irreplaceable stuff exists in multiple places, everything else is either unimportant or easily replaceable.


there have been some incredibly dumb firmware bugs in both harddrives and SSDs where the firmware starts crashing because for instance the power-on hours counter overflows and becomes a negative number, which is extra fun if you built a raid solution using identical drives and it happens to all of them at once. another reason to always have independent backups of data you can't afford to lose.
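A quick Python sketch of how a counter like that goes negative. The 16-bit signed width and the helper name `as_int16` are assumptions picked for illustration, not the details of any particular drive's firmware:

```python
def as_int16(value: int) -> int:
    """Interpret the low 16 bits of value as a signed two's-complement integer."""
    value &= 0xFFFF
    return value - 0x10000 if value & 0x8000 else value


last_good_hour = as_int16(32767)       # largest value a signed 16-bit counter can hold
first_bad_hour = as_int16(32767 + 1)   # one more hour wraps around to a negative number

# How long a drive would have to stay powered on before hitting the wrap.
years_until_wrap = 32768 / (24 * 365)

print(last_good_hour, first_bad_hour, round(years_until_wrap, 2))
```

Under that assumed width, identical drives powered on together would all hit the wrap in the same hour, which is why a raid built from one batch gives no protection against this kind of bug.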


Shumagorath posted: If I want to skip magnetic storage in my next PC, can I get away with NVMe for the system and SATA for the bulk data like music/video? Am I leaving a lot of speed on the table (or long-term reliability given that SSDs don't fail any way other than totally)?

Bulk media is absolutely ok on SATA. This is also a totally acceptable purpose for cheaper QLC SSDs, for example a Crucial BX500 or WD Green, that are generally not recommended for system drives. QLC can have a big performance penalty on writes, but for this job it'll be annoying exactly once, when you're copying a TB of data over from HDDs.

If you're going all-solid-state IMO it's not worth raids or such that cost capacity, better to just have a solid backup system.

Shumagorath posted: Sorry for reliability I meant spinning discs. I've only ever had one fail on me without warning and it was an 00's Maxtor. The one SSD I had die on me was instant, total failure.

I still haven't had a SSD die, including an ancient OCZ that should be dead by now, but yeah I'd generally not expect a warning. If they're dying from actual wear then you probably will get warning, but wearing out a SSD is not a thing that happens in PC use.

However I've definitely had HDDs die without warning, or at least inadequate warning to do anything useful. More often than not in fact.


wolrah posted: I still have spinning rust in my desktop but I'm doing a similar thing: NVMe for the OS and my main modern games, cheap SATA SSDs for other modern games, and then my downloads folder and older games are on the hard drives. Works great.

That's what I do but I'm getting real close to pulling the trigger on moving all the spinning rust stuff to the spinning rust on the NAS, gigabit isn't the bottleneck in that situation anyways, but alas


So I aborted my new TrueNAS build when the seller sent me a Lian-Li case that had weird silver plastic wrap all over the black aluminum. Deal was definitely too good to be true.

But... I found a great deal on a Sans Digital 8 bay eSATA JBOD enclosure for $40, also seems too good to be true since the Chia miners have scooped most of these up. But if it works I am going to use it for a new 8-disk pool on my current TrueNAS box which is an HP Prodesk with a Pentium G4560 and 16GB RAM.

Is it as easy as just adding in the PCI-e card, connect to the enclosure, and create pool? Anyone have experience with these JBOD enclosures with TrueNAS?

On further searching it seems like this case can be upgraded to be compatible with newer LSI cards per this thread: https://forums.serverbuilds.net/t/guide-8-bay-mini-das/1106/2

Smashing Link fucked around with this message at 19:57 on Feb 28, 2022


I don't have any TrueNAS experience but yeah JBOD just gets passed to the OS like a bunch of normal rear end disks.


Smashing Link posted: So I aborted my new TrueNAS build when the seller sent me a Lian-Li case that had weird silver plastic wrap all over the black aluminum. Deal was definitely too good to be true.

I actually took a regular computer case with an 8088 to 8087 adapter and hooked the drives up to that, used a psu tester to turn on the power supply and fans and put an external HBA in my truenas server and connected via a pair of 8088 cables. Worked perfectly. Was able to add eight disks that way. I just turn on the external box before turning on the server first. This has been running for over a year without any problems. Ended up building a second one for my other truenas server. Now I have two 16 port external HBAs ready for when I want to expand the servers again.


Nulldevice posted: I actually took a regular computer case with an 8088 to 8087 adapter and hooked the drives up to that, used a psu tester to turn on the power supply and fans and put an external HBA in my truenas server and connected via a pair of 8088 cables. Worked perfectly. Was able to add eight disks that way. I just turn on the external box before turning on the server first. This has been running for over a year without any problems. Ended up building a second one for my other truenas server. Now I have two 16 port external HBAs ready for when I want to expand the servers again.

HBA prices seem to have gone up! 16 port externals are $50+ now, and there are rumors of knockoffs being sold if you listen to Reddit.

Smashing Link fucked around with this message at 15:22 on Mar 1, 2022


Smashing Link posted: HBA prices seem to have gone up! 16 port externals are $50+ now, and there are rumors of knockoffs being sold if you listen to Reddit.


Another one? Or is this still Chia, or whatever the gently caress.


Just Chia afaik. Finally was able to get some cheap Intel SAS expanders thanks to the crash!


Nulldevice posted: I actually took a regular computer case with an 8088 to 8087 adapter and hooked the drives up to that, used a psu tester to turn on the power supply and fans and put an external HBA in my truenas server and connected via a pair of 8088 cables. Worked perfectly. Was able to add eight disks that way. I just turn on the external box before turning on the server first. This has been running for over a year without any problems. Ended up building a second one for my other truenas server. Now I have two 16 port external HBAs ready for when I want to expand the servers again.

I was thinking of doing something similar to this. I have a shallow depth network cabinet, so I can't toss in a normal length disk expansion shelf. Once I have to expand again, I was thinking about doing some hillbilly setup with a rackmount shelf, external psu, and a few of those 4x 3.5" hotswap 5.25 bay dealios. Who knows though, with increasing drive sizes, I may be able to just replace one of the vdevs with larger disks down the road.


Trip report for the HP S01-PF1013W discussed some pages ago. Grabbed one for like $110 shipped on a whim to see if it would work for my 16 drives in a SAS expander currently hooked up to a 4-port LSI in a Fractal Design 804 frankenstein PC. If it doesn't work out I've got something I can donate to some needy folks here with my old webcam and monitor.

It's rather small! It's smaller than the eGPU Thunderbolt chassis I was intending to replace for pure file serving duties. The Celeron G5900 has basically the same performance characteristics as the ancient Haswell i3-4130 that's going to go, but for 7w for the whole business I think it's worth a shot (still higher than my i7-8559U NUC but once you add my Thunderbolt chassis for the SAS card it all goes out the window). Amazingly enough, the official HCL lists a 10700F as compatible. I don't know how it'll get properly cooled with this heatsink shroud combo, but it's going to accept it at the hardware layer separate from the mechanics at least, so that's an exercise for another year. The PSU is a 180w gold PSU which is another factor for how the power usage is so low.

So what's the catch? There are 2 PCI-e slots available and they're both half height slots. I forgot to check my HBAs and it looks like the only cards I've got that can work with half height are my LSI cards with only two internal SFF-8087 connectors, and given there's no internal space for any meaningful number of drives (16+) they'd need to be routed to the outside somehow, which kind of rules out the SFF-8087 to SFF-8088 adapter based option without some creative case modding and sheet metal cutting.

Another option would be to buy two different HBAs to handle the 16 SATA drives I've got in a DAS (that's 4 total SFF-8088 connectors). Given I already have way too many LSI HBAs from various NAS projects and I have serious doubts I can get daisy chaining to work given these are SATA drives rather than SAS, that would be another $100 in HBAs.

Another option is to buy a low profile 4 port LSI card but due to physical dimension restrictions this can only work in a half height slot if the HBA uses SFF-8644 slots. SFF-8644 cables on any end are 2-3 times more expensive than the SFF-8088 cables I already have. So in the end you'd spend more on cables and cards than on this machine whatever approach is taken - this is kind of a duh given $110 for a computer is pretty drat cheap frankly.

I would recommend this machine for lower spec larger disk arrays in theory but this implies one is already going to buy something like a Supermicro CSE836 which has the mount points for a motherboard and negate the point of all of this external drive tomfoolery and likely come out to be more power efficient anyway given that comes with at least 800w gold+ PSUs so you'd need around 100w sustained load to still wind up with a loss of another 12w+ due to PSU efficiency loss. In terms of cost efficiency unless one manages to sell the PSUs the CSE836 units come with you're looking at needing to shell out more for the 400w PSUs and the energy savings might not add up with the up-front costs anyway.

My primary concern over power usage is with excessive heat in my basement when there's enough issues with mold and dampness in the area I don't need to exacerbate.

TL;DR - probably not worth it for dedicated NAS purposes, make sure that your intended expansion cards are compatible with half height single slot width PCI-e slots


Looking for a sanity check as I go to add another four drives to my ZFS setup. Here is the current setup:

code:

code:

code:

Also before I totally lock myself in with this, is there any strong reason I would not want to add another 4x12TB Z1 vdev to my existing one?


I just talked myself and someone into selling me a pair of Mellanox ConnectX4 cards for less than $200 combined because they're OEM branded by HPE; one is FlexibleLOM form-factor that fits in my big iron, and the other is a half-height daughterboard that fits in my low-power server. This made me think of the posts Combat Pretzel (I think?) made regarding RDMA for iSCSI on FreeBSD, and I think maybe I and/or Combat Pretzel need to look into iser(8).

wolrah posted: Looking for a sanity check as I go to add another four drives to my ZFS setup.

Yes, I'm pretty sure that's an artefact of the dry-run, as the regular vdev nomenclature is a result of internal numbering in ZFS that you can also see with zdb(8) if memory serves.

There may be reasons why you don't want to, but they're use-case dependent; are you adding disks that are bigger than the ones in your pool? If so you might consider enabling autoexpand documented in zpoolprops(7). If you're not completely out of space, can you wait until raidz expansion lands?

Other than that, subsequent writes after the vdev has been added should favour being written to the empty vdev, until they balance each other out (which should happen before the pool reaches 80% capacity, if memory serves).

Props for using dry-run but if you're in doubt with these kinds of things even after doing dry-run, remember zpool-checkpoint(8)!

BlankSystemDaemon fucked around with this message at 06:34 on Mar 2, 2022


BlankSystemDaemon posted: There may be reasons why you don't want to, but they're use-case dependent; are you adding disks that are bigger than the ones in your pool?

I had a 3x12TB Z1 previously, then bought five more drives, built a 4x12 pool to move everything to, and now this is the original three drives plus the last new one.

quote: If so you might consider enabling autoexpand documented in zpoolprops(7). If you're not completely out of space, can you wait until raidz expansion lands?

quote: Props for using dry-run but if you're in doubt with these kinds of things even after doing dry-run, remember zpool-checkpoint(8)!


I'm thinking of updating my DrivePool server's disks and rebuilding it as an UnRAID server. Below is what I currently have, what I'm planning on doing, and a few questions I've had. Does it seem like a viable plan, or am I missing something? My current setup:

What I'm thinking of doing

Also, I have a few old 2.5" SSDs from other machines. Usually I would use them as a temporary download location, so the main data pool is largely static. In UnRAID, would one of those work well as a cache disk? I see that if it dies before it copies to the pool that I'd lose the data on it, but I'm more curious if there are reasons other than total failure for why I shouldn't use an old SSD as a cache disk? Also, can I create a 2nd pool with one of them for the purpose of a download location for something like a SABnzbd docker instance?

Is BestBuy a good source for disks? I don't trust Amazon's Frustration Free packaging to ship them securely or be new drives, and I've heard NewEgg has issues too depending on who sells/ships the disk through them.

P.S. the DL380 has a HP p420i raid controller in it; the original documentation for it says it's limited to 4TB drives. Does anyone know if the latest firmware can support 8TB SATA drives? Due to the redundant power supplies, dual CPUs, and 128GB of memory I'm wondering if I should look more into how to use that host for UnRAID instead. The biggest issue I can think of is that unlike with the DrivePool disks I wouldn't be able to plug the DL380's 3TB disks into another machine to get the data off.

Any thoughts on all of that would be appreciated. thanks

Waffle Conspiracy fucked around with this message at 16:24 on Mar 2, 2022


wolrah posted: Same exact drives across the board, all shucked WD 12TBs.

You can't up the redundancy level without negating the copy-on-write and checksumming principles that ZFS is designed with, without implementing Block Pointer Rewrite, and as I've mentioned before it's such a complicated thing that it'll have to be the last thing ever added to ZFS.

And no, you don't get partial double-redundancy - data is striped across multiple vdevs.

Yeah, zpool checkpoint is a really amazing feature - it works by making ZFS' nine on-disk uberblocks read-only, and creating an append-only log on a separate part of the disk, so it should be used sparingly but is fantastic for administrative commands because the best-case scenario involves absolutely no downtime and the worst-case allows you to revert basically any fuckup that you can conceive of. I think it's also a (Open)ZFS-exclusive feature?


BlankSystemDaemon posted: And no, you don't get partial double-redundancy - data is striped across multiple vdevs.

Pretty sure they mean that each vdev can sustain a single catastrophic disk failure without any data loss. "Double redundancy" is probably the wrong word, but it's certainly more fault-tolerant than a single 8-disk raidz vdev.
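The fault-tolerance comparison can be counted out with a short Python sketch. It is a simplified model under stated assumptions: only whole-disk failures are considered, the pool is lost as soon as any one vdev loses more disks than its parity level (since data is striped across vdevs), and the helper names `survives` and `survival_fraction` are made up for this example.

```python
from itertools import combinations


def survives(failed, vdevs, parity):
    """True if no vdev has lost more disks than its parity level."""
    return all(len(set(vdev) & set(failed)) <= parity for vdev in vdevs)


def survival_fraction(vdevs, parity, n_failed):
    """Fraction of all n_failed-disk failure combinations the pool survives."""
    disks = [disk for vdev in vdevs for disk in vdev]
    cases = list(combinations(disks, n_failed))
    return sum(survives(case, vdevs, parity) for case in cases) / len(cases)


two_raidz1 = [range(0, 4), range(4, 8)]  # 2 vdevs, 4 disks each, single parity
one_raidz1 = [range(0, 8)]               # 1 vdev, 8 disks, single parity
one_raidz2 = [range(0, 8)]               # 1 vdev, 8 disks, double parity

for label, vdevs, parity in [
    ("2 x 4-disk raidz1", two_raidz1, 1),
    ("1 x 8-disk raidz1", one_raidz1, 1),
    ("1 x 8-disk raidz2", one_raidz2, 2),
]:
    print(label, round(survival_fraction(vdevs, parity, 2), 3))
```

In this model the two-vdev layout survives a second failure only when it lands in the other vdev (16 of the 28 possible pairs), the single 8-disk raidz1 survives none, and raidz2 survives all of them.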


BlankSystemDaemon posted: And no, you don't get partial double-redundancy - data is striped across multiple vdevs.

In hindsight what I probably should have done is build a five-drive Z2 out of the new disks which would have allowed me to copy over all of the existing data and then expand in the three older drives when that option became available. Now that I'm thinking about that I'm wondering if I know anyone local I could borrow a 12+ TB disk from to temporarily use in doing something like that....


I recently put together an 8 drive raidz2 pool. I would recommend anyone considering the same thing to understand the impact of different topologies on iops. It's fine for bulk storage, but you might be disappointed by the performance if it is used for any active workloads. I'm seriously considering breaking things apart into two pools: Raidz1 for media collection and mirrored pool for other stuff that gets more random read/write.

CopperHound fucked around with this message at 18:04 on Mar 2, 2022
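For a rough feel of the topology effect, here is a back-of-envelope Python sketch. It leans on a common rule of thumb, which is an assumption here and not a benchmark: each vdev, whether raidz or mirror, contributes about one disk's worth of random write IOPS, so the pool total scales with the number of vdevs and not the number of disks. The 150 IOPS per disk figure and the name `estimate_random_iops` are placeholders.

```python
def estimate_random_iops(vdev_count: int, per_disk_iops: int = 150) -> int:
    """Rule-of-thumb estimate (assumption): one disk's worth of random write IOPS per vdev."""
    return vdev_count * per_disk_iops


# Three ways to arrange the same 8 drives, keyed by layout, valued by vdev count.
layouts = {
    "1 x 8-wide raidz2": 1,
    "2 x 4-wide raidz1": 2,
    "4 x 2-way mirror": 4,
}

estimates = {name: estimate_random_iops(count) for name, count in layouts.items()}

for name, iops in estimates.items():
    print(f"{name}: ~{iops} random write IOPS")
```

Real numbers depend heavily on record size, caching, and workload, so treat the output as a ranking of layouts and not a prediction.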


BlankSystemDaemon posted: This made me think of the posts Combat Pretzel (I think?) made regarding RDMA for iSCSI on FreeBSD, and I think maybe I and/or Combat Pretzel need to look into iser(8).

200bux for a pair of ConnectX4 seems nice, if they're faster than 10Gbit.

Combat Pretzel fucked around with this message at 18:04 on Mar 2, 2022
