
Hubis posted:You're going to run into significant z-buffer precision problems leading to Z-fighting for things that are near one another (within one exponential precision value from one another) unless they are drawn in strict back-to-front order, including sub-draw call triangle order.

Thanks. That won't be a problem; the way my data's organised, there's nothing but similar-size polys at similar distances.


| # ? Apr 26, 2024 01:29 |


magicalblender posted:Also, am I crazy, or has the page for glutKeyboardUpFunc disappeared from the OpenGL documentation? I could swear I was looking at it a couple weeks ago, but it isn't there now.

Now I need to see if I can compile OpenGL 0.57.0 in ActivePerl CPAN. :/


What does GL do when you try to upload a non-pow2 texture without using the non-pow2 extension? When I do this, it works just as a pow2 texture does, but:
(a) Does it upscale the texture to pow2 internally, or leave it as it is?
(b) Is the behaviour in (a) dependent on GL version or anything else?
(c) Is there a way to get the width/height of an uploaded texture?


Depends on your graphics card. Mine (Quadro NVS 140M) just displays it as normal. Others, like a 5 year old ATI, show black polygons that are above everything in the z-buffer.
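Since the result depends on the card, one defensive option is to pad the image up to power-of-two dimensions yourself before uploading. The sketch below is only an assumption about what an application might do, not a description of what any driver does internally; `nextPow2` and `isPow2` are hypothetical helper names. For question (c), `glGetTexLevelParameteriv` with `GL_TEXTURE_WIDTH` and `GL_TEXTURE_HEIGHT` reports the dimensions GL stored for a given mip level.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper: true if n is already a power of two.
bool isPow2(std::uint32_t n) {
    return n != 0 && (n & (n - 1)) == 0;
}

// Hypothetical helper: smallest power of two that is >= n.
// Valid for n in 1..2^31 so the shift below cannot overflow.
std::uint32_t nextPow2(std::uint32_t n) {
    assert(n >= 1 && n <= 0x80000000u);
    std::uint32_t p = 1;
    while (p < n) {
        p <<= 1;
    }
    return p;
}
```

A 640x480 image would be padded to 1024x512 this way, with the texture coordinates scaled by 640/1024 and 480/512 so only the original region is sampled.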


So I was trying to make a breakout clone in a day and I got most of it done, but I'm having this bizarre problem where unless it's running in the VS debugger, it doesn't create half the stuff that it should (like boxes for the ball to hit and the paddle). I have no idea how to fix it; it's got quite a bit of quick hardcoded stuff I don't usually do, so I don't really know what's causing it at all.

Here it is in the debugger: [screenshot: running in the debugger]

And out of it: [screenshot: running outside the debugger]

Here's the project file and whatnot; ignore the name of the project, it was just an old project already set up for OGL/SDL. Thanks in advance if anyone can help or has any idea what's causing it.


I presume that it also runs in plain debug mode, rather than only in the debugger? If this is the case, then it could be a whole number of things. My money's on uninitialised variables - see this page for more info.


magicalblender posted:What's better, drawing pixels directly, or using textures? Poking around the OpenGL documentation, I see that I can put pixel data into a raster and glDrawPixels() it onto the screen. I can also put the pixel data onto a texture, put the texture on a quad, and put that onto the screen. If I'm just doing two-dimensional imaging, then which is preferable?


ultra-inquisitor posted:I presume that it also runs in plain debug mode, rather than only in the debugger? If this is the case, then it could be a whole number of things. My money's on uninitialised variables - see this page for more info.

I would agree. Looking through the code, you have no initialization for m_bIsEnabled in BreakoutBox. You also never call your Enable function, but you do check IsEnabled in the Update function of BreakoutApp. That's where I would start.
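A minimal sketch of the fix being suggested, using the names from the post (`BreakoutBox`, `m_bIsEnabled`, `Enable`, `IsEnabled`); the rest of the real class is unknown, so everything else here is an assumption. The point is only that the flag gets a defined value in the constructor instead of whatever happened to be in memory, which can differ between a run under the debugger and a run outside it.

```cpp
#include <cassert>

// Stand-in for the BreakoutBox class from the thread; only the enabled
// flag is modelled here.
class BreakoutBox {
public:
    // Give the flag a defined starting value. Whether false or true is the
    // right default depends on the game; false is an assumption.
    BreakoutBox() : m_bIsEnabled(false) {}

    void Enable() { m_bIsEnabled = true; }
    bool IsEnabled() const { return m_bIsEnabled; }

private:
    bool m_bIsEnabled;
};
```

With the flag defaulting to false, each box has to be switched on explicitly with `Enable()` when it is created, so the `IsEnabled` check in `Update` behaves the same in every build.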


I have a question about DirectX9: I am trying to read vertex data from a mesh loaded from a file. My issue is that I don't know what the vertex data looks like aside from the fact that it has position data and a normal. My knowledge of DirectX isn't that extensive, so I'm sorry if the answer is obvious, but I haven't come across it. code:


Luminous posted:I would agree.

Ha, I spent two days after reading ultra-inquisitor's post looking for something uninitialised and completely missed it. That's sorted it, thanks fellas.


Eponym posted:Ideally, I'd like to do something like D3DVector3 normal = pVert[index].Normal. My issue is that I don't know what to define struct VERTEX with or what order in which to define its elements. How do I find out the vertex format?

http://msdn.microsoft.com/en-us/library/bb172630(VS.85).aspx
Use CreateVertexDeclaration to create an instance of it: http://msdn.microsoft.com/en-us/library/bb174365(VS.85).aspx
Use SetVertexDeclaration to bind it: http://msdn.microsoft.com/en-us/library/bb174464(VS.85).aspx
Use SetStreamSource to assign the vertex buffer to a stream slot: http://msdn.microsoft.com/en-us/library/bb174459(VS.85).aspx
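For the reading side of the question, here is a hedged sketch of what `struct VERTEX` could look like if the mesh turns out to use position followed by normal and nothing else (the FVF `D3DFVF_XYZ | D3DFVF_NORMAL`). That layout is an assumption; for a D3DX mesh, `GetFVF()` and `GetNumBytesPerVertex()` should tell you what is actually in the buffer. `Vec3`, `Vertex` and `readNormal` are illustrative names, and the code steps through the buffer by the reported stride rather than by `sizeof(Vertex)` so extra per-vertex data does not throw the indexing off.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>

// Assumed layout: position first, then normal, 24 bytes in total.
// Verify against the real mesh before relying on it.
struct Vec3 { float x, y, z; };
struct Vertex {
    Vec3 position;
    Vec3 normal;
};
static_assert(sizeof(Vertex) == 24, "unexpected padding in Vertex");

// Reads the normal of vertex `index` from a raw (locked) vertex buffer.
// `stride` is the number of bytes per vertex reported by the mesh.
Vec3 readNormal(const unsigned char* data, std::size_t stride, std::size_t index) {
    assert(stride >= sizeof(Vertex));
    Vertex v;
    std::memcpy(&v, data + index * stride, sizeof(Vertex));
    return v.normal;
}
```

If the stride is larger than 24, the mesh carries more than position and normal (texture coordinates, for example), but the first 24 bytes of each vertex can still be read this way as long as the assumed order holds.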


Ok, another question for you dapper fellas: how do I copy a texture from one handle to another in OpenGL without copying the pixels manually? I want to create a faux motion blur effect by taking the previous frame and doing a simple fragment shader blend with the current one. I can get the current framebuffer using the framebuffer EXT and it's stored in an image handle, but that handle is linked to the FBO, so whenever the FBO is drawn to it's overwritten. I know I can detach the current image and attach a different one, but that doesn't seem like a particularly good way to go about it, though I could be wrong. Would using an alternating color attachment each frame do the job? Any help would be fabbo.


brian posted:Ok, another question for you dapper fellas: how do I copy a texture from one handle to another in OpenGL without copying the pixels manually? I want to create a faux motion blur effect by taking the previous frame and doing a simple fragment shader blend with the current one. I can get the current framebuffer using the framebuffer EXT and it's stored in an image handle, but that handle is linked to the FBO, so whenever the FBO is drawn to it's overwritten. I know I can detach the current image and attach a different one, but that doesn't seem like a particularly good way to go about it, though I could be wrong. Would using an alternating color attachment each frame do the job? Any help would be fabbo.

Using one framebuffer and alternating color attachments sounds very feasible to me. What I am not 100% sure about is whether you can read and write to the same texture inside the same shader call. If you could, that would mean you'd only need two textures to do the motion blur: the previous frame texture and the current framebuffer texture. For each framebuffer pixel you read its value plus the "motion" equivalent from the old buffer and mix them, then write the result back to the same buffer. Otherwise, you'd need a third texture as a buffer.

Mind you, you can also "fake" motion blur without saving the previous framebuffer. Just sample the current frame's framebuffer, in the same direction as you would normally, to get your "previous frame" value. I'm pretty sure it works OK and it saves memory to boot.


shodanjr_gr posted:Using one framebuffer and alternating color attachments sounds very feasible to me. What i am not 100% sure about is whether you can read and write to the same texture inside the same shader call.

Pretty sure a texture can't be bound and set as the destination simultaneously.
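Given that constraint, the alternating-attachment idea boils down to keeping track of which texture is written this frame and which one holds the previous frame. A minimal sketch of that bookkeeping, with no GL calls; `PingPong` is a hypothetical name, and indices 0 and 1 are assumed to stand for the two color attachments (for example `GL_COLOR_ATTACHMENT0_EXT` and `GL_COLOR_ATTACHMENT1_EXT`).

```cpp
#include <cassert>

// Tracks which of two color attachments is the render target this frame
// and which one still holds the previous frame.
struct PingPong {
    int writeIndex = 0;

    // The attachment that is safe to sample from this frame.
    int readIndex() const { return 1 - writeIndex; }

    // Call once per frame, after the frame has been rendered.
    void swap() { writeIndex = 1 - writeIndex; }
};
```

Each frame you would draw into the attachment at `writeIndex`, sample the texture at `readIndex()`, then call `swap()`, so no texture is ever read and written in the same pass.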


Well, I got that working like that, but now I'm having a buttload of trouble getting GLSL to blend it properly. The GLSL code is fine (tested in Shader Designer), but applying it correctly is hurting my head. At the moment I get it displaying without any noticeable blur, with the alpha channel not working and a load of flickering. Is there a way to blend the textures without using shaders? The whole glTexEnv stuff is hurting my already broken brain.


brian posted:Well, I got that working like that, but now I'm having a buttload of trouble getting GLSL to blend it properly. The GLSL code is fine (tested in Shader Designer), but applying it correctly is hurting my head. At the moment I get it displaying without any noticeable blur, with the alpha channel not working and a load of flickering. Is there a way to blend the textures without using shaders? The whole glTexEnv stuff is hurting my already broken brain.

Why are you using blending? Do something like this inside your blur shader:

gl_FragColor = vec4(texture2D(currentFrame, gl_TexCoord[0].st).rgb * 0.8 + texture2D(previousFrame, gl_TexCoord[0].st + motionVec * sampOffset).rgb * 0.2, 1.0);

And even if you use alpha blending, it's not hard to do. Call glBlendFunc(GL_SRC_ALPHA, GL_ONE_MINUS_SRC_ALPHA) and just regulate the alpha from inside the shader to get the wanted result.

If you are getting flickering, then you are (probably) doing some weird stuff with your framebuffer objects and/or your texture bindings and/or your clearing of color buffers. Can't tell you much more without looking at your code.

shodanjr_gr fucked around with this message at 00:55 on Oct 10, 2008


Here's the main gl stuff. The ShooterWorld::draw() function just draws a series of sprites from the world; here's the sprite drawing code. I tried it with your fragment jobby but it just produces a pure black window, I assume because I'm doing something wrong (I set the motionVec to (5,0) since that's the speed the camera and ship move, but that's probably wrong too). I don't quite understand why it needs a direction if everything is moving anyway, since the next frame will be further along the sprite than the second one, thus causing the same effect, right? Any help would be fabbo fella!


brian posted:Here's the main gl stuff. The ShooterWorld::draw() function just draws a series of sprites from the world; here's the sprite drawing code. I tried it with your fragment jobby but it just produces a pure black window, I assume because I'm doing something wrong (I set the motionVec to (5,0) since that's the speed the camera and ship move, but that's probably wrong too). I don't quite understand why it needs a direction if everything is moving anyway, since the next frame will be further along the sprite than the second one, thus causing the same effect, right?

I'll look into your code later, but for the moment, if you are getting a totally black picture there are a few things that may be going on:
a) Your previous and current frame textures are blank, or they are not bound/initialized properly.
b) You are clearing your before and after buffers before you actually do the blur pass (so the textures are blank when you try to read them).
c) You are using a wrong alpha value inside your blur shader (probably zero).
d) You have enabled backface culling during your post-processing blur pass (and your quad gets culled because it faces the wrong way).
e) You have enabled Z-culling and your quad gets culled (although this isn't too likely, since you are only rendering one primitive).
f) You are doing your transformations wrong. If you want to do a post-processing pass, just set your modelview and projection matrices to identity, throw up a quad from -1,-1 to 1,1 at some Z-value, and it should work.

I'll check your code later.
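Points d) and f) can be made concrete with the vertex data for such a quad. This is a sketch under the stated assumptions (identity modelview and projection, so positions are already in clip space); `QuadVertex`, `toTexCoord` and `fullScreenQuad` are hypothetical names. The corners are listed counter-clockwise, which is front-facing under GL's default winding, so the quad should survive backface culling.

```cpp
#include <array>
#include <cassert>

struct QuadVertex { float x, y, u, v; };

// Maps a clip-space coordinate in [-1, 1] to a texture coordinate in [0, 1].
float toTexCoord(float ndc) { return ndc * 0.5f + 0.5f; }

// Four corners of a quad covering the whole viewport, in counter-clockwise
// order starting at the bottom-left corner.
std::array<QuadVertex, 4> fullScreenQuad() {
    const float corners[4][2] = {
        {-1.0f, -1.0f}, {1.0f, -1.0f}, {1.0f, 1.0f}, {-1.0f, 1.0f}};
    std::array<QuadVertex, 4> quad{};
    for (int i = 0; i < 4; ++i) {
        quad[i] = {corners[i][0], corners[i][1],
                   toTexCoord(corners[i][0]), toTexCoord(corners[i][1])};
    }
    return quad;
}
```

Drawing these four vertices as a quad with the frame texture bound maps the texture one-to-one onto the viewport, which makes it easier to tell a broken shader from a misplaced quad.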


Well, it works (not correctly and really oddly) if I do a simple gl_FragColor = texColor1 + texColor2 type thing, but then again I have no idea what I'm doing wrong. I've rejigged it so many times just trying to get it to work that I've lost any clue about what I'm doing and the whole file is a mess. It was just meant to quickly test it before I clean it up, but it's gone awry!

There's an issue where if I have the FBO go to one color attachment, the other one gets turned to pure black despite nothing being done to it, which could be the cause, but that wouldn't explain why it works the aforementioned simple way. I'm so horribly confused. I don't usually do a lot of graphics programming beyond simplistic sprites and particles, so my understanding of the GL state system is spotty and I just tend to try things until they work instead of thinking about it correctly. That said, I can't seem to wrap my head around the processes involved with FBOs and shaders when it comes to textures, because of the whole removing the fixed function pipeline jobby.


brian posted:Well, it works (not correctly and really oddly) if I do a simple gl_FragColor = texColor1 + texColor2 type thing, but then again I have no idea what I'm doing wrong. I've rejigged it so many times just trying to get it to work that I've lost any clue about what I'm doing and the whole file is a mess. It was just meant to quickly test it before I clean it up, but it's gone awry! There's an issue where if I have the FBO go to one color attachment, the other one gets turned to pure black despite nothing being done to it, which could be the cause, but that wouldn't explain why it works the aforementioned simple way. I'm so horribly confused. I don't usually do a lot of graphics programming beyond simplistic sprites and particles, so my understanding of the GL state system is spotty and I just tend to try things until they work instead of thinking about it correctly. That said, I can't seem to wrap my head around the processes involved with FBOs and shaders when it comes to textures, because of the whole removing the fixed function pipeline jobby.

Post your shader code here if you can. Also, nothing has been removed so far from the fixed functionality as far as I know. I think some stuff became deprecated in OpenGL 3.0, but it's still there.

An FBO is something OGL can render to. It can have multiple color attachments (for multiple render target rendering), a depth attachment, and I think one more type (which eludes me now). If you want, you can generate a texture (as normal) and attach it to a color attachment point of an FBO. So for instance, you can generate an FBO, create a GL_DEPTH_COMPONENT texture and attach it to the GL_DEPTH_ATTACHMENT point (so it becomes the FBO's Z-buffer), and create a GL_RGBA texture and attach it to the GL_COLOR_ATTACHMENT0 point. Remember to call glDrawBuffers() or something like that to specify which color attachments are active for the current FBO.

Make SURE that both your textures have exactly the same height and width. OpenGL does NOT support different render target sizes for the same FBO; if you do it, your FBO won't initialize.

If you have set only one color attachment as the target for an FBO, use gl_FragColor inside your shader to save the result. Otherwise you will have to use gl_FragData[0/1/2/3...].

After that, when you want to write to the FBO, you can just "bind" it (glBindFramebufferEXT(GL_FRAMEBUFFER_EXT, yourNewFBO)) and use it as you would use your normal framebuffer, with the exception that instead of rendering to your screen, you render to a texture, which you can also use later on for whatever you need.

Another goon had posted an FBO tutorial a few pages back (it might also be in the Game Development Megathread... I'm not sure). Go look at it if you can, it will help you out.


Actually the FBO on its own works fine. If the shaders aren't enabled I can get a mini viewport of the same scene as the main viewport and it works great. When I do two color attachments and alternate which one is assigned by glDrawBuffer() each frame, at any one frame one will have the correct frame while the other will be blank (pure black), as if not assigning it means the texture info gets deleted.

Anyhoo, here's my crappy shader code: test.vert: code:code:code:

As for the fixed function pipeline, I was under the impression that by using any vertex or fragment shader it goes around the usual processes that GL performs (like having to transform vertices with ftransform() or modelview * vertex). Anyhoo, I wasn't really expecting the motion blur to work so much as for it just to be the correct image blended with a black image, but it doesn't work correctly at all.

edit: Pictures:
[image: shader on, both using gl_MultiTexCoord0]
[image: shader on, using gl_MultiTexCoord0 and gl_MultiTexCoord1]
[image: shader off, showing FBO working fine]

brian fucked around with this message at 00:16 on Oct 11, 2008


brian posted:Actually the FBO on its own works fine. If the shaders aren't enabled I can get a mini viewport of the same scene as the main viewport and it works great, when I do two color attachments and alternate frames on which they're assigned by glDrawBuffer()

quote:test.vert:

I think the way you throw your postprocessing quad is wrong. The coordinates should be code:

quote:As for the fixed function pipeline I was under the impression by using any vertex or fragment shader it goes around the usual processes that gl performs (like having to transform vertices with ftransform() or modelview * vertex).

Yes, that's true.


brian posted:As for the fixed function pipeline I was under the impression by using any vertex or fragment shader it goes around the usual processes that gl performs (like having to transform vertices with ftransform() or modelview * vertex).

Also, keep in mind that blending, stencil, depth, and alpha test are (for now) not handled by shaders.


brian posted:Ok, another question for you dapper fellas: how do I copy a texture from one handle to another in OpenGL without copying the pixels manually? I want to create a faux motion blur effect by taking the previous frame and doing a simple fragment shader blend with the current one. I can get the current framebuffer using the framebuffer EXT and it's stored in an image handle, but that handle is linked to the FBO, so whenever the FBO is drawn to it's overwritten. I know I can detach the current image and attach a different one, but that doesn't seem like a particularly good way to go about it, though I could be wrong. Would using an alternating color attachment each frame do the job? Any help would be fabbo.

Uh, you realise you can do that in about 4 lines using the accumulation buffer, right? (And it'll be faster than doing it manually, too.) Like this:

float q = 0.7f;
glAccum(GL_MULT, q);
glAccum(GL_ACCUM, 1.0f - q);
glAccum(GL_RETURN, 1.0f);

Where q is the amount to blur, naturally.
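To see what those three calls compute, here is a CPU-side model of them on a single-channel image. It is a sketch for reasoning about the math only, not a replacement for the GL calls; `AccumBuffer` and `blend` are hypothetical names, and the buffer is assumed to start out cleared to zero.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// CPU model of the accumulation buffer for one colour channel.
struct AccumBuffer {
    std::vector<float> accum;

    explicit AccumBuffer(std::size_t pixelCount) : accum(pixelCount, 0.0f) {}

    // Applies the three steps to every pixel and overwrites `frame` with
    // the blurred result, as GL_RETURN overwrites the colour buffer.
    void blend(std::vector<float>& frame, float q) {
        assert(frame.size() == accum.size());
        for (std::size_t i = 0; i < frame.size(); ++i) {
            accum[i] *= q;                      // glAccum(GL_MULT, q)
            accum[i] += frame[i] * (1.0f - q);  // glAccum(GL_ACCUM, 1 - q)
            frame[i] = accum[i];                // glAccum(GL_RETURN, 1)
        }
    }
};
```

Each displayed frame ends up as `q * previous_result + (1 - q) * current_frame`, an exponentially decaying trail; a larger q keeps more of the old frames and so blurs more.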


Well, the quad positioning is fine; it's still using the gluOrtho2D I set up earlier. If it wasn't positioned right it wouldn't show at all, and the FBO texture on its own displays fine, but for some reason the shader stuff messes up the alpha and whatnot, as seen in the pictures above. If it's not too much trouble, can one of you fellas look through the source? I'm using pretty much copy-and-pasted shader setup stuff from examples. Note that the same behaviour happens without the glTexEnv functions being called unless you change the mode from GL_BLEND, in which case it goes back to just being a blank screen. http://pastebin.com/f70e2d9a9

edit: I'll totally give the accumulation stuff a try, thanks!

brian fucked around with this message at 01:58 on Oct 11, 2008


The accum stuff is pretty nifty. brian, if you need more info, here's a pretty good read on it: http://www.cse.msu.edu/~cse872/tutorial5.html


Don't most consumer graphics cards do accumulation buffers in software, punching your framerate in the face?


Is there something I need to set to turn the accumulation buffer on? Even when doing the tutorial with its sample project and doing the exact thing it says, I get absolutely no visible change, yet it works on another example using GLUT. I've just spent the last 2 hours searching through GLUT's source code, which is about the most circularly frustrating thing ever. Is it graphics card dependent or something?

Also, in other news, the whole one being blank when the other one was drawn to was entirely down to me being an idiot and putting the clear color buffer bit in the wrong place, so if I can get this whole accumulation razzmatazz working it should be fine. Ideally I'd like to do it with shaders if accumulation buffers are going to be an issue, but for now I'd just like to get it working.

edit: Apparently it's to do with Windows window creation, so I'm looking into that.

edit2: Got the accumulation buffer working! Now I just need to get the blur working correctly. Thanks fellas for all your help!

edit3: Totally works great. The only problem is that the main ship doesn't really move around the screen much, so it looks oddly clear with everything else being blurred. Maybe I can fake it somehow with a different texture or something.

brian fucked around with this message at 23:17 on Oct 11, 2008


OneEightHundred posted:Don't most consumer graphics cards do accumulation buffers in software, punching your framerate in the face?

Hrm, reading up, it seems that support for it is indeed a little iffy. Maybe using FBOs would be a better idea overall... it's a neat trick when you need a quick blur though.


I honestly don't know if this question should go here, its own thread, or some other thread that I have missed. It is a setup question.

I use vs2008, and I just installed the DirectX SDK (August 2008). This set the environment variable DXSDK_DIR. I had to manually point Visual Studio to the include and lib directories (tools > options > projects and solutions > vc++ directories). I would have thought the SDK installer would have done that itself, but oh well.

So, now I just wanted to check out some of the samples (as well as verify that the SDK did, in fact, install correctly). So, I install a couple of test projects (specifically, Direct3D10WorkshopGDC2007 and PIXGameDebugging were my quick tests), build the _2008 solutions, and am just greeted with unresolved externals. Not the most unresolveds I have ever seen, but at least in those cases I knew the system well enough to figure out what was wrong. Here I am a little stumped, simply because I was expecting an installed project to work straight off the bat, and because I do have the lib and includes pointed to their proper spots.

I have spent time trying to google this, but damned if I can find just basic, complete install directions - preferably from Microsoft themselves (and watch me have missed it in a completely obvious location). The only cases I found were people asking for help as well, although their solution (adding the pointers to the lib and include directories) has obviously not worked for me.

So, my question is simply: what am I missing? Please tell me I have just missed something completely minor so that I can get along. Below is a sampling of the errors, if it helps. code:


Luminous posted:So, my question is simply: what am I missing? Please tell me I have just missed something completely minor so that I can get along. Below is a sampling of the errors, if it helps.

Right-click the project, select Properties, go to the Linker subsection, select Input, and then check the Additional Dependencies line. You need d3dx10d.lib for the debug build and d3dx10.lib for the release build.


OneEightHundred posted:Did you include the D3DX libs in your project?

Yes. These are just the samples, which already have the necessary libs included in the properties. But yes, I had double checked and they are listed.


Due to finally being able to compile OpenGL 0.57 in CPAN, I now have access to a workable example of vertex arrays in Perl. If I'm reading this correctly, it means that it can draw a single triangle in exactly one call, as opposed to the 6 needed in immediate mode. This would mean a massive speed-up, since Perl has a horrible penalty on sub calls. However, I'll still need to use display lists, since 180 calls per frame is a lot nicer than 46000. What are good practices when combining display lists and vertex arrays, and what should I pay attention to?


Mithaldu posted:Due to finally being able to compile OpenGL 0.57 in CPAN, i have now access to a workable example of vertex arrays in Perl. If i look at this correctly, it means that it can draw a single triangle in exactly one call, as opposed to the 6 needed in immediate mode. This would mean a massive speed-up since Perl has a horrible penalty on sub calls.

There are no real concerns with combining them, as vertex arrays are very commonly used and self-contained. Just don't go overwriting buffers the driver is still using and you should be ok.

Also, I'm still confused as to why you're issuing only one triangle at a time -- are you really completely changing state every draw?
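The point about one triangle at a time can be illustrated by packing many triangles into a single array, so that one draw call covers all of them. The sketch below is in C++ rather than Perl and only builds the data; `Vec3`, `Triangle`, `packVertices` and `vertexCount` are hypothetical names, and the layout assumed is tightly packed x,y,z floats, as `glVertexPointer(3, GL_FLOAT, 0, ptr)` would expect.

```cpp
#include <cassert>
#include <vector>

struct Vec3 { float x, y, z; };
struct Triangle { Vec3 a, b, c; };

// Packs all triangles into one flat x,y,z array (9 floats per triangle).
std::vector<float> packVertices(const std::vector<Triangle>& tris) {
    std::vector<float> out;
    out.reserve(tris.size() * 9);
    for (const Triangle& t : tris) {
        const Vec3 corners[3] = {t.a, t.b, t.c};
        for (const Vec3& v : corners) {
            out.push_back(v.x);
            out.push_back(v.y);
            out.push_back(v.z);
        }
    }
    return out;
}

// Number of vertices in the packed array, i.e. the count one would pass
// to glDrawArrays(GL_TRIANGLES, 0, count).
int vertexCount(const std::vector<float>& packed) {
    return static_cast<int>(packed.size() / 3);
}
```

As long as the triangles share the same state (texture, material and so on), a whole block can go out in one call this way, which matters most in a language where each call is expensive.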


Hubis posted:there are no real concerns with combining them, as vertex arrays are very commonly used and self-contained.

Luckily that shouldn't be too much of a problem, as it only forces me to do some refactoring that I should've done a while ago already. (Construct display lists for all the basic elements and then make the big ones by calling the basic lists, instead of constructing the big lists by drawing them all in immediate mode.)

Hubis posted:Just don't go overwriting buffers the driver is still using and you should be ok.

Hubis posted:Also, I'm still confused as to why you're issuing only one triangle at a time -- are you really completely changing state every draw?

code:code:


I'm rendering a bunch of different line segments in a straight line on the iPhone using glDrawArrays and GL_LINES on a 2D projection. They look fine, but when I scale the screen using glScalef, some of the lines (on the same y axis or x axis, depending on the scale level) seem to move up or down one pixel. It seems completely dependent on the scale level (the ones moving change as I zoom in and out).

I've tried enabling smooth lines; however, the version of OpenGL ES on the iPhone simply ignores it. The next thing I tried was drawing my lines using GL_TRIANGLE_STRIP. This was slow as hell, and at certain scale levels some groups wouldn't draw at all.

So here are my questions. Why do the lines shift up or down slightly as I scale? Should I be scaling the screen (to zoom) using glScalef? This is my first time using OpenGL, so please be kind.
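One possible explanation, offered as an assumption since nobody in the thread answers this: after scaling, a line's coordinate can land on or near the boundary between two pixel rows, and small floating-point differences then push neighbouring segments to different sides. A common mitigation is to place line coordinates so that they land on pixel centres after scaling. The sketch below assumes an orthographic projection where one unit equals one pixel at scale 1; `snapToPixelCentre` is a hypothetical helper.

```cpp
#include <cassert>
#include <cmath>

// Returns a model-space coordinate whose scaled position falls on the
// centre of a pixel (k + 0.5) rather than at an arbitrary fraction.
// `scale` is assumed to be the factor passed to glScalef.
float snapToPixelCentre(float coord, float scale) {
    assert(scale > 0.0f);
    return (std::floor(coord * scale) + 0.5f) / scale;
}
```

Snapping both endpoints of every segment that is meant to share a row (or column) with the same function should keep them in the same pixel row at any zoom level, at the cost of positions shifting by up to half a pixel.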


I've got a small question. Suppose I want to render slices in front of my view volume for something (a volumetric effect, for instance) and save them to texture. The obvious way to do this would be to set (Zfar - Znear) to something small, then keep pushing the near clipping plane forward, capturing more and more of my volume as I go (and saving to a different texture each time). However, this seems really slow, especially considering that I'd have to reissue my geometry a number of times equal to the number of slices. Is there some way to make this faster? I remember reading something about an instancing extension, but googling seems to indicate that this is about instancing as in rendering multiple pieces of geometry using the same vertex data, etc. Any ideas?


Got a question about z-fighting issues. I'm rendering layers of roughly 80*80 objects, with roughly 20-40 layers stacked atop each other at any time. There is no computationally inexpensive way to cut out invisible geometry that I am aware of, so I pretty much have to render everything at all times. This leads to the z-buffer getting filled up pretty fast, resulting in rather ugly occasions of polys clipping through other polys. I've already restricted the near and far clipping planes as much as possible so the geometry has the full z-buffer to play with.

Would it help the clipping issues to render the geometry (when looking at it from the top) by cycling through the z-layers bottom-up, drawing everything in them and calling glClear( GL_DEPTH_BUFFER_BIT ); in between every z-layer? If so, would it also help to do a full reset of the projection like this, or would that be unnecessary extra work? code:


Mithaldu posted:Got a question about z-fighting issues. I'm rendering layers of roughly 80*80 objects, with roughly 20-40 layers stacked atop each other at any time. There is no computationally non-expensive way to cut out invisible geometry that i am aware of, so i pretty much have to render everything at all times. This leads to the z-buffer getting filled up pretty fast, resulting in rather ugly occasions of polys clipping through other polys.

You could try disabling Z culling altogether, as it seems like it's very easy for you to render in nearest-to-furthest order. To save shading work, you can use the stencil functionality to write the current layer number to the stencil buffer, and to only output a given pixel if nothing has been written to it already (i.e. == 0). Rendering top to bottom in this manner and using the stencil buffer to avoid overdraw should work.

That being said, I'm kind of surprised you're having trouble with Z precision like that. Your z-clear trick would probably work, though I'd recommend rendering from the top down and using the stencil technique mentioned above as well if you go that route.
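For a feel of where z-buffer precision goes, the sketch below computes the window-space depth produced by a standard perspective projection (as set up by glFrustum or gluPerspective) and the integer a fixed-point depth buffer would store for it. It assumes the default depth range of [0, 1] and a 16-bit buffer in the example; `windowDepth` and `quantize` are hypothetical helper names, and real hardware may round differently.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Window-space depth in [0, 1] for a point at eye distance d, given the
// near and far plane distances of a standard perspective projection.
double windowDepth(double nearPlane, double farPlane, double d) {
    assert(nearPlane > 0.0 && farPlane > nearPlane);
    assert(d >= nearPlane && d <= farPlane);
    return farPlane * (d - nearPlane) / (d * (farPlane - nearPlane));
}

// Value a fixed-point depth buffer with `bits` bits would hold
// (rounding to nearest is an assumption).
std::uint32_t quantize(double depth, int bits) {
    assert(bits > 0 && bits <= 32);
    const double maxValue = std::ldexp(1.0, bits) - 1.0;  // 2^bits - 1
    return static_cast<std::uint32_t>(std::llround(depth * maxValue));
}
```

With near = 1 and far = 1000, a point just 2 units away already sits past the middle of the depth range, so everything from 2 to 1000 shares the remaining half. In a 16-bit buffer under these assumptions, surfaces at distances 900 and 900.5 quantize to the same stored value, which is exactly the situation where z-fighting shows up. Pushing the near plane out helps far more than pulling the far plane in.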


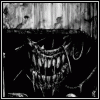

Disabling the depth buffer is not an option, as the z-culling needs to be kept functional for each z-layer. Sounds like a pretty neat approach though. (Although I'm confused by what you mean with shading work.)

To make things a bit more visual, some screenshots. The first one shows a normal view. You are looking at 5x5 blocks of 16x16 units each. Each unit can be a number of terrain features, as well as a building (red) or a number of items (blue). Currently I'm rendering all items, even if that means they overlap each other, as sorting out already rendered ones causes a performance impact on the CPU side that slows poo poo down. As such, there's probably 3000-6000 items rendered there (visible and underground), all in all coming together to a fuckton of polys.

The landscape blocks are rendered as one display list each, with the coordinates for all vertices pre-calculated. The buildings and items are rendered as individual 0,0,0-centered display lists that get moved into place by being wrapped in glTranslate commands. Additionally, each block has not only the top faces of each landscape unit rendered, but also the north/south/west/east faces of the edge units, since leaving them out would leave holes in the geometry.

These are the first problem factor that I noticed: when zooming in, they begin to clip through the top faces. Additionally, the red building blocks are rarely a problem, but they manage, again when zoomed in, to clip through the top faces *entirely*. Lastly, when just doing screenshots, I noticed that sometimes the stones on the ground that are part of the landscape display lists clip through the blue items.

The dimensions are such that each landscape unit is exactly 1x1x1 units big, speaking in floating-point vertex coordinates. (Would it maybe help if I increased that?)

Currently the clipping planes are set by defining a bounding box around all visible content (since it's a neat rectangle), selecting the two points that are furthest from and closest to the screen plane when projected along the camera vector, and using their distances as parameters. I am using gluLookAt, with the target being the turquoise wireframe. If the camera is moved towards the target or away from it, the clipping planes get adjusted to match the distances. If the near plane falls under 1.0, I adjust it to stay at 1.0.

And that's where my problem comes in. Looking at it all from afar is good and works, but when I zoom in by moving the camera closer, this happens:

Note how the rocks in the bottom left corner clip in front of the blue things, which should be straight above them. Also note the side faces at the block edge clipping through the top faces in the line in the bottom-right quarter, as well as in the top middle on the dark ground.

I mitigated it a bit by switching from zooming by moving the camera to zooming by changing the FOV, but even that doesn't fix it completely (and things tend to jerk and jump around a lot when moving the camera):
