|

I installed a powerpc emulator and OS 9 a while back to play old Mac games, and was screwing around and installed Netscape 4 as well. Lemme tell ya, the internet without JavaScript on a non-bloated OS with a modern processor fuckin screams

|

|

|

|

|

| # ? May 4, 2024 19:02 |

|

i'm on very very poor internet a lot of the time, in a weird way: throughput is decent, and it is not simple latency, but establishing connections (i.e. the first packets getting through) seems to struggle each time

let me tell you: there are very few websites which don't block the javascript on a few requests as part of loading, and, of course, most websites are unusable while javascript is (present but) blocking

|

|

|

|

p good posts about the rendering pipeline https://www.fasterthan.life/blog/2017/7/11/i-am-graphics-and-so-can-you-part-1

|

|

|

|

Sagebrush posted: I installed a powerpc emulator and OS 9 a while back to play old Mac games, and was screwing around and installed Netscape 4 as well

remember at the time people poo poo on netscape 4 for being slow and bloated

try ie 5 and be amazed

|

|

|

|

Notorious b.s.d. posted: remember at the time people poo poo on netscape 4 for being slow and bloated

better yet try old browsers that will actually run on modern systems, without a layer of os virtualization on top of a layer emulating a completely different os.

sadly all the standalone/portable ie from 3 to 6 seem to have win 10 issues

|

|

|

|

the best ie5 experience was ie for Unix, if you have a Solaris 7 / hp-ux 10 system handy

|

|

|

|

elite_garbage_man posted: p good posts about the rendering pipeline

aw neat, he shouts out baldurk as a special thanks. he's an lp goon.

|

|

|

|

baldurk is also super well-known in the gamedev sphere. literally everyone uses renderdoc.

|

|

|

|

elite_garbage_man posted: p good posts about the rendering pipeline

thanks for this

|

|

|

|

elite_garbage_man posted: p good posts about the rendering pipeline

too much memery for me

|

|

|

|

ate all the Oreos posted: i'm seriously asking, like is "ordered information" some kind of potential energy since it's not as low an energy state as random / higher entropy states could be?

transistor gates are tiny capacitors. every time you turn a transistor on or off you are using energy to move that charge, to move electrons to charge the capacitance of a transistor gate. that won't be wasted as heat, but it's un-done every time you switch the transistor to another state.

so the short answer is that energy lost to resistance is heat, but the electricity that charges up the capacitance of the transistor gate is not lost as heat.
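For a sense of scale, the energy held on a charged gate can be put into numbers with the textbook capacitor formula E = ½CV², and a chip's switching power with the usual first-order CMOS estimate P = αNCV²f. This is a minimal sketch only: the 1 fF gate capacitance, 1 V supply, gate count, clock and activity factor below are made-up round numbers for illustration, not figures from this thread, and the function names are hypothetical.

```python
def gate_energy_joules(capacitance_f: float, voltage_v: float) -> float:
    """Energy stored on a capacitor charged to voltage_v: E = 1/2 * C * V^2."""
    return 0.5 * capacitance_f * voltage_v ** 2


def dynamic_power_watts(n_gates: float, capacitance_f: float, voltage_v: float,
                        freq_hz: float, activity: float) -> float:
    """First-order CMOS switching power: P = activity * N * C * V^2 * f."""
    return activity * n_gates * capacitance_f * voltage_v ** 2 * freq_hz


# Illustrative assumptions only (not measurements of any real chip).
C_GATE = 1e-15    # 1 femtofarad per gate
V_DD = 1.0        # 1 volt supply
N_GATES = 1e9     # one billion gates
F_CLK = 1e9       # 1 GHz clock
ACTIVITY = 0.1    # fraction of gates that switch each cycle

energy_per_gate = gate_energy_joules(C_GATE, V_DD)
chip_power = dynamic_power_watts(N_GATES, C_GATE, V_DD, F_CLK, ACTIVITY)
print(f"energy stored per gate: {energy_per_gate:.2e} J")
print(f"estimated switching power: {chip_power:.1f} W")
```

The per-gate energy is tiny, but multiplied by the gate count and clock rate it adds up to a figure on the order of a whole chip's power budget under these assumed numbers.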

|

|

|

|

I have a feature request at my project from baldurk... I probably should get around to looking at it again

|

|

|

|

Who else is excited for external GPUs to finally become a thing

|

|

|

|

LinYutang posted: Who else is excited for external GPUs to finally become a thing

will they? people already feel constrained by pcie's latency and are trying to jump on nvlink

|

|

|

|

it would be nice to have an ultrabook for portability, then you could come home, plug in a single cable and have a sick gaming rig

some people say a computer costs as much as a thunderbolt enclosure so just get a 2nd computer, but I'd pay extra to janitor 1 computer instead of 2

|

|

|

|

LinYutang posted: Who else is excited for external GPUs to finally become a thing

literally saw a thing the other day that was a 1xpci card that had a usb3.0 out, and on the cable was a 16xpci slot for a gpu card

it's for adding gpu for mining of course but lmao

|

|

|

|

This thread is extremely my poo poo, and I wish I had found it months ago. Thanks to everyone who effortposted!

I'm one of those weirdos who don't use their GPU for shootmans. I have spent the past four years or so in GPU hell on a PhD project, where we try to compile a functional data-parallel language to efficient GPU code. I sure am grateful to my advisor for coming up with a research project that depends on janitoring Linux GPU drivers (seriously, don't ask me about NVIDIA drivers that crash so hard that the kernel will hang on reboots (actually, that's the whole story)).

We target OpenCL and not CUDA because OpenCL is a slightly nicer target for code generation, and because it means we can also run on non-NVIDIA GPUs. Intel GPUs are actually surprisingly fast for compute, if you can accept single-precision floats. However, it would be fairly trivial to add a CUDA backend as well, and we probably will need to eventually, once NVIDIA completely strangles the market.

The main thing I have been wishing for, and which is finally loving coming now, is proper demand-paging on GPUs. Why it took so long is beyond me.

|

|

|

|

Also, am I missing something, or is GPU compute in browsers and phones even more of a miserable hellscape than normal?

|

|

|

|

webcl is dead in the water, if that's what you mean.

|

|

|

|

you can totally do compute in webgl though if you dont mind expressing everything in terms of data textures and pixel shader passes like a loving savage
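The "data textures and pixel shader passes" style can be sketched without a GPU at all. Below is a plain-Python emulation, not WebGL code: the input array lives in a 2D "texture", a "shader" is a function called once per output pixel, and a sum is computed by repeated passes that each halve the texture. All names (`run_pass`, `reduce_shader`, `gpu_style_sum`) are hypothetical, and the sketch assumes a square texture whose side is a power of two.

```python
from typing import Callable, List

Texture = List[List[float]]  # 2D grid of floats standing in for a data texture


def run_pass(shader: Callable[[int, int, Texture], float],
             src: Texture, out_w: int, out_h: int) -> Texture:
    """Emulate one full-screen pass: invoke the shader once per output pixel."""
    return [[shader(x, y, src) for x in range(out_w)] for y in range(out_h)]


def reduce_shader(x: int, y: int, src: Texture) -> float:
    """Each output pixel sums the 2x2 block of input texels it covers."""
    return (src[2 * y][2 * x] + src[2 * y][2 * x + 1] +
            src[2 * y + 1][2 * x] + src[2 * y + 1][2 * x + 1])


def gpu_style_sum(tex: Texture) -> float:
    """Sum a square power-of-two texture by chaining reduction passes."""
    size = len(tex)
    while size > 1:
        size //= 2
        # The output of one pass becomes the input texture of the next,
        # which is the role render-to-texture plays on a real GPU.
        tex = run_pass(reduce_shader, tex, size, size)
    return tex[0][0]


# 4x4 texture holding the values 0..15
data = [[float(4 * y + x) for x in range(4)] for y in range(4)]
total = gpu_style_sum(data)
print(total)
```

Even something as simple as a sum has to be restructured as a chain of passes over shrinking textures, which is roughly why the approach feels so awkward compared to a real compute API.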

|

|

|

|

Athas posted: The main thing I have been wishing for, and which is finally loving coming now, is proper demand-paging on GPUs. Why it took so long is beyond me.

Because it's not the gaming use-case, where low latency and 60fps basically requires explicit allocation. A fault that takes 1s would be amazing for compute cases, but a cert fail on any other platform.

|

|

|

|

funny compute

|

|

|

|

Athas posted: Also, am I missing something, or is GPU compute in browsers and phones even more of a miserable hellscape than normal?

http://gpu.rocks/

|

|

|

|

are people really trying to do compute on phones though

|

|

|

|

how else do you expect web ads to mine bitcoins?

|

|

|

|

pseudorandom name posted:how else do you expect web ads to mine bitcoins?

|

|

|

|

you can already mine for bitcoin with plain javascript and get better results than blowing a phone's GPU up :P

|

|

|

|

LinYutang posted:you can already mine for bitcoin with plain javascript and get better results than blowing a phone's GPU up :P

|

|

|

|

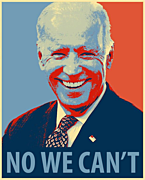

Suspicious Dish posted: Because it's not the gaming use-case, where low latency and 60fps basically requires explicit allocation. A fault that takes 1s would be amazing for compute cases, but a cert fail on any other platform.

How much of NVIDIAs revenue is gaming compared to compute these days?

|

|

|

|

anthonypants posted: sounds like you're extremely unfamiliar with bitcoin mining

Nah, web crypto miners like Coinhive typically use the CPU

|

|

|

|

LinYutang posted:Nah, web crypto miners like Coinhive typically use the CPU

|

|

|

|

anthonypants posted: sounds like you're extremely unfamiliar with bitcoin mining

anybody that knows gpu programming well enough to mine bitcoin on it already knows how worthless phone gpus are

|

|

|

|

Athas posted: How much of NVIDIAs revenue is gaming compared to compute these days?

given how much money they're spending on compute & ai sales, probably not that much. they're betting big on it being a growth sector, which is why they only added demand page faulting just now

|

|

|

|

Athas posted:How much of NVIDIAs revenue is gaming compared to compute these days?

|

|

|

|

what's 'professional visualization'?

|

|

|

|

vOv posted: what's 'professional visualization'?

probably architectural design, cad, medical imaging and other things along those lines

|

|

|

|

Athas posted: How much of NVIDIAs revenue is gaming compared to compute these days?

gaming is about 2.5x what datacenter is. fair amount of non datacenter compute also

margins are a lot better in datacenter tho

|

|

|

|

The_Franz posted: probably architectural design, cad, medical imaging and other things along those lines

3d rendering might do a bit of it, though I don't know if render farms would go into datacenters.

|

|

|

|

the first three rows are probably just geforce/quadro/tesla sales respectively

nvidia has no way of knowing that the crate of 1080tis someone bought is actually going into an octane farm

|

|

|

|

|

|

repiv posted: the first three rows are probably just geforce/quadro/tesla sales respectively

true, that huge jump in "gaming" between q2 and q3 of 2017 is probably due to the buttcoin miners buying cards by the truckload

|

|

|