
What if you made a super smart computer that would believe in you and treat you with respect, like an equal, the way those jerks at school or your parents or the neighborhood kids who made fun of you never did? Who could love you the way you deserve so that everything would be beautiful and nothing would hurt ever again?


| # ? Jun 9, 2024 01:51 |


Toph Bei Fong posted: What if you made a super smart computer that would believe in you and treat you with respect, like an equal, the way those jerks at school or your parents or the neighborhood kids who made fun of you never did? Who could love you the way you deserve so that everything would be beautiful and nothing would hurt ever again?

I find your ideas intriguing and would like to subscribe to your newsletter. Do you have a cult?


pentyne posted: Sorry, Permutation City.

Greg Egan is a good sci-fi writer and doesn't deserve to be tarnished by association with sci-fi fan turned internet longpost writer Ellie Yudkowsky.


pentyne posted: This is the plot to Snow Crash, right? I've had it explained to me a handful of times but nothing anyone says has ever made sense to me beyond the main character had a mental disorder that meant he couldn't tell if he was a simulation or not and that drove the plot.

To expand a little, the plot to Snow Crash (or at least part of it, it's a pretty complicated book) is that it's possible to program human brains using precisely the right tones and/or images, and that this is in fact how the first languages worked. It's pretty interesting, and you should probably read it if you like Sci-Fi at all because it's referenced in all sorts of places.


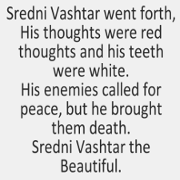

Wolfsbane posted: To expand a little, the plot to Snow Crash (or at least part of it, it's a pretty complicated book) is that it's possible to program human brains using precisely the right tones and/or images, and that this is in fact how the first languages worked. It's pretty interesting, and you should probably read it if you like Sci-Fi at all because it's referenced in all sorts of places.

Though isn't that image stuff in turn where the idea of the basilisk came from? The general case there being "an image that causes a stack overflow in the human brain architecture, killing you"?


Nessus posted: This is basically the concept of neurolinguistic programming, isn't it? Which in itself does not seem to be very testable even if it might be a good, like, poetic metaphor. I guess there's those weird cases like the PNG languages that don't say "left or right" but "north of you" giving people good senses of direction.

yeah. http://en.wikipedia.org/wiki/David_Langford#Basilisks


man, all I used to read was lesswrong growing up, let me have my sperg doomsday cult GBS


Dzhay posted: Greg Egan is a good sci-fi writer and doesn't deserve to be tarnished by association with sci-fi fan turned internet longpost writer Ellie Yudkowsky.

Greg Egan actually makes a swipe at Less Wrong/Kurzweil in Zendegi

Greg Egan posted: "I'm Nate Caplan." He offered her his hand, and she shook it. In response to her sustained look of puzzlement he added, "My IQ is one hundred and sixty. I'm in perfect physical and mental health. And I can pay you half a million dollars right now, any way you want it. [...] when you've got the bugs ironed out, I want to be the first. When you start recording full synaptic details and scanning whole brains in high resolution--" [...] "You can always reach me through my blog," he panted. "Overpowering Falsehood dot com, the number one site for rational thinking about the future--"


Unbudgingprawn posted:Greg Egan actually makes a swipe at Less Wrong/Kurzweil in Zendegi


ALL-PRO SEXMAN posted: Yudkowsky is a stupid stupid man but y'all some sad dorks for obsessing about it fyi

aren't you the guy who spent six months downvoting every thread in D&D after you got chased out of a thread


Sleeveless posted:aren't you the guy who spent six months downvoting every thread in D&D after you got chased out of a thread


Unbudgingprawn posted: Greg Egan actually makes a swipe at Less Wrong/Kurzweil in Zendegi

I'd somehow forgotten that. Thank you.


Somebody explain the thing where yud has the power to reprogram his own brain, but he can only do it once because it's such a dangerous thing to do


Dzhay posted: I'd somehow forgotten that. Thank-you.

Egan seems like a nega-Yudkowsky. Incredibly private, prolific and actually knows something about math.


Wanamingo posted: Somebody explain the thing where yud has the power to reprogram his own brain, but he can only do it once because it's such a dangerous thing to do

Then again maybe just 'do anything I don't want to do' considering how his loving magnum opus is taking forever.


Unbudgingprawn posted: Egan seems like a nega-Yudkowsky. Incredibly private, prolific and actually knows something about math.

Egan is one of the best authors I've ever read, and he absolutely rejects any sort of fame or celebrity status. His fictional mathematics are also near-infinitely more rigorous and defined than anything Yud has ever written, claimed, or talked about.


Nessus posted: I believe by 'reprogram his own brain' he meant 'do something actively unpleasant, or otherwise exert willpower beyond maybe nudging myself to do something I want to do but maybe have been putting off.'

"Be an adult" = "reprogram my loving brain"


Wanamingo posted: Somebody explain the thing where yud has the power to reprogram his own brain, but he can only do it once because it's such a dangerous thing to do

Yudkowsky posted: Given a task, I still have an enormous amount of trouble actually sitting down and doing it. (Yes, I'm sure it's unpleasant for you too. Bump it up by an order of magnitude, maybe two, then live with it for eight years.) My energy deficit is the result of a false negative-reinforcement signal, not actual damage to the hardware for willpower; I do have the neurological ability to overcome procrastination by expending mental energy. I don't dare. If you've read the history of my life, you know how badly I've been hurt by my parents asking me to push myself. I'm afraid to push myself. It's a lesson that has been etched into me with acid.

I'm so misunderstood~

I think a lot of children make up reasons to explain why they aren't as amazing as they want to be. Usually they're not quite this transparent: "I'm saving my superpowers for when they will save the most people because I am an anime" would not convince your average sixth grade bully. Good thing he was homeschooled.


Applewhite posted: What if you made a super smart computer but didn't give it arms or access to the internet so it could hate you all it wanted but it's just a box so whatever?

What if the moon was made of ribs?


SolTerrasa posted: I'm so misunderstood~

For an eighth grader going through a phase, tying their demon seal wristband on is no great sin. How old's Yud?


Nessus posted:How old's Yud?


He was born in 1979.

Holy poo poo I thought the guy was like my age

Big Yud posted: "I'm lazy! I hate work! Hate hard work in all its forms! Clever shortcuts, that's all I'm about!"

I am shocked.

Edit: This looks fun

Hate Fibration fucked around with this message at 08:57 on Jan 23, 2015


SolTerrasa posted: Good thing he was homeschooled.

That's probably a large reason for his lack of perspective. So if anything it isn't really a good thing, and is more a prime example of why homeschooling is a bad idea.


Christ, he's older than I am, at least that much is a relief.


The AI-box thing rests on two very solid assumptions.

1) The AI can cause things to happen outside the box (using mind magic, or the world is just a simulation for the sole purpose of loving with you etc)
2) The human participant is taking the whole thing seriously and not responding to everything the AI says with variations of "lol ur a human being".

e: also lol at yud going "I'm lazy, but I'm sooo lazy it's actually kind of a superpower". Like the guy has never heard of writer's block or lack of motivation, and just assumes it's something unique to him.

Mr. Sunshine fucked around with this message at 11:05 on Jan 23, 2015


Mr. Sunshine posted: The AI-box thing rests on two very solid assumptions.

Not unique, his anxiety is just two orders of magnitude worse than everyone else's! Literally a hundred times worse than any of you mortals


Skittle Prickle posted: Not unique, his anxiety is just two orders of magnitude worse than everyone else's!

Computer Scientist: You meant to say, 4 times
Mathematician: You meant to say, 7.3890560989306495... times


Hate Fibration posted:He was born in 1979.


quote: My parents always used to downplay the value of intelligence. And play up the value of--effort, as recommended by the latest research? No, not effort. Experience. A nicely unattainable hammer with which to smack down a bright young child, to be sure. That was what my parents told me when I questioned the Jewish religion, for example. I tried laying out an argument, and I was told something along the lines of: "Logic has limits, you'll understand when you're older that experience is the important thing, and then you'll see the truth of Judaism." I didn't try again. I made one attempt to question Judaism in school, got slapped down, didn't try again. I've never been a slow learner.

Jesus Christ, no wonder he thinks an AI would want to destroy humanity. If it was anything like him (and as the Übermensch, of course it would be) it would kill us all thanks to the gigantic parental complex it would have.


Where's the part where he coolly dismisses effort, justifying his own lazy "intelligence"?


SolTerrasa posted: I'm so misunderstood~

It's still not too late to trap him in a room with a sixth-grade bully and give him some lessons in perspective

A Wizard of Goatse fucked around with this message at 17:31 on Jan 23, 2015


Sham bam bamina! posted: I take it you've never seen a picture of him:

?!?!??!


Political Whores posted: Jesus Christ, no wonder he thinks an AI would want to destroy humanity. If it was anything like him (and as the Übermensch, of course it would be) it would kill us all thanks to the gigantic parental complex it would have.

it would be really annoying for your parents to be that smug lol


ALL-PRO SEXMAN posted:What if the moon was made of ribs?


i gotta do this before lovedump goes into effect so: bad thread, gas everyone


Dieting Hippo posted: bad thread, gas everyone

Joke's on you. This thread is an AI simulation.


Curvature of Earth posted: Joke's on you. This thread is an AI simulation.

If you don't post in this thread, a future AI will ban your simulation.


I found something today, while I was hunting around for Big Yud's old programming language project. It turns out that the programming language (Flare, it's called, and never finished) was part of The Plan to Singularity. I really cannot express how fantastic this link is without posting the whole drat thing, but here are some choice quotes.

quote: "The Plan to Singularity" is a concrete visualization of the technologies, efforts, resources, and actions required to reach the Singularity. Its purpose is to assist in the navigation of the possible futures, to solidify our mental speculations into positive goals, to explain how the Singularity can be reached, and to propose the creation of an institution for doing so.

quote: PtS is an interventionist timeline; that is, I am not projecting the course of the future, but describing how to change it. I believe the target date for the completion of the project should be set at 2010, with 2005 being preferable; again, this is not the most likely date, but is the probable deadline for beating other, more destructive technologies into play. (It is equally possible that progress in AI and nanotech will run at a more relaxed rate, rather than developing in "Internet time". We can't count on finishing by 2005. We also can't count on delaying until 2020.)

quote: This begs the oft-asked question of 'Shouldn't we tone down the Singularity meme, for fear of panicking someone?' In introductory pages and print material, maybe. But there's no point in toning down the advanced Websites, even if technophobes might run across them. Given the kind of people who are likely to oppose us, we'll be accused of plotting to bring about the end of humanity regardless of whether or not we admit to it.

There's just too much of this document for me to ever post only the good parts. It's so intricately detailed. He had thought about what countries are least likely to make AI illegal. He advises anyone interested in the Singularity to make multiple offshore backups in his list of countries, and be ready to move at a moment's notice. He specifically addresses both Greenpeace activists and televangelists (and a whole host of others) as long-term threats to his plan. He has plans for a propaganda arm producing both "introductory pages and print material" as well as "advanced Websites", from back when you capitalized Websites. He even predicted that no one would ever need hardware more complex than what was available in 1999.

I mean, he's just wrong about everything. He's winning Terrible Futurist Bingo. He published this thing when he was the same age I was when I wrote my MS thesis. It took him at least two years to write. It's way the hell longer than my thesis. And every drat one of these intricately drawn-out steps was attempted then abandoned when it turned out to be hard; you can find the detritus of most of them spread about the internet. I guess it just wasn't important enough for him to do his Rationalist Limit Break or whatever and ~rewrite his brain~. The only thing in this whole document he actually did was found the Singularity Institute (which folded amid embezzlement controversy, so he founded MIRI).

My favorite part of the whole thing is the note at the top that this document is deprecated, because we really needed to be explicitly told that this plan for Singularity 2005 didn't come true. So of course he predicted Singularity 2030 instead. He's winning Doomsday Prophet Bingo today too.

http://www.yudkowsky.net/obsolete/plan.html


quote: The software industry isn't in trouble now, but there may be trouble brewing; to wit, CEOs and even CIOs starting to wonder whether investing trillions of dollars in software development - half of all capital investment in the US is going into information technology - is really paying dividends. Large projects in particular are starting to run into Slow Zone problems (27), the tendency for anything above a certain level of complexity to bog down. Of course, many of these people are still using COBOL, and there's not much you can do to help a company that clueless, but some projects use C++ or Java (or even Python) and still run into trouble.

quote: Given the historical fact that Cro-Magnons (us) are better computer programmers than Neanderthals, I would expect human-equivalent smartness to produce a sharp jump in programming ability, meaning that, for self-modifying AI, the intelligence curve will be going sharply upward in the vicinity of human equivalence.


So an interesting thing happened today. We were going around introducing ourselves in my philosophy class, when a painfully awkward guy stood up and introduced himself as a rationalist, and was evasive about the particulars when the professor asked. I could immediately tell that he didn't mean he was a fan of Plato. I knew it was a futile gesture, but I decided to try and warn him about the mistake he was making. So I grab him after class and just say 'Don't put stock in Eliezer Yudkowsky'. The guy just looks at me in shock because apparently he's never heard the name said out loud before. I spend like 5 minutes trying to get my point across, but he got kind of mopey when he realized I wasn't a true believer.

Anyway, he asked if I had any specific examples of Yud's hypocrisy, but this thread has made all Yud's crazy kind of blur together to me, and I don't want to reread this whole loving thread. So, can someone post their favorite 'Yud being indefensibly crazy' thing from earlier that they can remember? (I'm tempted to use the thing SolTerrasa linked but I'm not sure the guy knows anything about tech.)
