|

So the inevitable question becomes which of the cast members is secretly a robot. My bet's on Phi. She had to know who Sigma was somehow.

|

|

|

|

|

| # ? Jun 12, 2024 04:12 |

|

I think the question is less "Which characters are robots" and more "Which characters aren't robots."

|

|

|

|

The Sigma we see in the game is a robot being controlled via morphogenic fields by the real Sigma, who is somewhere else completely but unaware of this fact. Or maybe everyone is a robot controlled in this fashion. Incidentally, this all seems to be a logical extension of the whole computer/terminal conversation with Lotus from 999. Whereas in that game it acted as more of a hint towards the nature of the morphogenic field without being directly related, it seems the whole relationship is going to be explored in a more literal sense here. Or it could just all be a red herring.

|

|

|

|

So, someone in the group is a GAULEM, eh? I wonder if that extends to the "dead" old woman in the infirmary, or just the living cast.

|

|

|

|

I'm going to go out on a limb and theorize that everyone is a robot. Some (or all) of which are based on the memories of actual people. As in when the normal people were kidnapped, they were mindscanned or something. Which would mean that their bodies feeling different was just a side effect of them all being in robot bodies now. And each one is backed up, to run this game forever. Hence why they are able to solve puzzles forever, maybe even that number is relevant to the number of times they have gone through. Starting with the blank slate. But not entirely. There's some 'leakage'. Phi has some of it. Clover might even have some of it. She could have won a game, but got stuck back in. And it was a red herring to make us think she was upset at being stuck in yet another Nonary game. When she meant she was stuck in this Nonary game again. And Quark simply realized that essentially everyone has to die. Even if they win. Since the simulation/robot thing gets reset, and thus everyone 'dies'. Another possibility is it's entirely virtual, though not really sure how possible that is. It would make sense if every single detail gets reset, such as GOL-M not being dead. Although him being 'rogue' might actually be part of the game, and his 'death' was just faked.

|

|

|

|

Dr. Stab posted:I think the question is less "Which characters are robots" and more "Which characters aren't robots." Obviously K is the only human there. The suit is a form of camouflage.

|

|

|

|

K is the robot, and is also Killerman.

|

|

|

|

I figured it was the robot. It was perceptive of Sigma to realize that G-OLM shouldn't have known Alice's name. The existence of Gaulems could mean that the body could have been moved into K's elevator while everyone else was busy.

|

|

|

|

If this game ends with Sigma having to kill a Zero Gaulem via Snatcher-style gun-shooting minigames then I will buy ten copies of it

|

|

|

|

RentCavalier posted:So, in addition to a death game, somebody in the group may very well be an android and won't even know it. That's a spooky thought. Yep! So, now we're in a game with a murderer, an android, a potential axe murderer, psycho Quark, and a mysterious robot man. Assuming none of those are overlapping people, this is very spooky!

|

|

|

|

As somebody who was pretty sure early on that K was a robot, the revelation that at least one person in the group is probably a robot leads me to believe that K is absolutely human. My money's on Phi being a robot, for super jumping powers.

|

|

|

|

Super Jay Mann posted:If this game ends with Sigma having to kill a Zero Gaulem via Snatcher-style gun-shooting minigames then I will buy ten copies of it Sigma is actually a junker meant to find the real bad robots! YOU GET EM SIGMA

|

|

|

|

This scene would have been perfect if I didn't have an irrational hatred towards the Chinese Room being taken seriously. Same with Schrödinger's cat.

|

|

|

|

Dr. Stab posted:I think the question is less "Which characters are robots" and more "Which characters aren't robots." Y'know, I was thinking about 999 and how there was that pretty cool dual-screen twist at the end of the game, (Top screen is June's view and lower is Junpei's, I think it was?) and how I was kind of disappointed that they couldn't pull a trick like that again. But now that they're going on about Chinese box and convincing flesh robots.. Could they? A 3DS or Vita makes a pretty good Sigma-terminal... I'm beginning to wonder if Sigma is human after all.

|

|

|

|

When I listen to G-OLM, all I can hear is Scott McNeil as Merrick from DoW2: Retribution. I've got no evidence beyond that, though.

|

|

|

|

Green Intern posted:When I listen to G-OLM, all I can hear is Scott McNeil as Merrick from DoW2: Retribution. Certainly sounds like it. A bit of google search has turned up nothing so far.

|

|

|

|

tiistai posted:This scene would have been perfect if I didn't have an irrational hatred towards the Chinese Room being taken seriously. Same with Schrödinger's cat. Why does the Chinese Room bother you?

|

|

|

|

Poulpe posted:I'm beginning to wonder if Sigma is human after all.

|

|

|

|

tiistai posted:This scene would have been perfect if I didn't have an irrational hatred towards the Chinese Room being taken seriously. Same with Schrödinger's cat. I'm curious as to your thoughts on it! Personally I find it interesting that the whole point of the Chinese Room Argument (in John Searle's original paper) was to show that AI can never understand natural language and thus strong/sentient AI is not possible - the crux of it being that "I could run a program for Chinese without thereby coming to understand Chinese." - thus suggesting that programming for Chinese does not lead to an understanding of Chinese. There's a gap between how and why a native speaker understands Chinese and how the program goes about it. It's however interesting that the Chinese Room comes up in a scenario where there IS intelligent AI, thus meaning the Chinese room argument fails. Finally, the pain thing gave me shades of Wittgenstein (as much as it sounds pretentious to say so) - and how talking about pain is kind of meaningless when it comes to other people. What does pain even mean to a cockney robot! Is it really pain, or an analogue? We probably can't even talk about it - but that makes the exchange interesting still (if it's purpose driven or sensation driven). Either way, this section and the accent were awesome! VVVVVV Oh, that makes some more sense. So to complete the analogy the mainframe is the entity that 'writes' the Chinese translation books, by providing instruction on the meaning behind words and concepts and how to interact with humans. The Golem can only 'read the books' as it were - having limited learning/autonomy capacities but not a true grasp of natural language, whereas the mainframe and Zero III could be a Strong/Full AI. PlaceholderPigeon fucked around with this message at 23:49 on Jun 19, 2013 |

|

|

|

PlaceholderPigeon posted:It's however interesting that the Chinese Room comes up in a scenario where there IS intelligent AI, thus meaning the Chinese room argument fails. Even though I've played this game, I promise I've forgotten how/if Chinese Room comes into play to the story proper. I read it as GOL-M is a form of distributed AI (not really, it's the closest comparison I can think of). His explanations and reactions to the group are an example of Chinese Room. If GOL-M lost his connection to the "mainframe", he'd be "autonomous" in the sense he could still give pre-determined responses to experienced stimuli (that his creators/the master AI had already given him), but he's not sentient, nor could he react to completely new stimuli without the "mainframe" or whatever. So it's sort of an extension of "I guess I feel pain, but only in the sense that I know how I should respond to it." edit: Distributed AI is completely wrong. I can't think of the technical terms. Maybe something where the GAULEMs download instruction sets from the mainframe. edit2: More broadly, I think I missed your point that Chinese Room was an argument used to defeat AI theories in general, so never mind. slowbeef fucked around with this message at 23:45 on Jun 19, 2013 |

|

|

|

Considering G-OLM's spiel about the Chinese Room, the subconscious, and feeling kind of like humans do, I think it's safe to say any robots in the group aren't aware they're robots. (Unless they are also Zero.) Of course, knowing the twist from the first game, I'm sure all this information will come back to bite us in the rear end in new, terrifying ways we didn't even consider.

|

|

|

|

Assuming none of the information the cast has told us so far was a lie, that would probably rule out Quark and Tenmyouji, and likely Clover. Probably rule out K simply because of it being too obvious, so that would leave Phi, Dio, Luna and Alice. I'd go with Dio simply because he's the most dapper and it would be funny if someone programmed him to be a jerk.

|

|

|

|

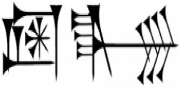

I've heard of the Chinese Room Argument used in terms of philosophy. Basically stuff relating to consciousness and minds/the minds of others. So it's not entirely out of place. And it's probably a big part of why the game is bringing it up. Kind of a 'what makes a human a human' thing. Is it just me or does the girl in the Chinese Room Argument look somewhat like Luna? Similar hair color/style. The 'vest' kind of clothing. Similar kind of build, at least I think so. Kind of hard to tell with the picture. It's gotta be more than a coincidence.

|

|

|

|

slowbeef posted:Even though I've played this game, I promise I've forgotten how/if Chinese Room comes into play to the story proper. That's pretty much the gist of the concept as VLR presents it. GOL-M himself is practically a guy (or well, robot guy) in a Chinese Room, with Sigma, Luna and Alice acting as the people communicating with him through the door. They talk to him, he processes their reactions and simulates a human-like response. The idea of the Chinese Room as presented in VLR is that all people can, in a sense, be considered to be inside a Chinese Room. The girl in the room made the people she was communicating with think she knew Chinese because she was simulating the knowledge using a dictionary to send back messages. But because she never told anyone on the other side that she doesn't actually know Chinese, they're convinced that she does know Chinese. GOL-M proposes that humans pick out how they are going to react, usually on a subconscious level, and that isn't all too different from the girl simulating her knowledge of Chinese to those outside the door. Basically the idea is that humans and AIs are not too far apart if a robot can simulate human behavior. And if a robot can properly convince everyone on the other side of the door that they're human, then what makes it different from a real human? Coupled with the fact that GAULEMs can be fitted to look human, it suddenly becomes clear that as long as there is no visible difference the GAULEM can pass for a human. And if it talks like a human, acts like a human, and looks like a human, then it may as well be a human.

|

|

|

|

Kgummy posted:I've heard of the Chinese Room Argument used in terms of philosophy. Basically stuff relating to consciousness and minds/the minds of others. So it's not entirely out of place. And it's probably a big part of why the game is bringing it up. Yeah, the crux of the Chinese room argument is that understanding natural language is something that only a human can do and that it cannot be programmed for. There is a core difference between being able to hold a conversation in a language one doesn't understand by following instructions and actually understanding what a particular word means in relation to the world or our perceptions. (But assuming the Robotic Player is a Strong AI, then they would understand language anyways!) Also significant is the relation between the Chinese Room argument and the Turing Test, since both deal with the idea of 'holding a conversation' and what it means to be a sentient AI. If we're dealing with someone being a robot, I imagine the latter's going to show up! I'm going to hazard a guess that there's going to be one of those coincidental conversation things like 999's Ice-9/Titanic/Crystals/Alice chain that leads to something substantial in the later game, if it concerns a robotic player. Also: Can the Robotic Player die via the poison in the bracelet when they reach 0 BP? And due to this, do their win or loss conditions change? I wonder... As for my guess on the robot, Luna or Tenmyouji are my two guesses. Luna's nondescript and the change would be an interesting twist, and an old looking robot seems like an interesting throwoff to me. Edit: Yeah, that's true - there's a lot of dualism/philosophy of mind stuff that is hinted at by the problem, and the hard problem of consciousness, etc. I suppose I'm partly doing the same thing as the robot is, musing because I'm bored. PlaceholderPigeon fucked around with this message at 00:17 on Jun 20, 2013 |

|

|

|

I'm seeing a lot of people sounding off about G-OLM's Chinese Room story being about AI (as was the argument in the original paper), but G-OLM (and the Hidden File) seems to be pretty clear that he's using it as more of a commentary on personal identity and the Mind/Body Problem than on AI. It is, as has been noticed, functionally an extension of Lotus's spiel in 999 about the wireless monitor; how would - how could - we tell whether our thoughts are going on in our own brains or simply being transmitted thereto from some other mechanism? We can't really think about thought because we'd have to use thoughts to do it, which is what we're thinking about in the first place and argh. All we're really aware of is stimulus -> response and that there's a causal link of some kind (this is the basis of that whole "I think therefore I am" thing, which is, in a way, where this whole mind/body discussion as we know it today started), but we don't know the details of how we get from one to the other. How can we investigate this? And what would it change, one way or the other? As the hidden file asks, what does this mean for ideas like Free Will? And so on. This is all just in response to Sigma asking him how he can feel pain, although it ties in with his point about how you can no more analyze your own seat of consciousness than someone else's. Basically, G-OLM is just waxing philosophical because he's bored.

|

|

|

|

G-OLM's voice sounds like Yangus from Dragon Quest 8, but that might just be because they're both doing ridiculous accents. I'm an American, I can't tell the difference between two guys with the same accent.

|

|

|

|

I wonder what natural language pundits would say about Watson, the computer that understands natural language well enough to beat the best human players at Jeopardy. The question of our bodies being controlled by some external force, the "soul" if you will, has already been tested and debunked based on the total dependence of the mind on the physical brain. Alter the physical brain and you alter the mind. One fun example was a split brain patient who had two separate personalities - one that controlled the mouth and one that communicated through the left hand. They were different enough that one personality was Christian and one was an atheist.

|

|

|

|

The fact that G-OLM's voice actor is uncredited makes me think it's one of the credited voice actors playing a second role. I can't prove anything, and I'm afraid of spoiling myself if I dig any deeper, but my money is on J.B. Blanc, the voice of Tenmyouji. He grew up in Yorkshire and he plays a lot of brash, working-class characters, but I haven't found an example of him doing a cockney accent specifically. Golem's information about the mainframe makes me wonder about Zero. Zero III asserts that he was programmed by someone else, and the other characters appear to be operating on the assumption that the real Zero is human, but what if that's not the case? What if Zero III is an AI programmed by another AI, who in turn was programmed by someone who may not even be alive anymore? What if it's robots all the way down?

|

|

|

|

Added Space posted:I wonder what natural language pundits would say about Watson, the computer that understands natural language well enough to beat the best human players at Jeopardy. The Chinese Room argument (reversed) would ask how you know that Watson itself is producing the answers, and not some remotely-located human who can understand the questions and is somehow providing the responses. The point is, as PlaceholderPigeon says, sort of a deconstruction of the Turing Test, which asks a human to converse with one other human and one non-human intelligence, then decide which is the human. The Turing Test suggests that if the non-human intelligence is sufficiently capable of acting as a human does to fool some number of humans, then it can be considered a humanlike intelligence. The Chinese Room argument compares an intelligence (human or not) to a non-intelligence, and posits that the two are capable of providing exactly the same set of responses to a given set of stimuli. If the responses can't be distinguished in any way, then how can the observer distinguish between an intelligence and a non-intelligence? I recall a story I read ages ago as an example of absurdism that featured a man who, among other things, had memorized the answer to every mathematical problem in existence. He couldn't actually do any math and frankly didn't understand how numbers worked, but given any problem, he could supply the correct answer. Anyone who tested him without asking how he came up with the answers would think he was a brilliant mathematician. Same principle, in the end.

|

|

|

|

I don't think everyone's a robot in this case, mainly because of the wording "In the middle of-", which I think was going to end with something along the lines of "In the middle of this very group are X number of robots just like me!"

|

|

|

|

Nidoking posted:The Chinese Room argument (reversed) would ask how you know that Watson itself is producing the answers, and not some remotely-located human who can understand the questions and is somehow providing the responses. The point is, as PlaceholderPigeon says, sort of a deconstruction of the Turing Test, which asks a human to converse with one other human and one non-human intelligence, then decide which is the human. The Turing Test suggests that if the non-human intelligence is sufficiently capable of acting as a human does to fool some number of humans, then it can be considered a humanlike intelligence. The Chinese Room argument compares an intelligence (human or not) to a non-intelligence, and posits that the two are capable of providing exactly the same set of responses to a given set of stimuli. If the responses can't be distinguished in any way, then how can the observer distinguish between an intelligence and a non-intelligence? I think a large problem with the whole 'Chinese Room' metaphor is learning capability - it's explicitly a fixed, pre-made library. It breaks the moment someone tells it something and then asks it to recall what they said later. Even if you handwave it as a magic library that has stored all possible conversations, what if that person tried to teach the Chinese Room to speak English? Suddenly the whole thing falls apart.
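
For what it's worth, the "fixed library breaks on recall" objection can be sketched in a few lines of Python. This is only a toy under stated assumptions: `RULEBOOK`, `chinese_room`, and `remembering_room` are made-up names for illustration, and the room modeled here is the fixed, pre-made library described in this post, not Searle's full thought experiment (whose rulebook could in principle include note-taking).

```python
# Hypothetical rulebook: fixed prompt -> reply pairs written in advance.
RULEBOOK = {
    "hello": "hello to you too",
    "how are you?": "fine, thanks",
    "what is my name?": "i cannot say",
}


def chinese_room(message: str) -> str:
    """Stateless room: look the message up and hand back the canned reply."""
    return RULEBOOK.get(message.lower().strip(), "i do not understand")


def remembering_room():
    """Contrast case: a room that keeps notes between messages."""
    memory = {}

    def reply(message: str) -> str:
        text = message.lower().strip()
        if text.startswith("my name is "):
            # Store whatever follows the phrase so it can be recalled later.
            memory["name"] = text[len("my name is "):]
            return "noted"
        if text == "what is my name?":
            return memory.get("name", "i cannot say")
        # Anything else falls back to the fixed rulebook.
        return chinese_room(message)

    return reply
```

Tell the stateless `chinese_room` your name and then ask for it back, and it has nothing to go on. The `remembering_room` version answers correctly, but only because it was given somewhere to write things down, which is exactly the capability the fixed library lacks.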

|

|

|

|

RoeCocoa posted:The fact that G-OLM's voice actor is uncredited makes me think it's one of the credited voice actors playing a second role. I can't prove anything, and I'm afraid of spoiling myself if I dig any deeper, but my money is on J.B. Blanc, the voice of Tenmyouji. He grew up in Yorkshire and he plays a lot of brash, working-class characters, but I haven't found an example of him doing a cockney accent specifically. But NONE of the English voice actors are credited. That's the problem.

|

|

|

|

I might be wrong, but I thought G-OLM was voiced by Nolan North.

|

|

|

|

So some member of the cast has got to be a secret robot to justify all this foreshadowing... unless the robot is a fakeout like Alice was last game, and we won't get to even meet the robot till the end credits, but that seems silly. The obvious one to not suspect is K, but what if he really is the robot? Imagine if we unlock K's suit and everyone makes a big deal about how he's free now, and we get to see what he really looks like, etc. etc. It's so interesting that it just gets burned into everyone's heads that he's obviously a human after all, so they'd never suspect the guy in the robot suit was actually a robot in a human suit. Maybe that's why he didn't think the robot suit felt unnatural! And maybe that's why he has amnesia, to see if he can even fool himself into thinking he's human. Or to cover for the fact that he doesn't have any memories of a human life.

|

|

|

|

Waffleman_ posted:But NONE of the English voice actors are credited. That's the problem. Somehow I missed that. In any event, unless G-OLM has more lines elsewhere, I think it makes sense for this minor character to be a secondary role for one of the known voice actors. Or Nolan North.

|

|

|

|

Added Space posted:I wonder what natural language pundits would say about Watson, the computer that understands natural language well enough to beat the best human players at Jeopardy. It's basically a smarter search engine. Albeit one that is closer to being able to understand language the way a human does. I'm going to predict that if we get a computer to understand language enough to be indistinguishable from humans, we'll have done one of these two things: 1) We will have already figured out how our minds work, and created something based on those theories. At the very least we'll have a much better understanding than we currently have. 2) We end up creating it accidentally, via a combination of things. Most likely via some sort of machine learning setup. Presumably with a wider goal. This will lead to us understanding how our minds work. Machine learning, as far as I understand it, is kind of like an advanced Chinese Room. You show it input, show it what the output should be. Repeat this a few times to get a 'baseline'. Then give it new input that it hasn't seen, have it respond. Then you tell it if it got it right or not. Well, that's one way of going about it; I suppose there's a few other ways. Someone who knows more about it will probably point out where I got it completely wrong, if I did. Somewhat related is the AI/computer they made schizophrenic a few years back, to test the 'hyperlearning' theory. DISCERN was what it was called.
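
The show-input, show-output, test, then correct loop described above can be sketched roughly as follows. Treat this as a guess at the simplest possible version: it uses a nearest-neighbor lookup, which is a swapped-in technique chosen for brevity, not how Watson or DISCERN worked. The class `NearestNeighbor` and its methods `show`, `respond`, and `correct` are hypothetical names, and the numbers in the usage note are invented.

```python
class NearestNeighbor:
    """Toy learner: remembers labeled examples and answers with the closest one."""

    def __init__(self):
        self.examples = []  # list of (input_value, label) pairs seen so far

    def show(self, x, label):
        """Training step: show an input together with its correct output."""
        self.examples.append((x, label))

    def respond(self, x):
        """Answer for an unseen input using the label of the nearest example."""
        _, nearest_label = min(self.examples, key=lambda ex: abs(ex[0] - x))
        return nearest_label

    def correct(self, x, right_label):
        """Feedback step: report whether the answer was right; learn it if not."""
        if self.respond(x) == right_label:
            return True
        self.show(x, right_label)
        return False
```

For example, after showing it 1.0 and 2.0 as "small" and 8.0 and 9.0 as "large", it calls 5.5 "large". Tell it that 5.4 should have been "small", and it then calls 5.5 "small" too. Unlike a plain lookup table, it gives an answer for inputs it has never seen, which is roughly the "advanced Chinese Room" idea.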

|

|

|

|

Let no one say I'm not working every angle:quote:Fedule @Fedule

|

|

|

|

Why is it such a top secret piece of information?

|

|

|

|

|


|

Obviously this piece of information was kept secret from normal means to test whether that information can be transmitted to us through morphogenic fields.

|

|

|