|

|

| # ? Jun 8, 2024 22:06 |

|

Freakazoid_ posted: The economic implications of certain advanced technologies, such as automated cars, are very drastic. There are some 3.6 million transportation jobs in the US that could be phased out by this technology with no obvious job creation aspect, especially for the low-income workers it will displace. I work in an industry that is very technologically advanced, and safety conscious to a degree not found outside of medical device testing. Do you think what I said earlier is simply neo-luddism?

Solkanar512 posted: I would certainly feel a great deal more confident about the "innovations" coming from our Silicon Valley overlords if the majority of the things being worked on were more than just me-too copycat apps trying to get bought out before the VC money runs out.

|

|

|

|

Regulations are very Economy 1.0, IMO.

|

|

|

|

How are u posted: Regulations are very Economy 1.0, IMO.

also what enron said so they could have power plants shut down to drive the price of electricity up in the newly deregulated CA power industry back in the 90's....

https://www.youtube.com/watch?v=nWCtKqYnLXA

|

|

|

|

for the thread's benefit, here is a video of a quality consumer product sold by goog: https://www.youtube.com/watch?v=BpsMkLaEiOY

more good google hardware: http://thewirecutter.com/reviews/best-wi-fi-router/#competition

|

|

|

|

uncurable mlady posted: child runs into road, terrified father runs after waving his arms to stop traffic

If the car isn't stopping when someone runs in front of it then we have bigger problems. You wouldn't want automated cars to stop because someone waved their hands in a particular way for the same reason we don't currently want automated cars to smash the brakes because of plastic bags. The vehicle should be making the proper decisions about objects that cross its path without any form of signaling, sensor-based or otherwise. The issue that was being discussed was the ability for the vehicle to recognize a temporary traffic signaling situation like a cop directing traffic, which is an obvious case where some kind of signaling device makes sense.

Your scenario is just really weird because stopping when something gets in front of them is the kind of thing automated cars will absolutely, 100% be better at than humans. The bigger problem is getting them to recognize when they don't have to stop because the thing in front of them is a bag blowing across the road.

Paradoxish fucked around with this message at 09:14 on Sep 11, 2015 |

|

|

Cicero posted:This is pretty much always true though, isn't it? I mean, as farming employment numbers went from "almost everybody" to "almost nobody" due to technological progress, there wasn't a direct link from, say, improvements in mechanized tractors to new jobs. The answer to "where did those people go for jobs" was just "everywhere else", as more efficient farming meant lower prices meant more consumer spending for other parts of the economy.

|

|

|

|

|

Paradoxish posted: If the car isn't stopping when someone runs in front of it then we have bigger problems. You wouldn't want automated cars to stop because someone waved their hands in a particular way for the same reason we don't currently want automated cars to smash the brakes because of plastic bags. The vehicle should be making the proper decisions about objects that cross its path without any form of signaling, sensor-based or otherwise. The issue that was being discussed was the ability for the vehicle to recognize a temporary traffic signaling situation like a cop directing traffic, which is an obvious case where some kind of signaling device makes sense.

sensors are indeed good at telling when something is in front of them. so good that $60 of electronics can completely foil the LIDAR and create phantom peds or obstacles!

http://spectrum.ieee.org/cars-that-think/transportation/self-driving/researcher-hacks-selfdriving-car-sensors

quote: Petit was able to create the illusion of a fake car, wall, or pedestrian anywhere from 20 to 350 meters from the lidar unit, and make multiple copies of the simulated obstacles, and even make them move. "I can spoof thousands of objects and basically carry out a denial of service attack on the tracking system so it's not able to track real objects," he says. Petit's attack worked at distances up to 100 meters, in front, to the side or even behind the lidar being attacked and did not require him to target the lidar precisely with a narrow beam.

|

|

|

|

Main Paineframe posted: B-b-but I might see a poor person on the bus!

I hate buses and love public transport. Buses are slow, because of traffic and having to stop every 50 feet to let on passengers. Letting on passengers takes longer than trains because they tend to get blocked in by traffic so it takes forever to pull off. They are also huge, so they can't zip in and out of traffic like private cars, so they get stuck in any congestion. Basically, they are the worst of all forms of transport combined. Nothing to do with "the poors".

|

|

|

|

BarbarianElephant posted: I hate buses and love public transport. Buses are slow, because of traffic and having to stop every 50 feet to let on passengers. Letting on passengers takes longer than trains because they tend to get blocked in by traffic so it takes forever to pull off. They are also huge, so they can't zip in and out of traffic like private cars, so they get stuck in any congestion. Basically, they are the worst of all forms of transport combined. Nothing to do with "the poors".

Actually streetcars are worse but thankfully only idiots want to bring those back.

|

|

|

|

buses are p nice in paris, not as nice as the metro obviously, but if you have to transfer twice on the metro the general wisdom is to just take a bus instead. they're pretty needs suiting IMO

maybe they only work in places that aren't actively sabotaging their public transport

|

|

|

|

Dedicated busways and bus lanes help massively, as do plenty of large bus terminals (instead of a bunch of stops). A good train network kind of needs a good bus network anyway.

rudatron fucked around with this message at 15:54 on Sep 11, 2015 |

|

|

|

uncurable mlady posted: sensors are indeed good at telling when something is in front of them. so good that $60 of electronics can completely foil the LIDAR and create phantom peds or obstacles!

I seriously have no idea what you're arguing with or what you think I'm saying. It doesn't matter how well LIDAR works, you still wouldn't solve the problem of "how do I stop this car for a pedestrian running in front of it?" by detecting someone waving their arms around. The car should always be stopping for objects that cross its path and never (without user intervention) for some random guy waving his arms around on the side of the street.

|

|

|

|

Nessus posted: This seems to be the "Might as well not make any laws at all, then" argument again. Like you seem to be saying "If the people being abused can't afford enforcement, why bother making it illegal?" Do you feel laws should only be structured to protect the wealthy, like, as a design feature?

Uh, no, you completely missed the point. There's a big difference between a cop exercising selective enforcement of a speeding law or something, and a law that literally requires you to be rich or have connections before you can attempt to have it enforced. I don't think it's a coincidence that laws that protect workers tend to work like the latter.

|

|

|

|

Paradoxish posted: I seriously have no idea what you're arguing with or what you think I'm saying. It doesn't matter how well LIDAR works, you still wouldn't solve the problem of "how do I stop this car for a pedestrian running in front of it?" by detecting someone waving their arms around. The car should always be stopping for objects that cross its path and never (without user intervention) for some random guy waving his arms around on the side of the street.

gonna have to disagree with you here. seeing as sensors, software, and hardware are not infallible, it would behoove us to have an automated car be able to take cues like certain hand/arm signals from nearby humans as a signal to make an emergency stop. it acts as a redundancy measure that can improve safety for pedestrians and safety in general. there is the capacity for abuse of course, but just like with fire alarms and other warning signals we should punish abusers rather than prevent the public from accessing such safety measures.

|

|

|

|

Condiv posted: gonna have to disagree with you here. seeing as sensors, software, and hardware are not infallible, it would behoove us to have an automated car be able to take cues like certain hand/arm signals from nearby humans as a signal to make an emergency stop. it acts as a redundancy measure that can improve safety for pedestrians and safety in general.

You're kind of missing the point, though, in that there's no redundancy in what you're talking about. The car would detect someone waving their arms around using the same data that allows it to detect the fact that there's an object there at all, but with the added complexity of having to make a determination beyond stopping for an obstruction. The simplest, safest solution is to have the car stop when an object is in its path. The problem, which has already been brought up in the thread, is actually the risk of the car being too sensitive and stopping suddenly for things like shopping bags.

Edit- It's worth pointing out that there are several technologies already in production that are intended to detect obstructions and warn drivers. I think you're going to see attitudes towards this stuff change rapidly as technology like that is integrated into new cars and drivers realize that their car is warning them about obstacles they were still completely unaware of.

Paradoxish fucked around with this message at 17:44 on Sep 11, 2015 |

|

|

|

First, we develop sensors that can tell the difference between someone about to run into traffic from the sidewalk and someone waving hello to a friend on the sidewalk. Then we simply develop the strong AI to distinguish between the implications of these different behaviors on potential roadway impacts. I'd say this will all come within *spins wheel* 18 months. Those who disagree with these well-established analysts hate technology and probably puppies.

|

|

|

|

Just train squirrels to drive cars.

|

|

|

|

D&D'ers will be happy to see this:

quote: Employees of staffing companies are starting to revolt, however, ushering in what may be a new era of higher labor standards in the famous tech hub. These workers are organizing, voting for union membership and the power of collective bargaining, and publicly exposing their working conditions (namechecking the companies that contract their services) in the hopes of stirring up popular support. "Adecco" may not mean anything to the average consumer, but "Google" certainly does, and many Google customers care about corporate accountability. If workers succeed, they could have a huge influence on newer tech hubs like New York and Chicago.

quote: Asked for comment on workers' claims of labor problems and union-busting, an Adecco spokesperson said, "There have been a number of false claims made throughout this election process; we're not able to comment on active legal matters but objections were filed with the NLRB around the election process. ... We will fully cooperate with the NLRB's investigation and the upcoming hearing." She added that the company is "supportive of any direction freely chosen by our associates" but "believe[s] that our associates are better off directly dealing with us as their employer rather than involving a union."

I have no idea what this actually means though:

quote: Already, software engineers write the bulk of new code, while a large pool of programmers act more like assembly line workers, snapping together lines of pre-existing code.

|

|

|

|

Bob James posted: Just train squirrels to drive cars.

Heh that reminds me of pigeon powered homing missiles:

wikipedia posted: During World War II, Project Pigeon (later Project Orcon, for "organic control") was American behaviorist B.F. Skinner's attempt to develop a pigeon-guided missile.

https://en.m.wikipedia.org/wiki/Project_Pigeon

|

|

|

|

Cicero posted:

no, it's pretty common. by pre-existing code they just mean like templates or chunks of code which lower-paid people then pull out of a box when needed. i'm not a programmer but i'm code literate and it's not uncommon for me to send sample or test code to customers that someone else has written, or ask one of our developers to write something which i then plug in somewhere to solve a problem and maybe tweak if necessary

i can write maybe a simple javascript thing. but i can see where in our software a customer is having a problem, solicit a fix from a dev, then implement that fix - the developer spends maybe a half hour on the task

boner confessor fucked around with this message at 18:57 on Sep 11, 2015 |

|

|

|

Popular Thug Drink posted: no, it's pretty common. by pre-existing code they just mean like templates or chunks of code which lower-paid people then pull out of a box when needed. i'm not a programmer but i'm code literate and it's not uncommon for me to send sample or test code to customers that someone else has written, or ask one of our developers to write something which i then plug in somewhere to solve a problem and maybe tweak if necessary

quote: i can write maybe a simple javascript thing. but i can see where in our software a customer is having a problem, solicit a fix from a dev, then implement that fix - the developer spends maybe a half hour on the task

edit: \/\/\/ yeah "you can totally mess with this car by shining a laser at it!!" doesn't seem like a real strike against self-driving cars.

Cicero fucked around with this message at 19:28 on Sep 11, 2015 |

|

|

|

uncurable mlady posted: sensors are indeed good at telling when something is in front of them. so good that $60 of electronics can completely foil the LIDAR and create phantom peds or obstacles!

We are indeed fortunate that human drivers are immune to laser dazzling weapons. Perhaps, in the future, intentionally using laser weapons to blind a driver will be illegal.

|

|

|

|

Paradoxish posted: You're kind of missing the point, though, in that there's no redundancy in what you're talking about. The car would detect someone waving their arms around using the same data that allows it to detect the fact that there's an object there at all, but with the added complexity of having to make a determination beyond stopping for an obstruction. The simplest, safest solution is to have the car stop when an object is in its path. The problem, which has already been brought up in the thread, is actually the risk of the car being too sensitive and stopping suddenly for things like shopping bags.

no not necessarily. for example, take the current LIDAR sensor's inability to properly detect potholes. now imagine that a person has been hit by a car and is wounded. would the LIDAR, which already has trouble detecting low level obstacles like potholes, be able to detect an injured person lying in the road?

you're also taking detection and classification of obstacles by a neural network, a probability based system, as foolproof. remember that we are talking about a technology that even at its best can never detect 100% of obstacles correctly. even small changes that you would think wouldn't make a difference in detection can very well cause a false negative. that's just one reason why adapting these cars to be able to take human communication into account is important. the detection of such things would of course not be 100% either, but if the sensors have a 5% chance of misclassifying a person injured in the road as not an obstacle it needs to stop for, and a 4% chance of not recognizing human communication such as hand signals to stop, you have a 99.8% chance of either of those inputs being recognized and stopping the vehicle as opposed to a 95% chance of stopping when needed. that is the definition of redundancy.

as i have mentioned before, neither formal verification nor bughunting is particularly useful with neural networks thanks to their nature. in the google car's case, it is most likely the equivalent of a million+ variable function with weights and biases applied to every variable and generated entirely by a computer. it is incredibly difficult for us to verify such systems. as such, the best method of verification of neural networks is the incorporation of as disparate a set of training data as possible, and trying to maximize the neural net's response to be correct for as high a percentage of that data as possible. even with that, the accuracy rate you end up with is a lab accuracy rate only; on real world data, accuracy tends to decline by a variable percentage based on the peculiarities of your neural network kernel.

in any case, it is difficult to the point of impossibility to have a neural network properly classify all inputs 100% of the time. that's why you should not presume that an automated car based on one would detect an obstacle that a person on the side of the road was trying to warn it about if it could detect that person's movements.

edit: part of the reason i keep harping on the lack of applicability of formal verification to neural networks is because it is one of the big software development techniques that need to be used in life-critical systems. formal verification is mathematically proving that for all acceptable inputs to a function, it will behave as expected. formal verification of software generally means the software will not fail as long as the hardware and operating system do not.

Condiv fucked around with this message at 20:04 on Sep 11, 2015 |
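
For anyone wanting to check the 99.8% figure in the post above, here is a minimal sketch of the arithmetic. It only reproduces that number if the two failures (missing the obstacle, missing the hand signal) are statistically independent, which is exactly what the earlier "same data" objection disputes. The function names are hypothetical, and the second helper just shows the range of outcomes when nothing is assumed about how the two failures correlate.

```python
"""Sanity check of the redundancy arithmetic (hypothetical helper names)."""


def stop_chance_independent(p_miss_obstacle: float, p_miss_signal: float) -> float:
    """Chance at least one cue is recognized, ASSUMING the misses are independent."""
    return 1.0 - p_miss_obstacle * p_miss_signal


def stop_chance_bounds(p_miss_obstacle: float, p_miss_signal: float) -> tuple[float, float]:
    """Worst and best case when nothing is assumed about correlation."""
    # Most overlap: whenever the rarer failure happens, the other happens too.
    worst = 1.0 - min(p_miss_obstacle, p_miss_signal)
    # Least overlap: the failures coincide as rarely as probability allows.
    best = 1.0 - max(0.0, p_miss_obstacle + p_miss_signal - 1.0)
    return worst, best


if __name__ == "__main__":
    p_obstacle, p_signal = 0.05, 0.04  # miss rates quoted in the post
    print(f"independent: {stop_chance_independent(p_obstacle, p_signal):.3f}")  # 0.998
    worst, best = stop_chance_bounds(p_obstacle, p_signal)
    print(f"bounds: {worst:.3f} to {best:.3f}")  # 0.960 to 1.000
```

So with the quoted miss rates, the second cue helps by somewhere between one and five percentage points over the 95% baseline, depending on how often the two detectors fail on the same frames.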

|

|

|

Paradoxish posted: I seriously have no idea what you're arguing with or what you think I'm saying. It doesn't matter how well LIDAR works, you still wouldn't solve the problem of "how do I stop this car for a pedestrian running in front of it?" by detecting someone waving their arms around. The car should always be stopping for objects that cross its path and never (without user intervention) for some random guy waving his arms around on the side of the street.

What about bicyclists, who typically use hand signals to indicate what they're about to do? Should the car completely ignore a bicyclist who's about to change lanes, until they enter the car's lane, at which point it will come to a sudden and complete stop in the middle of the road?

|

|

|

|

It's kinda weird how self-driving cars need to have a billion scenarios ready in case someone is "lying injured in the road" and yet if a human driver hit someone lying in the road, nothing would happen to them legally

Main Paineframe posted: What about bicyclists, who typically use hand signals to indicate what they're about to do? Should the car ignore them completely unless they veer in front of it, at which point it will come to a sudden and complete stop in the middle of the road?

The car should ignore them unless the bicycle is predicted to intercept its path, kinda like how human drivers do. BTW I live in a very bicycle heavy area and I'd say less than 10% of bicyclists signal, ever.

|

|

|

|

Radbot posted: It's kinda weird how self-driving cars need to have a billion scenarios ready in case someone is "lying injured in the road" and yet if a human driver hit someone lying in the road, nothing would happen to them legally

https://en.wikipedia.org/wiki/Therac-25

"doctors screw up and accidentally kill people all the time, so it's totes ok that engineering incompetence caused this machine to irradiate a bunch of people to death"

|

|

|

|

Radbot posted: It's kinda weird how self-driving cars need to have a billion scenarios ready in case someone is "lying injured in the road" and yet if a human driver hit someone lying in the road, nothing would happen to them legally

hm yes, we should determine if we can find machines criminally liable for an accident

-or-

there's a high chance the driver would face criminal charges

|

|

|

|

Popular Thug Drink posted: hm yes, we should determine if we can find machines criminally liable for an accident

If you can find me a single case, anywhere in the US, where a driver has been successfully prosecuted for not seeing someone lying in the road and running them over, I will PayPal you $10 right now.

Condiv posted: https://en.wikipedia.org/wiki/Therac-25

I guess I don't see the big difference between human and engineering error, and why we accept human error like it's nothing. I agree though that our attitude towards human incompetence is pretty shameful.

|

|

|

|

Cicero posted: What? I think the person who wrote this doesn't understand how programming works.

They don't know what engineering means, so that's kind of a given.

|

|

|

|

Main Paineframe posted: What about bicyclists, who typically use hand signals to indicate what they're about to do? Should the car completely ignore a bicyclist who's about to change lanes, until they enter the car's lane, at which point it will come to a sudden and complete stop in the middle of the road?

quote: As for cyclists, the car's technology recognizes and differentiates them specifically, and is familiar with typical rider behavior. For example, its sensors pick up commonly used hand signals from riders, allowing it to react within ample time and distance to the anticipated movements made.

That seems like a much more common and easy-to-understand case than "frantically wave arms because someone ran into the street" though.

Cicero fucked around with this message at 20:20 on Sep 11, 2015 |

|

|

|

Radbot posted: I guess I don't see the big difference between human and engineering error, and why we accept human error like it's nothing. I agree though that our attitude towards human incompetence is pretty shameful.

we can minimize machine error through best practices and good engineering. human error is a constant. failing to do your best to minimize machine error in life or death situations is negligence

|

|

|

|

Radbot posted: If you can find me a single case, anywhere in the US, where a driver has been successfully prosecuted for not seeing someone lying in the road and running them over, I will PayPal you $10 right now.

do they have to be already lying in the road motionless and without injury from some other pedestrian-vehicle collision and the driver also perfectly sober and alert because i want to make sure i pass all of your hopeless pedant filters before i just google "driver convicted for killing pedestrian" which anyone is capable of doing

ironically a pedestrian was struck and injured outside of my apartment yesterday but they were standing up so i guess it's all ok!

|

|

|

|

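Condiv posted: we can minimize machine error through best practices and good engineering. human error is a constant. failing to do your best to minimize machine error in life or death situations is negligence

We can minimize human error through good (traffic) engineering too. We just usually choose to give more priority to speed/traffic flow. There's been some headway on this with the recent hype around Vision Zero.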

|

|

|

|

Popular Thug Drink posted: do they have to be already lying in the road motionless and without injury from some other pedestrian-vehicle collision and the driver also perfectly sober and alert because i want to make sure i pass all of your hopeless pedant filters before i just google "driver convicted for killing pedestrian" which anyone is capable of doing

I'm not sure what a pedant filter is, but again, you'll find yourself $10 richer if you can find a case that is even similar to the one condiv talked about.

Condiv posted: human error is a constant.

Citation loving needed on this one.

|

|

|

|

Cicero posted: We can minimize human error through good (traffic) engineering too. We just usually choose to give more priority to speed/traffic flow. There's been some headway on this with the recent hype around Vision Zero.

i meant it more in the sense that humans will always make mistakes, but yeah, with good engineering you can reduce the harm said mistakes cause and reduce the chances of a mistake resulting in death or injury

|

|

|

|

Humans will always be more lenient with other humans compared with machines. It's why people say that AI has to understand your commands 100% of the time to be effective even though people need stuff clarified all the time.

|

|

|

|

Humans will always make the same mistakes at the same rate, regardless of anything done to change their behavior. Makes sense.

|

|

|

|

computer parts posted: Humans will always be more lenient with other humans compared with machines. It's why people say that AI has to understand your commands 100% of the time to be effective even though people need stuff clarified all the time.

Actually this is true and a point I made earlier and it's going to push back automated cars by an extra decade at least. People won't tolerate machines (programmed by corporations) running over their children. The standard for automated cars is going to be higher than for human drivers.

|

|

|

|

|


|

Condiv posted: in any case, it is difficult to the point of impossibility to have a neural network properly classify all inputs 100% of the time. that's why you should not presume that an automated car based on one would detect an obstacle that a person on the side of the road was trying to warn it about if it could detect that person's movements.

The reason I keep jumping on this is because "frantically waving at a car from the side of the road" is actually a really stupid thing to do with human drivers, and trying to get an automated car to interpret that kind of signal just seems foolish and backwards. If someone is gesturing wildly at me while I'm driving down the street, all they're really doing is taking my attention away from what's in front of me and whatever it is they're trying to warn me about. It's already a thing that humans have a desperately hard time dealing with (watch how often people gently caress up when a cop is directing traffic), and it's an attempt to solve a problem (the limited ability of a person to focus on all things at once) that autonomous vehicles wouldn't even have.

The same goes for a person lying down in the road or whatever other scenario you want to imagine. No matter how hard it might be to get the car to detect a person lying there, it's still an easier problem to solve than trying to interpret a hand signal pointing at something the car can't even see in the first place.

Paradoxish fucked around with this message at 20:30 on Sep 11, 2015 |

|

|

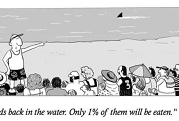

I can't sit next to those people!
