|

they probably are making Vega11 a GDDR5X card, but curiously that might be released later than V10.

|

|

|

|

|

| # ? Jun 3, 2024 21:03 |

|

On UK ebay I'm seeing more and more titan x on buy it nows for the same kinda price as new 980ti go for. I mean I'm still not spending £500+ on a card, but it's nice to see them dropping.

|

|

|

|

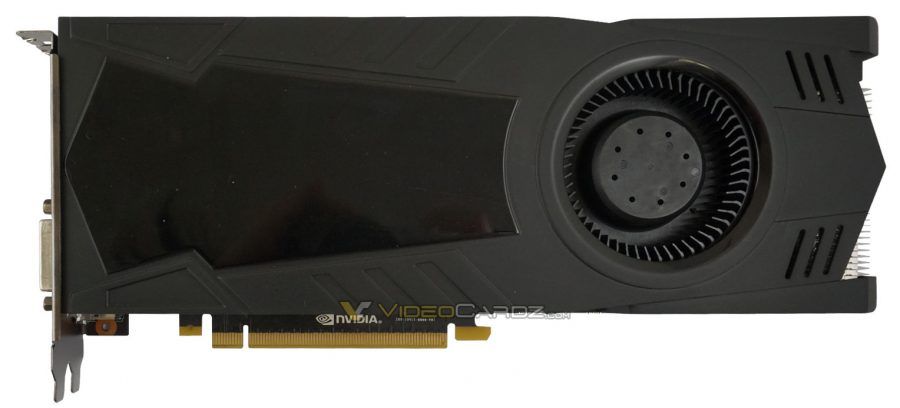

Waiting for the first custom 1080? Wait no more...... No wait, wait more

|

|

|

|

froody guy posted: Waiting for the first custom 1080? Wait no more......

I hope all the "custom" cards at the MSRP level aren't all poo poo like that

|

|

|

|

gently caress yah, bring back crazy boxes.

|

|

|

|

xthetenth posted: They do the next best thing and sell a version with a block.

EVGA actually does precisely this, and explicitly states that they will honor the warranty if you swap the cooler so long as you do not physically damage anything during the swap. It's the prime reason I buy them over other vendors, even if they might be a few bucks more at times: I can slap my AIO on it and still be covered. I mean, I'd imagine they'd still probably have something to say if you literally cooked your chip, but frankly you're a lot more likely to damage it via overvolting too far (which is cooler-independent anyhow) than through actual heat buildup.

|

|

|

|

|

|

|

|

froody guy posted: Waiting for the first custom 1080? Wait no more......

I've never heard of Galax before. I now know why.

|

|

|

|

I was under the impression that they were a pretty ok brand, but mostly operated in the EU.

|

|

|

|

OhFunny posted: I've never heard of Galax before.

(Their reputation isn't brilliant though. There's a reason they aren't called Galaxy anymore, and I think that's the reason they don't operate in the US.)

|

|

|

|

Yeah Galax is pretty awesome, made some really great 9xx cards like the HOF, but the others also had some of the best PCBs and performance in general. Really one of the best brands. Until now. But I'm sure they'll have more to say. Actually I think nvidia paid them to pull this one out so that everybody runs for the Scammers Edition.

|

|

|

|

That card is brilliant. Basically has an epower classified integrated as opposed to having to do this. I think they even sold a limited edition one without a cooler.

edit: yep. The GALAX GTX 980 Ti HOF LN2 Edition was limited to a few hundred units and had no stock cooler.

|

|

|

|

I know they sold a version with a water block on it at least. However all the HOF stuff is likely roughly equivalent to the EVGA classy (sans binning) and MSI lightning.

EDIT: Oh god that thing. Now I remember the LN2 version.

xthetenth fucked around with this message at 23:26 on May 10, 2016 |

|

|

|

Bleh Maestro posted: I hope all the "custom" cards at the MSRP level aren't all poo poo like that

Lol probably, the consistently cheapest 9 series cards were like that too. Although notably they were very quickly cheaper than MSRP in a lot of brands (MSRP is MSRP after all).

Unless there is any good reason to believe otherwise, the good stuff will be $20-30 over MSRP, simply because that's how it's been since at least the 600 series.

I remember somebody here buying that HOF card and having to cancel it when he found out in this thread that it had no cooler.

|

|

|

|

THE DOG HOUSE posted: Lol probably, the consistently cheapest 9 series cards were like that too. Although notably they were very quickly cheaper than MSRP in a lot of brands (MSRP is MSRP after all)

I mean I don't care if it's $20-30 more, but if the "founders edition" $100 more actually becomes the floor price for the actual good partner cards, that would suck (for me)

|

|

|

|

Given the fact that I'm listening to one source, this could easily be faulty information but... AdoredTV put out a video examining the 1080 and 1070, and in relative terms it actually seems like these cards are performing about where you could have reasonably expected them? Which gives me hope that AMD will be competitive this time around, in the areas they decide to compete? I just want to use the freesync in this monitor

|

|

|

|

Bleh Maestro posted: I mean I don't care if it's $20-30 more, but if the "founders edition" $100 more actually becomes the floor price for the actual good partner cards, that would suck (for me)

If there is one reason (I mean there's not, but....) for the Founders to cost 100 bux extra, it's to not create too much competition with the partner ones, so I'm pretty sure the partner cards will cost less. Nvidia cashes in first and rides the hype wave, then they let the others roll the ball. Not that they don't know how to milk us back and forth, right?

froody guy fucked around with this message at 00:30 on May 11, 2016 |

|

|

|

froody guy posted: If there is one reason (I mean there's not, but....) for the Founders to cost 100 bux extra, it's to not create too much competition with the partner ones, so I'm pretty sure they'll cost less. Nvidia cashes in first and rides the hype wave, then they let the others roll the ball. Not that they don't know how to milk us back and forth, right?

The reason is that they are doing a near-paper launch with engineering samples of GDDR5X to try and steal AMD's thunder, but they can't say openly that there's hardly going to be any stock. And yeah, they cash in on the hype wave, because people will pay it. And best of all they raise the launch price without appearing to do so, since AIBs will launch lovely cards for them in a month or three when supplies stabilize. People would be pissed if the reference card launched at $700, but this isn't reference, it's the

Again, "not wanting to undercut their partners" has nothing to do with it. They happily undercut AIBs all the time, most conspicuously the Titan X launch where they were $100-200 cheaper than AIBs.

Paul MaudDib fucked around with this message at 01:39 on May 11, 2016 |

|

|

|

Paul MaudDib posted:Again, "not wanting to undercut their partners" has nothing to do with it. They happily undercut AIBs all the time, most conspicuously the Titan X launch where they were $100-200 cheaper than AIBs.

|

|

|

|

Wasn't sure if this was already posted: http://videocardz.com/59808/amd-vega-gpu-allegedly-pushed-forward-to-october

|

|

|

|

PerrineClostermann posted: I just want to use the freesync in this monitor

If your video card puts out frame rates in games higher than your monitor's refresh rate, freesync won't even be needed.

|

|

|

|

Nfcknblvbl posted: If your video card puts out frame rates in games higher than your monitor's refresh rate, freesync won't even be needed.

This isn't true. Even if your card puts out 90 fps on a 60 Hz monitor, it still tears constantly (same if you limit it to 60). Even if you don't think you notice it, you will notice it for sure when you see the same thing with freesync or gsync.

Now, the fact that the refresh rate can truly be variable without tearing below your refresh rate is a huge deal too, and much easier to point out as a demonstration, but it's a positive at both ends of the spectrum.

|

|

|

|

THE DOG HOUSE posted: This isn't true. Even if your card puts out 90 fps on a 60 Hz monitor, it still tears constantly (same if you limit it to 60). Even if you don't think you notice it, you will notice it for sure when you see the same thing with freesync or gsync.

If you can do a solid 90FPS on a 60Hz monitor you can just enable vsync

|

|

|

|

DrDork posted: If you can do a solid 90FPS on a 60Hz monitor you can just enable vsync

You're still getting a wider variation between when the frame starts rendering and when it's displayed with *sync, but it's going to be lessened.

|

|

|

|

DrDork posted: If you can do a solid 90FPS on a 60Hz monitor you can just enable vsync

Yeah, and it's pretty close. I just dislike the extra load on the GPU and the lag (and it's nowhere near as perfect as actually matching the refresh with the cool tech). But, frankly, even when I notice it I don't even care that much lol. I will dig it a lot more when it costs almost the same as normal monitors, and I know this sounds like heresy, but I wouldn't mind normal-priced 60 Hz monitors with this feature. A perfect 60 Hz is great for me, I put a lot more value in resolution, but I doubt I'll see one of those anytime soon.

But even with vsync, if you do frame time graphs it's clearly not gsync or freesync, and that weird feeling you get when you see one of those monitors working is when those frames are actually perfect imo.
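For anyone who wants to see the frame time difference in numbers, here is a rough Python sketch. It is a toy model, not how any driver actually works: `flip_intervals` and its parameters are made-up names, vsync is modeled as plain double buffering where the GPU waits for the flip before starting the next frame, and adaptive sync is modeled as "show the frame as soon as it's done, but no sooner than one refresh period after the last one". It ignores the panel's minimum refresh rate entirely.

```python
from fractions import Fraction
import math


def flip_intervals(render_ms, refresh_hz=60, adaptive=False):
    """Return the time (ms) between displayed frames for a list of render times.

    Hypothetical toy model:
      - vsync: a finished frame waits for the next fixed refresh tick.
      - adaptive: a finished frame is shown immediately, but never sooner
        than one refresh period after the previous flip.
    """
    period = Fraction(1000, refresh_hz)  # refresh period in ms, kept exact
    now = Fraction(0)        # when the GPU starts rendering the current frame
    last_flip = Fraction(0)  # when the previous frame hit the screen
    intervals = []
    for duration in render_ms:
        done = now + duration
        if adaptive:
            flip = max(done, last_flip + period)
        else:
            # round the finish time up to the next refresh tick
            flip = math.ceil(done / period) * period
        intervals.append(round(float(flip - last_flip), 2))
        last_flip = flip
        now = flip  # the GPU starts the next frame right after the flip
    return intervals


if __name__ == "__main__":
    steady = [20, 20, 20, 20]  # a GPU that needs 20 ms per frame (50 fps)
    print("vsync:   ", flip_intervals(steady))
    print("adaptive:", flip_intervals(steady, adaptive=True))
```

With a steady 20 ms render time on a 60 Hz panel, the vsync model shows a new frame every 33.33 ms, while the adaptive model shows one every 20 ms. That gap is the performance hit being talked about, at least under these assumptions.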

|

|

|

|

THE DOG HOUSE posted: Yeah, and it's pretty close. I just dislike the extra load on the GPU and the lag (and it's nowhere near as perfect as actually matching the refresh with the cool tech). But, frankly, even when I notice it I don't even care that much lol. I will dig it a lot more when it costs almost the same as normal monitors, and I know this sounds like heresy, but I wouldn't mind normal-priced 60 Hz monitors with this feature. A perfect 60 Hz is great for me, I put a lot more value in resolution, but I doubt I'll see one of those anytime soon.

I'm with you in hoping that freesync just becomes a baked-in feature of every monitor on the market. Even if it's not great, it's iirc not a particularly expensive addition, and it's especially nice to have when you're shaving pennies off with cheaper parts.

|

|

|

|

fozzy fosbourne posted: Wasn't sure if this was already posted:

The question now: is this because of GP104, hedging predictions on supply, a paper launch, and/or everyone rushing to conclusions?

|

|

|

|

FaustianQ posted: The question now is this because of GP104, hedging predictions on supply, a paper launch and/or everyone rushed to conclusions?

If I disappear from the thread it's because I've taken so many things with a grain of salt that I have hypertension.

|

|

|

|

It amazes me that a GTX 1080 + 6700k uses less than 300 watts.

|

|

|

|

DrDork posted: If you can do a solid 90FPS on a 60Hz monitor you can just enable vsync

Is vsync a thing I should enable? I have no idea what tearing is to be honest and my monitor is 60 Hz.

|

|

|

|

PerrineClostermann posted: Given the fact that I'm listening to one source, this could easily be faulty information but...

AMD is releasing their low to mid range parts first so we'll see how it works out.

|

|

|

|

Really hope Polaris delivers close to 1070 performance at the $200 price point.

|

|

|

|

Boris Galerkin posted: Is vsync a thing I should enable? I have no idea what tearing is to be honest and my monitor is 60 Hz.

As you probably know, your monitor draws the pixels in a frame line by line from top to bottom. When your graphics card isn't sending your monitor frames in time with its refresh rate, sometimes the monitor will draw part of a frame, receive the data for the next one, and switch to it partway through, causing a "tear" to appear for a split second as it shows part of one frame and part of another. It's particularly noticeable when you swing the camera around quickly since the difference between one frame and the next is going to be more striking, and it's unsightly enough that some people are willing to take a performance hit and some input delay to fix it. If it doesn't bother you then there's no particular reason to enable vsync.

Freesync/Gsync apparently solve the performance/input lag problems while also reducing stuttering when you can't hold a framerate above your monitor's refresh rate. Since your framerate keeps dipping, your monitor receives frames at uneven rates, but it can still space them out such that it looks smooth by changing its refresh rate to match the fps you're getting at any given time. So that might be worth looking into next time you're buying a monitor even if you don't care about tearing.

HMS Boromir fucked around with this message at 09:41 on May 11, 2016 |
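If it helps to picture where the tear actually lands, here is a small Python sketch of the explanation above. It is only an idealized model with made-up names (`tear_lines`, `rows`): it assumes vsync is off, the card finishes frames at a perfectly steady rate, the first frame lines up with the start of a refresh, the monitor scans top to bottom at constant speed, and there is no blanking interval. Real hardware is messier than this.

```python
from fractions import Fraction


def tear_lines(fps, refresh_hz, refreshes, rows=1080):
    """For each refresh, list the rows where a new frame arrives mid-scan.

    Hypothetical toy model: frame n arrives at exactly n / fps seconds and
    the monitor switches to it instantly, wherever the scanout happens to be.
    """
    frame_time = Fraction(1, fps)
    refresh_time = Fraction(1, refresh_hz)
    result = []
    for k in range(refreshes):
        start = k * refresh_time
        end = start + refresh_time
        tears = []
        # first frame that arrives strictly after this refresh has started
        n = start // frame_time + 1
        while n * frame_time < end:
            # fraction of the screen already drawn when the swap happens
            progress = (n * frame_time - start) / refresh_time
            tears.append(int(progress * rows))
            n += 1
        result.append(tears)
    return result


if __name__ == "__main__":
    # 90 fps on a 60 Hz, 1080-row panel with vsync off
    print(tear_lines(90, 60, 4))
```

In this model, 90 fps on a 60 Hz panel gives one tear per refresh, alternating between row 720 and row 360. A card running at exactly 60 fps and perfectly in phase gives no tears at all, which is the situation vsync tries to force.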

|

|

|

Should be pointed out that some monitors simply seem to not tear as much. I very rarely get tears on my monitor, most games run fine with vsync off. My dad's ancient 15" lcd on the other hand literally looks like someone split the screen in half when running around counterstrike with vsync off.

Truga fucked around with this message at 10:22 on May 11, 2016 |

|

|

|

Question: I'm going to build a new rig when the 1080 launches. My old setup uses a GTX 660 and I thought about keeping it as a dedicated PhysX card. I searched for a lot of benchmarks online concerning dedicated PhysX, and it seems to be a lot more complex than I originally thought it would be. Apparently a dedicated PhysX card only boosts frame rate if it is not too far behind your main card in power.

So my question is: would a GTX 660 likely bottleneck a GTX 1080? If it does, would it perhaps make sense to get a 1070 or a future 1060 instead and run the 660 as a PhysX card with one of those?

|

|

|

|

teagone posted: Really hope Polaris delivers close to 1070 performance at the $200 price point.

Really hope Vega delivers HBM2 mems onboard by October at $500

|

|

|

|

Mikojan posted: dedicated physX card.

don't do this, there's like 0.2 games per year that will a) not run at 200fps regardless b) use physx

|

|

|

|

Mikojan posted: Question:

Hey man, it's 2016 now.

|

|

|

|

Guess I'm behind the times. Makes sense that all the stuff I see about dedicated PhysX is dated articles and videos. Thanks for pointing it out to me!

|

|

|

|

|


|

Mikojan posted: Guess I'm behind the times

I used a 660 with my 970 when it first came out and it made physx benchmarks give bigger numbers, but it was dumb so I sold it to my brother.

|

|

|