|

Avoiding O(N) factors is already useful enough. It's the log(N)'s you've got to watch. An O(log N) that hops through pointers or disk blocks is one thing, but if it hops through bits of a word, that's another.

|

|

|

|

|

| # ? May 14, 2024 16:08 |

|

Simulated posted:Summing the elements in a linked list and summing the elements in an array are both O(N) in theory; they should both execute around the same number of instructions. In reality the array will be faster and the larger your dataset (or the more spread out the linked list nodes) the faster the array will be. For a 1 GB dataset the array can absolutely kill the linked list, in some scenarios by an order of magnitude. How useful was knowing they are both O(N) if one takes 10x as long to execute? Now I'm wondering if there's some point at which the linked list starts to win out overall just because you don't have to allocated a gigantic contiguous block of memory for it. Assuming the task looks something like "create datastructure, load data into datastructure, perform O(N) operation on datastructure, release allocated memory". (And nevermind that in most cases you'd do the "perform operation" as you load data)

|

|

|

|

I've seen a heuristic for whether a linked list makes sense of: if you are adding and removing in the middle 10x as often as you are performing a full traversal, the linked list is better.

|

|

|

|

hobbesmaster posted:This is why a common interview question is to explain when you shouldn't use quick sort. I know a few reasons why you wouldn't use quicksort, even a modern, randomized, three way partition quicksort,(you want stability etc) but I don't know the answer to this interview question with respect to running time. My best guess is that you might know certain properties of the input that could make another approach(like the Timsort used by Java, iirc) more efficient, allowing for extra memory use. Am I on the right track? Fergus Mac Roich fucked around with this message at 02:16 on Oct 16, 2016 |

|

|

|

TooMuchAbstraction posted:Now I'm wondering if there's some point at which the linked list starts to win out overall just because you don't have to allocated a gigantic contiguous block of memory for it. Assuming the task looks something like "create datastructure, load data into datastructure, perform O(N) operation on datastructure, release allocated memory". (And nevermind that in most cases you'd do the "perform operation" as you load data) Here is a Swift library that uses B-trees. I attached his performance graph showing inserting into a sorted array at O(n^2) is an order of magnitude faster than a red-black tree at O(N log N) up to a certain size because the array is just so drat amenable to prefetch, branch prediction, and fits in L1/L2. It takes 100,000 items for the red-black tree to be faster. B-trees (not B+trees) are a reasonable compromise because each node's array holds quite a few items, but you don't need to allocate one massive array when the datasets get large and the concatenation performance isn't so degenerate. A side benefit for copy-on-write implementations is you can continue to share non-mutated parts of the tree.

|

|

|

|

UraniumAnchor posted:A bit old but it popped up in my Twitter feed today and it seems relevant: I'm liking the updates at the end. quote:Update: http://technicaltalkaboutit.blogspot.com.au/2016/10/what-to-expect-interviews-and-google.html a Goggle employee blog post successfully posted on LinkedIn with incoherent junk vocabulary starting from the middle of the text (probably aimed at lowering my post's rank in search engines) written by Marcia Pinheiro who looks like a Google Internet Shill (in orange, text related to me and Google but favoring Google, in red, the unrelated offending vocabulary) (all emphasis his)

|

|

|

|

Simulated posted:Here is a Swift library that uses B-trees. I attached his performance graph showing inserting into a sorted array at O(n^2) is an order of magnitude faster than a red-black tree at O(N log N) up to a certain size because the array is just so drat amenable to prefetch, branch prediction, and fits in L1/L2. It takes 100,000 items for the red-black tree to be faster. Neat, thanks for doing this analysis!

|

|

|

|

Axel Rhodes Scholar posted:I'm liking the updates at the end. Ok, the end of that is a little strange, but I take it that english is probably not her native tounge... also it's pretty funny that he's so mad that he has to load up his image editor and underline stuff. Someone mentioned that array problem... what he's doing is casting the pointer to a 64 bit integer a la *(uint64_t*), since the popcnt of 4 shorts is the same as one 64 bit integer, saving some movs I think. He didn't make it too clear to someone who probably doesn't write c code every day. And like someone else mentioned, popcnt is usually the right answer. (Unless it's a 68k, I'm bad at reading I guess.) Are modern C compilers able to infer this? I'm asking because I really don't know. Either way, I think I'm half this guys age, and I can probably answer all of those questions without making the interviewer want to hit me. (I didn't notice before, but he actually states what half his age is exactly ...) dougdrums fucked around with this message at 12:16 on Oct 16, 2016 |

|

|

|

On Linux I'd use ffs from Glibc, since it'll normally become the x86 instruction or a compiler builtin.

|

|

|

|

TooMuchAbstraction posted:Now I'm wondering if there's some point at which the linked list starts to win out overall just because you don't have to allocated a gigantic contiguous block of memory for it. Assuming the task looks something like "create datastructure, load data into datastructure, perform O(N) operation on datastructure, release allocated memory". (And nevermind that in most cases you'd do the "perform operation" as you load data) One time on a project I had to switch a structure from an array to a linked list because it was so big that there wasn't a single spot in the heap that could fit it all. (Well I assumed that was the problem, anyway. The allocation failed with std::vector but not std::list.)

|

|

|

|

Axel Rhodes Scholar posted:I'm liking the updates at the end. I've seen that Pinheiro person before, she's a regular linkedin spammer, and even a nominal bing.com search brings up her other blog: https://drmarciapinheiro.wordpress.com/about/ and she looks like some kind of crank.

|

|

|

|

rjmccall posted:I have no idea what you're trying to say here. Xarn said that walking a linked list is N log N; Fergus correctly pointed out that it's N log K, where K is the size of the address space; and I added that if you're including this "size of the address space" factor, you need to apply it to everything instead of singling out linked lists. Indexing into an array is O(log K). You can try to make a locality argument to drop this factor because of translation caches, but now you're talking about a sequence of operations, because reading a single array element at a random index does not in fact have some sort of inherent, magical locality. And if you're walking an entire array, you are still going to have to translate a linear number of pages at O(log K) per translation, even if you're only falling into that O(log K) expensive case 1/whatever-th as often as you would if you were walking a linked list. Yeah, I should've specified that random access in general is not O(1), not just for the case of lists. However I stand by the fact that sequential walk through array is O(N) (ok, N + K), because only the first access is random, where the other ones are easily predictable.

|

|

|

|

UraniumAnchor posted:A bit old but it popped up in my Twitter feed today and it seems relevant: I can sorta sympathize with getting an interviewer who checks answers by comparing them with the magic text on his paper being pain, but based on his writing I really, really wouldn't want to work with that guy.

|

|

|

|

Was the interviewing process bad? Yes. Did it successfully weed out an unqualified candidate? Yes. Seems good to me.

|

|

|

|

Coffee Mugshot posted:Was the interviewing process bad? Yes. Well I never said that the interviewee wasn't a horror too.

|

|

|

|

His only fault is that he's old

|

|

|

|

Volte posted:He doesn't even answer the quicksort question (merely states that the question is invalid) In fairness, "What's the best sorting algorithm" is a bad question for a Recruiter Bot screen, while being a potentially great question for a technical interview, because it depends on a lot. Most in-use general purpose sorting algorithms are hybrid sorts anyway. While quicksort-based algorithms are slightly more common (and avoid quicksort's nasty worst case), mergesort based ones are definitely not unheard of. In general, I'd expect both mergesort and quicksort to be a good off-the-cuff answer to the question, not just quicksort. Noting, of course, the standard caveat that this is just for blobs of arbitrary real world data and if you know the specifics of your data's format other algorithms may be more suitable. This is again assuming the interview was presented faithfully and such. Linear Zoetrope fucked around with this message at 10:04 on Oct 17, 2016 |

|

|

|

Jsor posted:In fairness, "What's the best sorting algorithm" is a bad question for a Recruiter Bot screen, while being a potentially great question for a technical interview, because it depends on a lot. Most in-use general purpose sorting algorithms are hybrid sorts anyway. While quicksort-based algorithms are slightly more common (and avoid quicksort's nasty worst case), mergesort based ones are definitely not unheard of. In general, I'd expect both mergesort and quicksort to be a good off-the-cuff answer to the question, not just quicksort. Noting, of course, the standard caveat that this is just for blobs of real world data and if you know specifics other algorithms may be more suitable. I think the point is that the interviewer had no knowledge and was just using the answers on the sheet, so unless the person said exactly what was on the sheet they failed. The questions are not bad, the way they were asked and the lack of understanding of the subject was the horror. From the article it was obvious that the interviewee was possibly overqualified for the position but the interview drone had no idea what he was saying just that it did not match the answer on his sheet.

|

|

|

|

hackbunny posted:His only fault is that he's old Hah yeah to be honest in 25 years I'll probably be ranting on my holoblog about how the interviewer at the TRUMP CyberRealm was just reading poo poo off of his padd even though I'm totally qualified for Prince of Engineering or whatever. I can't really blame him for it.

|

|

|

|

TheresaJayne posted:The questions are not bad, the way they were asked and the lack of understanding of the subject was the horror. From the article it was obvious that the interviewee was possibly overqualified for the position but the interview drone had no idea what he was saying just that it did not match the answer on his sheet. Yea, if that "returns an inode" thing had been on a college exam any decent moderator would have given the paper author a clip round the ear. Any wording error like that and people are going to think it's a trick question. Heck, when he said "count the bits" I couldn't help but think "um, you mean count the 1 bits, right? Otherwise you just multiply 10000 by 16.."

|

|

|

|

|

|

|

|

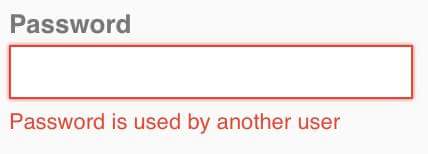

Name and shame.

|

|

|

|

I hope there's also a 7 character maximum for the password. And passwords may only contain lowercase letters and numbers. You know, like my bank

|

|

|

|

Volte posted:I hope there's also a 7 character maximum for the password. And passwords may only contain lowercase letters and numbers. You know, like my bank That sounds suspiciously like my own bank, except the password is set to 8 characters and is autogenerated for you. At least every single action requires 2FA from SMS I guess.

|

|

|

|

No way.

|

|

|

|

|

I'm going to guess someone's nephew set it up, after reading a tutorial on mysql put a unique constraint on the password field to OPTIMIZE FOR SPEED!!! Too bad they missed the tutorial on not storing plaintext passwords you idiot.

|

|

|

|

xzzy posted:Too bad they missed the tutorial on not storing plaintext passwords you idiot. You don't need to store in plaintext to check if another user is using the password.

|

|

|

|

xzzy posted:I'm going to guess someone's nephew set it up, after reading a tutorial on mysql put a unique constraint on the password field to OPTIMIZE FOR SPEED!!!

|

|

|

|

But then some other user could just be using a password that hashes the same!

|

|

|

|

I will continue to assume that anyone who requires a password to be unique among all users is not hashing them, because no one that dumb would even know the terms "hash" and "salt" apply to computers.

|

|

|

|

My bank disables paste on their password fields for maximum security

|

|

|

|

The thing that bugs me about bank security is how they can get away with so many bad password rules for retail banking sites while everything after that point (until it reaches some 45-year old COBOL program) is second in paranoia only to DoD standards for encryption and security. It seems extremely short-sighted and hypocritical to be so tight on security in places perhaps less susceptible to massive attack vectors.

|

|

|

|

They probably don't want to constantly have to deal with old people who forgot their online banking password, so they practically force it to be their son's name or something.

|

|

|

|

Sedro posted:My bank disables paste on their password fields for maximum security Too bad they haven't come up with a way to deal with the fact that the browser is under the control of the user. http://www.prioritized.net/blog/re-enabling-password-pasting-on-annoying-web-forms

|

|

|

|

xzzy posted:Too bad they haven't come up with a way to deal with the fact that the browser is under the control of the user. More effort than I should need especially when I'm using a probably more secure method than typing it from memory.

|

|

|

|

necrobobsledder posted:The thing that bugs me about bank security is how they can get away with so many bad password rules for retail banking sites while everything after that point (until it reaches some 45-year old COBOL program) is second in paranoia only to DoD standards for encryption and security. It seems extremely short-sighted and hypocritical to be so tight on security in places perhaps less susceptible to massive attack vectors. The thing that bugs me about bank security is how every bank's website I've ever seen is using plain HTTP. Not the customer portal, that's always HTTPS, and that's the part that counts, I guess. But why not just secure the entire operation from top to bottom?

|

|

|

|

from twitter this morning:

|

|

|

|

qntm posted:The thing that bugs me about bank security is how every bank's website I've ever seen is using plain HTTP. Not the customer portal, that's always HTTPS, and that's the part that counts, I guess. But why not just secure the entire operation from top to bottom? Apparently not true anymore because I just visited all the major banks I could think of and they all redirect to https.

|

|

|

|

Hah, just visited my bank's website through https and it redirected to http. It's a small bank in a 1k pop town though so I can't be mad.

|

|

|

|

|

|

| # ? May 14, 2024 16:08 |

|

Then again, most of the population has piddly little bits of cash in their accounts so maybe for retail banking it's more cost-effective to keep PEBKAC problems down than to risk millions of $150 balances to hackers. Most of the big credit card and bank heists have been conducted against their internal networks rather than Internet endpoints. A liberal interpretation of Jython I suppose.

|

|

|