
part 2 of the chips n cheese teardown is out: https://chipsandcheese.com/2022/11/08/amds-zen-4-part-2-memory-subsystem-and-conclusion/

random thought-oid: on zen3, v-cache didn't change bandwidth directly; L3 bandwidth is still the same. You get a higher hit rate, which indirectly increases effective bandwidth (especially for large working sets), but it's just making the existing L3 bigger, and the L3 itself is not any faster. RDNA3 supposedly moved in a direction where the infinity cache (L3) didn't get bigger but did get higher-bandwidth (there are increases in L0, L1, and L2 as well, of course).

I wonder if AMD is going to do the zen3 thing again, where it's the same cache structure just bigger, or whether they're going to move towards treating it as an L4 or side cache, with a separate/larger port to get more bandwidth out of it. Or honestly they could probably build the chip to support both modes of operation and just assemble 2 different versions for different (server) applications. Skylake/Broadwell/Zen3 showed it doesn't have to be one or the other; the cache is very modular and amenable to plugging in like that. Pick the one your task needs, bandwidth or working set. Or a double-high stack that does both, one underneath and one above, to get a fast side cache as well as a big L3? would be funny.

I have said a few times that I think AVX-512 workloads may benefit more than average from v-cache in general, both in the sense of vector workloads having a larger working set and in potentially liking more bandwidth/reduced average latency to memory/etc. But chips n cheese thinks the DDR5 bandwidth is not that great and falls short of the theoretical limits, and I wonder if a bunch more cache (or faster cache) might help that by reducing trips to memory even for non-AVX workloads.

Paul MaudDib fucked around with this message at 20:45 on Nov 8, 2022
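A rough Python sketch of the hit-rate point in that post: a bigger cache with the same raw bandwidth still raises the blended bandwidth a workload sees. The bandwidth figures are made-up placeholders, not measured Zen 3 or Zen 4 numbers, and the model (every byte comes either from L3 or from DRAM, with no overlap) is a simplifying assumption.

```python
def effective_bandwidth(hit_rate, cache_bw, mem_bw):
    """Blended bandwidth (same units as the inputs) when a fraction
    `hit_rate` of the data comes from cache and the rest from memory."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    # Time to move one unit of data is the weighted sum of the two paths.
    time_per_unit = hit_rate / cache_bw + (1.0 - hit_rate) / mem_bw
    return 1.0 / time_per_unit


# Hypothetical numbers: 600 GB/s L3, 60 GB/s DRAM.
L3_BW, DRAM_BW = 600.0, 60.0
for hit_rate in (0.50, 0.70, 0.90):
    bw = effective_bandwidth(hit_rate, L3_BW, DRAM_BW)
    print(f"hit rate {hit_rate:.0%}: {bw:.0f} GB/s effective")
```

In this toy model the L3 never gets faster, yet the effective figure climbs as the hit rate does, which is the "bigger, not faster" case. A faster side cache would instead correspond to raising `cache_bw`.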


| # ? May 30, 2024 11:22 |


mdxi posted:Got a weird one here. These are synthetic benchmark numbers, but they are backed up by what I see in real-world workloads:


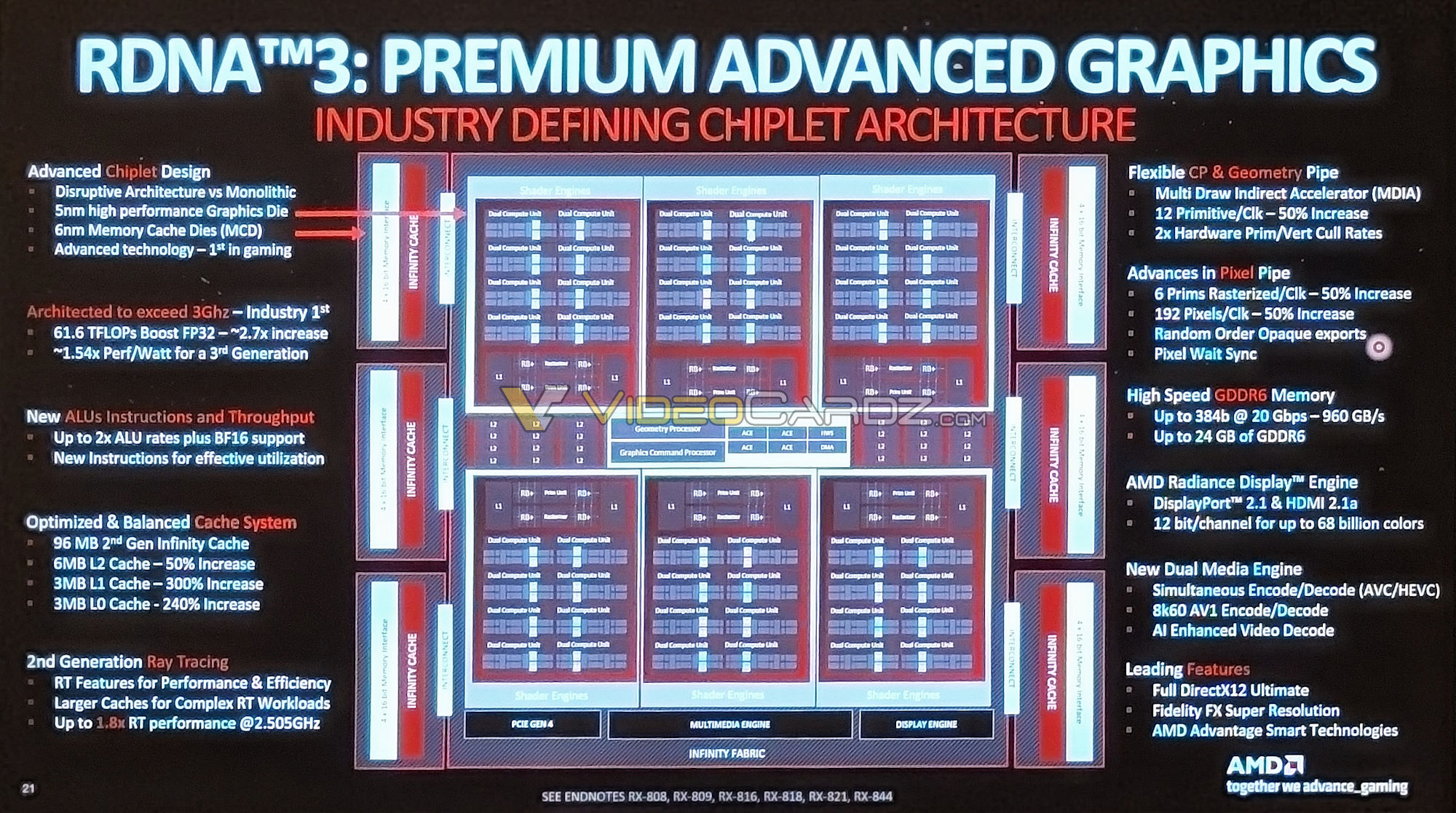

Paul MaudDib posted:part 2 of the chips n cheese teardown is out: https://chipsandcheese.com/2022/11/08/amds-zen-4-part-2-memory-subsystem-and-conclusion/

Since you mentioned L0, has AMD said what the L0 cache in RDNA 3 is? A cursory search didn't turn up anything.


ConanTheLibrarian posted:That's a good article. it was discussed last week in the GPU thread:


I saw that pic, but it doesn't show where the L0 is or what it's doing. The odd thing is that going by the percentage increase figures on the left, the L0 used to be bigger than the L1. Perhaps those little dual compute units have their own caches.


ConanTheLibrarian posted:I saw that pic, but it doesn't show where the L0 is or what it's doing. The odd thing is that going by the percentage increase figures on the left, the L0 used to be bigger than the L1. Perhaps those little dual compute units have their own caches.

Yeah I think that's what it is, the RDNA2 reference says each WGP has its own L0 cache, a WGP being a pair of CUs


MH Knights posted:A 5800X3D would be a big upgrade if I currently have a 2600X correct?

I did this exact upgrade 2 weeks ago, echoing others that it's a very noticeable upgrade for gaming. This thing runs MUCH warmer than my 2600X, though. Still deciding if my carryover Arctic 34 Duo is going to be a permanent solution for cooling; I might try undervolting before looking at AIOs.


homeless posted:I did this exact upgrade 2 weeks ago, echoing others that it's a very noticeable upgrade for gaming.

I wouldn't worry about the temp too much as long as it isn't getting throttled or performing well below par.


homeless posted:I did this exact upgrade 2 weeks ago, echoing others that it's a very noticeable upgrade for gaming.

FWIW, I am still using the same cooler (be quiet! Dark Rock Slim) I used with the 3600X I upgraded from, but due to thermals I upgraded my case (to a Corsair 5000D Airflow) and things have been fine since doing that. I still like my old case (Deepcool Macube 310) but it couldn't handle the 5800X3D and a 3080 12GB unless I removed the front panel, which turned it into a jet-powered dust vacuum. Now the Macube 310 houses my old 3600X and old 2070 Super and it does fine in the limited testing I've done.


homeless posted:I did this exact upgrade 2 weeks ago, echoing others that it's a very noticeable upgrade for gaming.

A quick bit of googling says the 5800X3D has a rated TDP of 105 watts, and the cooler you have is rated to handle 210 watts. So that cooler should be able to handle that chip with no issues.

How much warmer? Are you maxing it out with light gaming? What's the temp at idle? It could be your case too, as CaptainSarcastic ran into.

I'd first check that it is mounted well, and probably do a re-paste. If you do, take a look at the pattern of the paste on the CPU. Are there any high or low spots? Is it unevenly spread? It could be a slightly off mount, bad paste application, or bad paste itself. A drop of thermal paste and a few minutes to re-mount the cooler is a lot cheaper than a new cooler.


The 5800X3D runs warmer than any other AM4 CPU due to the 3D v-cache obstructing thermal transfer from the cores to the IHS. It is not unexpected to see an increase to your operating temperatures after an upgrade if you're not also upgrading the cooler.

Also, cooler ratings are often BS. There's no way an Arctic 34 Duo would do well with 210W. I would expect thermal throttling with that kind of CPU, depending on the type of load.

Dr. Video Games 0031 fucked around with this message at 02:40 on Nov 9, 2022


Dr. Video Games 0031 posted:The 5800X3D runs warmer than any other AM4 CPU due to the 3D v-cache obstructing thermal transfer from the cores to the IHS. It is not unexpected to see an increase to your operating temperatures after an upgrade if you're not also upgrading the cooler.

Yeah, I forgot to mention that the 3D version will run hotter than the non-3D one. They said it was running much warmer, which is why I was covering the bases.

I will agree a bit on the rating. If it is some no-name cooler spouting a number, I'd be hesitant. But Arctic has made a pretty good name for itself, and if they say it will handle 210 watts with a single-tower heat pipe setup, I'd be inclined to believe it. And the 5800X3D is half what the cooler "can" handle, hence my questions.

E - For some reason I thought the Duo was dual tower, not dual fan. Oops.

Koskun fucked around with this message at 03:53 on Nov 9, 2022


The Arctic 34 Duo is a single-tower cooler. I'd expect it to be able to cool a 5800X3D, but it will be warmer and louder-running than when it was paired with a 2600X, which uses less power and is less of a challenge to cool. I would also recommend double-checking the cooler mount and paste spread if the CPU is running dangerously hot, but we need more information than "much warmer."


Without a good AIO/giant heavy tower it's not unexpected for the 5800X3D to sit near its thermal limit at 90C in all-core workloads. In AVX workloads it'll hit 90C even with a water loop. It's just the way it is.


Thanks for the responses to my "what's up with these bench scores?" question. I should have been more clear that my concern wasn't that the score was X instead of Y, but rather that the 3950 was outperforming the 5950 in multicore when everything else was equal. (That includes, for the person who asked, the coolers. All are using noctua NH-C14S.) Still, y'all posting your scores and mentioning some other things led me to do a bit more lateral thinking. The most reasonable explanation that I can come up with is: while the 5950 can be goosed to higher clocks and has an IPC advantage, the 3950 simply has a better power/perf curve. Nothing in chip design is free, and since they're built on the same process node, the architectural improvements had to have a cost somewhere. I guess the power curve is where it ended up. This does make me look forward, even more, to the 7000 series, since the IOD will finally use less power. It's probably gonna be 3-5 months before I make that upgrade though.


Dr. Video Games 0031 posted:The Arctic 34 Duo is a single-tower cooler. I'd expect it to be able to cool a 5800X3D, but it will be warmer and louder-running than when it was paired with a 2600X, which uses less power and is less of a challenge to cool. I would also recommend double-checking the cooler mount and paste spread if the CPU is running dangerously hot, but we need more information than "much warmer."

I have a 34 Duo on my 5800X and temps peak at just over 80C. Definitely check the mount, because I mounted mine well and I'm still uncertain about it half the time because it's a pain to mount evenly.


how much do you folks tend to adjust chassis fans, intake/exhaust, compared to the cpu fan itself? i still don't know how much they correlate to cooling the cpu, especially when it's a case with good airflow in the first place


mdxi posted:Still, y'all posting your scores and mentioning some other things led me to do a bit more lateral thinking. The most reasonable explanation that I can come up with is: while the 5950 can be goosed to higher clocks and has an IPC advantage, the 3950 simply has a better power/perf curve. Nothing in chip design is free, and since they're built on the same process node, the architectural improvements had to have a cost somewhere. I guess the power curve is where it ended up.

Since a whole lot of stuff for peak speed is now in the hands of the CPU itself and you are only guaranteed a minimum, you may just have a particularly good 3950X and a mediocre 5950X.

(Also, since you said "same mobo", do you mean the literal same mobo, as in you did an upgrade and these were your before and after scores? If so there are a couple of tricky things that could be mucking up performance. One, did you do a full BIOS clear for the new CPU? Two, sometimes the heatsink needs a little time to work out the excess paste for best cooling.)

kliras posted:how much do you folks tend to adjust chassis fans, intake/exhaust, compared to the cpu fan itself? i still don't know how much they correlate to cooling the cpu, especially when it's a case with good airflow in the first place

I adjust case fans based on the mobo "system" temperature, except for the one right behind the CPU cooler, which is tied to CPU temp but with max hysteresis. However, my case is not a high-airflow one.

The goal for case fans is enough fan speed to keep interior temps low. With a mesh-front high-airflow tower case and an adequate number of fans, you don't need a lot of fan speed to keep the ambient low. Static RPM is often just fine. You can get additional cooling by moving so much air that everything turns into a heatsink; that's why putting a box fan on the side of your case drops temps a lot. But that's silly.

Also, the component that often gets the most benefit from case fan tweaks is the GPU rather than the CPU. The CPU heatsink tends to have plenty of free space around it, while the GPU can have some dead air because it's mildly boxed in on 5 of 6 sides. Having a case fan that is pushing air into that space under the GPU is a big help.
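For anyone unsure what "hysteresis" buys you on a fan tied to CPU temp: the fan only changes speed once the temperature has crossed a threshold by some margin, so short spikes don't make it rev up and down. A minimal Python sketch of the idea follows; the class name, thresholds, and duty values are hypothetical, and real BIOS fan controls usually implement this as a delay or temperature margin on a full curve rather than two fixed speeds.

```python
class HysteresisFan:
    """Two-speed fan: switches up at `high_c`, back down only at `low_c`."""

    def __init__(self, low_c=60.0, high_c=75.0, idle_pct=30, load_pct=80):
        if low_c >= high_c:
            raise ValueError("low_c must be below high_c")
        self.low_c, self.high_c = low_c, high_c
        self.idle_pct, self.load_pct = idle_pct, load_pct
        self.duty = idle_pct  # start at the quiet setting

    def update(self, temp_c):
        if temp_c >= self.high_c:
            self.duty = self.load_pct
        elif temp_c <= self.low_c:
            self.duty = self.idle_pct
        # Between the two thresholds the previous duty is kept.
        return self.duty


fan = HysteresisFan()
readings = [50, 70, 76, 70, 65, 59, 70]  # made-up CPU temps in C
print([fan.update(t) for t in readings])
```

The wider the gap between `low_c` and `high_c`, the less the fan reacts to bursty CPU temps, which is roughly what "max hysteresis" is going for.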


kliras posted:how much do you folks tend to adjust chassis fans, intake/exhaust, compared to the cpu fan itself? i still don't know how much they correlate to cooling the cpu, especially when it's a case with good airflow in the first place

I have my front intake fans on one header, my exhaust fan on another header, and have them tied to CPU temp. To avoid the annoying spin-up during normal use I have the curve set to run a little higher than needed until the machine really is under load, then go to high RPMs pretty steeply. I made the CPU and system fan curves all pretty similar. My GPU temps seem to remain decent with this setup, although I could probably dig into that a little further - I haven't had a pressing need to since upgrading my case, though. This case has a mesh front and top, very open back, and 1x140mm + 2x120mm fans in the front, and 1x120mm as exhaust.
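The curve shape described in that post (nearly flat and a bit generous at low temps, then steep under real load) can be sketched as a piecewise-linear lookup. This is only an illustration in Python: the curve points are invented, and `fan_duty` is a hypothetical helper, not anything a motherboard vendor ships.

```python
def fan_duty(temp_c, curve):
    """Linearly interpolate fan duty (%) from (temp_c, duty) points.

    `curve` must be sorted by temperature with strictly increasing temps.
    """
    if temp_c <= curve[0][0]:
        return float(curve[0][1])
    if temp_c >= curve[-1][0]:
        return float(curve[-1][1])
    for (t0, d0), (t1, d1) in zip(curve, curve[1:]):
        if t0 <= temp_c <= t1:
            return d0 + (d1 - d0) * (temp_c - t0) / (t1 - t0)


# Hypothetical curve: almost flat up to 65C, then ramps steeply to 100%.
CURVE = [(30, 40), (65, 45), (80, 100)]
for temp in (40, 65, 72, 85):
    print(f"{temp}C -> {fan_duty(temp, CURVE):.0f}% duty")
```

Keeping the low end nearly flat is what avoids the constant spin-up noise during normal desktop use.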


Koskun posted:A quick bit of googling says the 5800x3D has a peak draw of 105 watts, and the cooler you have can handle 210 watts. So that cooler should be able to handle that chip no issues.

Thought my reply was in the PC Building thread.

The 5800X3D is idling at ~46C, up from around 35C on my 2600X. Nothing else has changed. Gaming-wise, I never even heard my computer until I changed the processor, and it never exceeded the mid 70s. I don't have a direct comparison in games except for Overwatch 2 (no large difference), but I did notice temps cranked up while playing A Plague Tale: Requiem; it's gorgeous and demanding, so I get that. It touched 90C last night and my tower is doubling as a space heater.

I have a Meshify 2 Compact with two 140mm fans on the front and one 120mm exhaust, all Noctua. Will def try to repaste this weekend, thanks for the advice everyone.

Time Spy comparisons:
2600X - 5,850 CPU Score - 64C
5600X - 11,222 CPU Score - 80C


cinebench is a good quick way to see what your sustained max temps are likely to be to adjust your max fan setting for. low-mid 80s are fairly normal in it


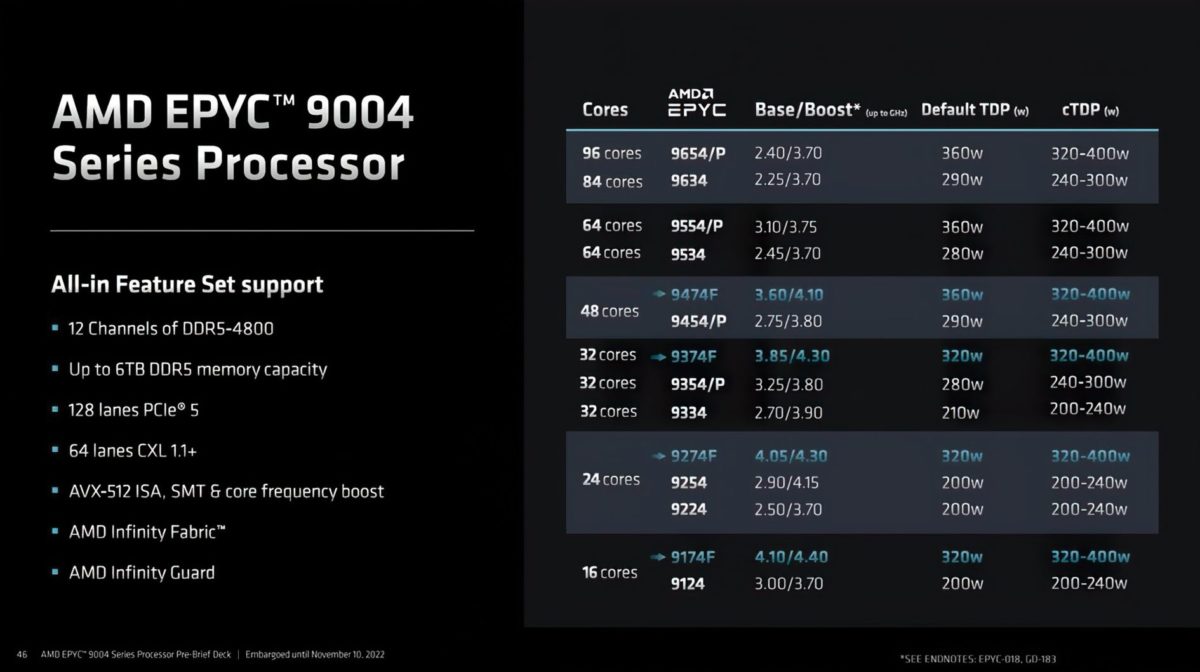

Epyc 9004 series reviews are out https://www.servethehome.com/amd-epyc-genoa-gaps-intel-xeon-in-stunning-fashion/

quote:The AMD EPYC 9004 series, codenamed "Genoa" is nothing short of a game-changer. We use that often in the industry, but this is not a 15-25% generational improvement. The new AMD EPYC Genoa changes the very foundation of what it means to be a server. This is a 50-60% (or more) per-socket improvement, meaning we get a 3:2 or 2:1 consolidation just from a generation ago. If you are coming from 3-5 year-old Xeon Scalable (1st and 2nd Gen) servers to EPYC, the consolidation potential is even more immense, more like 4:1.
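The consolidation ratios in that quote are just the per-socket improvement written as a fraction: +50% means one new socket does the work of 1.5 old ones, i.e. 3:2. A small Python sketch of the arithmetic, assuming throughput scales linearly with the quoted percentage (the helper name is made up for illustration):

```python
from fractions import Fraction


def consolidation_ratio(improvement_pct):
    """Return (old_servers, new_servers) for an integer per-socket
    improvement in percent, reduced to lowest terms."""
    ratio = Fraction(100 + improvement_pct, 100)
    return ratio.numerator, ratio.denominator


for pct in (50, 60, 100, 300):
    old, new = consolidation_ratio(pct)
    print(f"+{pct}% per socket -> {old}:{new} consolidation")
```

By the same arithmetic, the quoted 2:1 corresponds to a +100% improvement and 4:1 to +300%, so those figures sit in the "or more" part of the claim rather than the 50-60% part.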


homeless posted:Thought my reply was in the PC Building thread

I did an underclock on my 3080FE to cut the temps on my 5800X3D (this helped the most out of my available options), but I had to put it back to stock until I have time to tinker with it again because folding@home was crashing on me, so I guess it's not stable anymore. I posted a guide in here a while back if you are interested. Overclockers.com had a thread I follow, and I also posted some helpful BIOS settings to get the temps down for about the same performance.


Malloc Voidstar posted:Epyc 9004 series reviews are out

drat


homeless posted:Thought my reply was in the PC Building thread

I checked my 5800X3D temps last night, and my idle is like 10 degrees cooler than yours, at least. Playing Far Cry 6 my highest temp was 85.5C, and I think that might have been during initial load because I reset the readings while playing, checked back, and the max temp never broke 80C while playing the game for a while after that.

You probably do want to look into your cooling a bit more, if only because your idle temps seem high. I should probably dig further into my GPU temps, as I'm less familiar with those, but AFAICT my 3080 12GB is not absolutely frying itself.


Malloc Voidstar posted:Epyc 9004 series reviews are out

15% IPC, AND frequencies gone up, AND power consumption gone down? Literally down to 1 watt per core at this point.

Once again, it appears that the bulk of the power consumption remains the I/O die. I'm betting the entire APU stack is going to be monolithic again; if AMD could get their I/O die power consumption down, they'd be crowing about it from the rooftops here.

edit: Surprised we didn't see any CDNA compute poo poo at today's event, but maybe that would have been tipping their hand too much to Nvidia?

SwissArmyDruid fucked around with this message at 22:59 on Nov 10, 2022


I had not heard of CXL before, seems cool as heck. Whole package looks like a game changer for AMD.


lamentable dustman posted:I had not heard of CXL before, seems cool as heck. Whole package looks like a game changer for AMD. Truga posted:speaking of ram


If you're having issues keeping the 5800X3D cool, look into PBO2 Tuner. It seems like many chips can handle the max offset of negative 30. Obviously you need to deal with potential instability and tweaking if that happens, but I'm running the X3D with an old Noctua 12S single fan and a 6900 XT in a terrible cheap case and topping out at 80-82C with near max frequency going.


setting it up to be automated on startup is such a pain in the rear end though really wish we had better software for it


make a shortcut to it, add the offset values to the "Target" line in the shortcut's properties, and stick it in "C:\Users\User\AppData\Roaming\Microsoft\Windows\Start Menu\Programs\Startup". i think that should work


Kevin Bacon posted:make a shortcut to it, add the underclock values in the "target" line in the shortcuts properties, stick it in "C:\Users\User\AppData\Roaming\Microsoft\Windows\Start Menu\Programs\Startup"

I would guess the program needs admin privileges to do its thing. To run an admin app at startup, the best method is to use Task Scheduler and create a task for it. Tasks can be set to run elevated without popping a UAC prompt.


https://twitter.com/harukaze5719/status/1591778411839909895?s=46&t=SEdC4YhDn2LPP0CU9uthCA If true, lack of a 7900x3d/7950x3d is a bummer. Guess they gotta price protect those GenoaX parts. The relative CPU stagnation for 2023 for both Intel and AMD matches other prior rumors.


Hmm, that's unfortunate. I hope these rumors solidify pretty quickly, if true, so that I can go ahead and upgrade. So far I haven't, because I'm waiting on a potential 7950X3D.


Don't give a poo poo, give me the APU parts already you bastarrrrrrrrds


That sucks if true, i was really wanting to upgrade from my 4960 non-k. I wanted to get in on that x3d wagon.


And out of curiosity, what would you do with a 7950X3D?


SlapActionJackson posted:And out of curiosity, what would you do with a 7950X3D?

Eat into epyc sales?


I mean, I know that there are non-gaming uses for cache (not that I can identify any off the top of my head), but the X3D chips are primarily going to be aimed at gamers. So it makes sense to only do single CCD versions for now, considering the dual-CCD chips aren't the best for gaming.

Dr. Video Games 0031 fucked around with this message at 00:06 on Nov 14, 2022


v-cache is good for gaming and the 7900X and 7950X are completely pointless for gaming. not hard to understand why they're doing 7600X3D and 7700X3D/7800X3D instead of 7950X3D.
