|

Get Odin Inspector. Otherwise your questions are hopelessly broad. There are a lot of assets on the store.

|

|

|

|

|

| # ? May 23, 2024 16:43 |

|

I'm being vague on purpose wrt tools and such. I like to see what people have been leaning on. I got Odin Inspector (and Validator) already. Some other stuff:

1. NoesisGUI (although this has its own licensing junk so isn't really applicable to any kind of sale)
2. DOTween Pro
3. A* Pathfinding Project Pro
4. Editor Console Pro
5. Log Viewer
6. InControl

I was curious about a human model asset that is easy to break apart and examine so I can get a take on what "professional" stuff is like and what I should be targeting with modeling and coding for animated stuff. I've been procrastinating on that and just been using placeholder boxes, and I think my resolution is to start actually doing something with some semblance of graphics.

|

|

|

|

Hello! I'm currently trying to implement a jittered version of the quasi-random R2 sequence in Godot, as described in the linked article (the pseudo-code is way down at the bottom):

A simple method to construct isotropic quasirandom blue noise point sequences | Extreme Learning

I've run into an issue where performance rapidly deteriorates as the input x and y values increase. In my use case these will reach into the thousands -- at which point everything has completely choked. This is what the function looks like:

[code block not preserved]

|

|

|

|

anatomi posted:
Hello!

Is just doing the computation directly with (double-precision?) floating-point math functions not accurate enough? I didn't read the whole article. Replacing the entire function with `fmod(pow(1.0 + 1.0/x, y), 1.0)` seems to give close-enough results. But I assume it's being calculated in this weird way for a good reason?

Edit: https://godbolt.org/z/E8e3j4TK3 (C++ but w/e)

seiken fucked around with this message at 13:16 on Nov 26, 2022

|

|

|

seiken posted:
Is just doing the computation directly with (double-precision?) floating-point math functions not accurate enough? I didn't read the whole article. Replacing the entire function with `fmod(pow(1.0 + 1.0/x, y), 1.0)` seems to give close-enough results. But I assume it's being calculated in this weird way for a good reason?

You can use C++ in Godot for more performance-heavy code, so this might be preferable. https://docs.godotengine.org/en/stable/tutorials/scripting/gdnative/gdnative_cpp_example.html

|

|

|

|

Megazver posted:
You can use C++ in Godot for more performance-heavy code, so this might be preferable.

I assume you can call `pow` and `fmod` from Godot, and that's all the faster version does, so the source language shouldn't really matter, I think.

|

|

|

|

anatomi posted:
With x and y at 202 and 203 respectively the whole thing takes 1,200-1,600 milliseconds. I've pinpointed the problem to the subloop

Using fmod and pow is guaranteed to break for precision reasons at considerably lower inputs than the numerical trickery approach does. The paper mentions failing at x * y > 70, and they're using Python so presumably they mean with doubles. Even if it's floats you'll presumably just last to x * y > 140 instead.

The main thing that strikes me is that you have an x value of 202. x should be the dimension of the noise + 1, if generalizing the 2D version in the paper. I'm not sure that the algorithm generalizes to 201-dimensional noise. It's almost certainly not tested that far.

E: Also, as the paper mentions, if you want to make their jitter PRNG go faster then cache the previous result (or precompute a couple thousand) and just do the one new iteration instead of iterating over the entire array every time. That also saves you from allocating a bunch of arrays every time you compute a new sample.

You can also use some other PRNG, like PCG or just an xorshift. It doesn't seem like the power fraction one offers any particular benefits for the jitter other than being somewhat mathematically neat.

Xerophyte fucked around with this message at 16:22 on Nov 26, 2022
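To put numbers on the precision point, here is a minimal Python sketch (Python because the article's pseudo-code is Python). It compares the direct `fmod(pow(...))` expression quoted earlier in the thread with an exact big-integer evaluation of the same quantity, frac((1 + 1/x)^y) = ((x + 1)^y mod x^y) / x^y. The function names are made up for illustration, and the big-integer version is a stand-in that leans on Python's arbitrary-precision ints; it is not the digit-array routine from the article.

```python
import math


def frac_pow_float(x: int, y: int) -> float:
    """Direct version: fractional part of (1 + 1/x)**y computed in doubles."""
    return math.fmod(math.pow(1.0 + 1.0 / x, y), 1.0)


def frac_pow_exact(x: int, y: int) -> float:
    """Same quantity via exact integers: ((x + 1)**y mod x**y) / x**y."""
    denom = x ** y
    # The remainder is exact; only the final division rounds to a double.
    return ((x + 1) ** y % denom) / denom


if __name__ == "__main__":
    # Hypothetical comparison run for x = 2 (the first jitter coordinate).
    for y in (2, 10, 50, 100, 200):
        print(y, frac_pow_float(2, y), frac_pow_exact(2, y))
```

With x = 2 the double version returns exactly 0.0 at y = 200, because 1.5^200 is far beyond 2^53 and a double that large has no fractional bits left. `math.pow` also raises `OverflowError` once 1.5^y leaves the double range, a bit past y = 1750.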

|

|

|

At the sizes you're talking about, (400,000 elements in the array), it should be single-digit milliseconds for each iteration of the inner loop. That's like, based on the physical limitations of how fast your CPU can do 400,000 integer divisions. So you can speed it up by a factor of 200 by caching your interim results and only doing one iteration of the outer loop for each call, maybe getting another 2x or 4x by writing highly optimized C or whatever, but you're not getting very much faster than that. Note that the code in the example uses very low values of X intentionally, to avoid this blowup. Having a 10000-element array when you're computing x=3,y=3000 is lightning fast compared to what you're trying to do.
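The caching idea can be sketched in Python roughly as follows. This is an assumption-heavy illustration: `FracPowStream` is a hypothetical name, and it keeps the running powers as exact Python integers rather than the digit array the article uses. The point is only that each new sample costs one incremental step instead of a full recomputation from y = 1.

```python
class FracPowStream:
    """Yields frac((1 + 1/x)**y) for y = 1, 2, 3, ... with one step per call."""

    def __init__(self, x: int) -> None:
        self.x = x
        self.num = 1  # (x + 1)**y, kept exactly between calls
        self.den = 1  # x**y, kept exactly between calls

    def step(self) -> float:
        # Advance y by one: a single big-int multiply per term, no restart.
        self.num *= self.x + 1
        self.den *= self.x
        return (self.num % self.den) / self.den


if __name__ == "__main__":
    stream = FracPowStream(2)
    print([stream.step() for _ in range(5)])
```

The integers still grow with y, so each step gets slowly more expensive, but the work per sample no longer repeats everything that came before it.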

|

|

|

|

Ah, sorry about that, I miswrote in my OP. x1 and x2 are 2 and 3 respectively, y1 and y2 are n+1. Thanks for y'all's help and input. I'm going to look into using a different prn.
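For the "different PRNG" route, a minimal xorshift sketch in Python might look like this. It uses the classic 32-bit xorshift shift triple (13, 17, 5); the class name, default seed, and the float conversion are illustrative choices, and nothing here comes from the article.

```python
class XorShift32:
    """32-bit xorshift generator; the state must never be zero."""

    MASK = 0xFFFFFFFF

    def __init__(self, seed: int = 2463534242) -> None:
        seed &= self.MASK
        if seed == 0:
            raise ValueError("xorshift state must be non-zero")
        self.state = seed

    def next_u32(self) -> int:
        x = self.state
        x ^= (x << 13) & self.MASK  # mask keeps the value within 32 bits
        x ^= x >> 17
        x ^= (x << 5) & self.MASK
        self.state = x
        return x

    def next_float(self) -> float:
        """Uniform-ish float in [0, 1)."""
        return self.next_u32() / 4294967296.0


if __name__ == "__main__":
    rng = XorShift32(1)
    # Two draws per sample would give a 2D jitter offset.
    print(rng.next_float(), rng.next_float())
```

Each draw is a handful of integer operations regardless of how many samples came before, and the sequence is fully determined by the seed, so regenerated texture sets stay reproducible.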

|

|

|

|

is this for stochastic transparency or something? You're in the game dev thread so the question would be whether regular screen door is enough.

Even otherwise the question is first 'okay, why?' just based on the dimensions you're giving. More specifically: Why do you need such uniform noise? Why do you need so many points of noise? Do you even need noise in the first place or just a uniform distribution of points? Are you expecting to generate this per frame?

I just find it too easy to dig into the algorithm and miss the forest despite it being on fire.

Ranzear fucked around with this message at 20:05 on Nov 26, 2022

|

|

|

Ranzear posted:
is this for stochastic transparency or something? You're in the game dev thread so the question would be whether regular screen door is enough.

Unlike the paper I'm just using 8 textures (stored in 2 x 4 channels), but it's still a lot of fiddly work, especially if you want to iterate. I wrote a TAM generator in Godot to that end.

The reason I want to utilize a quasi-random sequence is to ensure that the tones and mipmaps will build upon each other in a nice-looking and predictable way. For my project I'm attempting stippling rather than hatching, and it seemed to me that something blue noise-ish would look organic (Sobol and Halton produced too many clusters, R2 was too structured).

I'm sure I've made things needlessly complicated, and I know I'm using the wrong tools for the job (my brain and Godot).
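For reference, the plain (unjittered) R2 sequence is cheap to generate on its own. The Python sketch below uses the commonly published definition based on the plastic constant; the function name and the 0.5 starting offset are illustrative assumptions, and the jitter step from the article is deliberately left out.

```python
# Plastic constant: the real root of g**3 = g + 1.
PLASTIC = 1.32471795724474602596


def r2_point(n: int, seed: float = 0.5):
    """Return the n-th 2D point of the R2 sequence in the unit square."""
    a1 = 1.0 / PLASTIC
    a2 = 1.0 / (PLASTIC * PLASTIC)
    return ((seed + a1 * n) % 1.0, (seed + a2 * n) % 1.0)


if __name__ == "__main__":
    # First few stipple positions; scale by the texture size to get pixels.
    for n in range(5):
        print(r2_point(n))
```

Because each point depends only on its index n, any prefix of the sequence is itself well distributed, which is the property that lets denser tones build on sparser ones.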

|

|

|

|

If you're already packing it into textures and working with shaders, find/write a compute fragment to generate the noise instead?

|

|

|

|

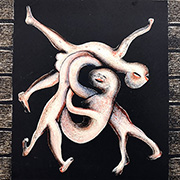

I had another thought that maybe I wasn't "actually" implementing a "random flood fill" properly just from randomly swapping between blobs, because ultimately they were still doing a breadth-first search; it's just that sometimes some blobs got to be greedy and take extra turns.

I implemented a Heap/Priority Queue from Sebastian Lague's A* Pathfinding tutorial and made a simple object that just stores 2 ints: the ID of a given cell, and its "rank", which is a random number between 0 and 100 * the number of cells. This random rank basically randomizes the order in which items in the queue get popped. This seems to have given nicer results than before.

Next step is to generate my continents somehow, and then I can begin attempting to assign plate types and movement vectors to the plates. I haven't really thought much about how to go about taking this information and using it, but soon(tm).
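The random-rank flood fill described above can be sketched in Python with the standard `heapq` module. This is a guess at the shape of the approach, not the poster's code: it assumes a plain rectangular grid with 4-way neighbours, and `random_flood_fill` and its parameters are hypothetical names.

```python
import heapq
import random


def random_flood_fill(width, height, seeds, rng=None):
    """Grow one region per seed cell; returns a row-major list of region IDs."""
    rng = rng or random.Random()
    owner = [-1] * (width * height)  # -1 marks an unclaimed cell
    max_rank = 100 * width * height  # rank range taken from the post
    heap = []
    for region, (sx, sy) in enumerate(seeds):
        cell = sy * width + sx
        owner[cell] = region
        heapq.heappush(heap, (rng.randint(0, max_rank), cell))
    while heap:
        # The lowest random rank pops first, so expansion order is shuffled
        # instead of following breadth-first distance from the seeds.
        _, cell = heapq.heappop(heap)
        x, y = cell % width, cell // width
        for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if 0 <= nx < width and 0 <= ny < height:
                neighbour = ny * width + nx
                if owner[neighbour] == -1:
                    owner[neighbour] = owner[cell]
                    heapq.heappush(heap, (rng.randint(0, max_rank), neighbour))
    return owner


if __name__ == "__main__":
    grid = random_flood_fill(16, 8, [(1, 1), (14, 6), (8, 3)], random.Random(42))
    for row in range(8):
        print("".join(str(c) for c in grid[row * 16:(row + 1) * 16]))
```

Every cell is claimed exactly once at the moment it is pushed, so regions stay contiguous while their borders come out ragged rather than diamond-shaped.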

|

|

|

|

Ranzear posted:
If you're already packing it into textures and working with shaders, find/write a compute fragment to generate the noise instead?

|

|

|

|

Rocko Bonaparte posted:
Are there any interesting assets in the unity store for tools? Also I would be interested in a high-quality model I could take apart and mess with to see how stuff is really done with animations.

Unity has bought a lot of the high-quality tooling and released it for free. Odin Inspector is worth the price. I also like some of the custom log windows, but the base one has gotten a lot better since the days when I thought they were a must-have. NodeCanvas is good if you need behavior trees.

|

|

|

|

anatomi posted:
I'm packing the textures in PS. But compute shaders do seem like fun...

Oh man. My first rule of gamedev is don't do anything manually that isn't explicitly visible art, especially if it's just 2D noise. Hell, I don't even color sprites manually but instead use grayscale and masking so I can use the same sprites across different teams. Hell, I'm near enough to using strongly ramped normal maps to shade the sprites automatically too.

You're in a weird mixup, though, where you want this to be faster to generate but are reusing the textures anyway? I'm missing something.

Ranzear fucked around with this message at 20:56 on Nov 28, 2022

|

|

|

Haha. Well, I've automated the packing process in PS. Actions are useful. I stumbled into this rabbit hole because I make a lot of changes to the TAM. I'll change the brushes, or the size of the stippling strokes, or the levels of coverage... All of which require me to recreate the texture sets, and in some cases generate new points.

|

|

|

|

Don't want to spam this too much, but figured some of you might be interested: We're running a game design jam. Itch.io page here. It's set up to be beginner-friendly so there's no obligation to create a demo: you can just as easily submit a pitch deck, a board or card game version of your concept, etc. Also have a few prizes available including tutoring hours to help people bring their concepts closer to reality.

|

|

|

|

Is the norm for an Xbox One controller in a menu to have A select and B cancel? I'm disregarding the menu button and looking at the diamond button quadrant or whatever they call it.

|

|

|

|

Rocko Bonaparte posted:
Is the norm for an Xbox One controller in a menu to have A select and B cancel? I'm disregarding the menu button and looking at the diamond button quadrant or whatever they call it.

Yes, at least in NA (and increasingly everywhere). Same thing on PlayStation: Cross is select and Circle is cancel. In Japan it typically used to be reversed, and Sony would make you respect that, but I don't think they make you anymore. Switch is A (east) select, B (south) cancel. But if you only care about PC, I'd say every controller should be treated like an Xbox controller.

I usually call them the "face buttons" and try to call them by cardinal direction, so on an Xbox controller it's A South, B East, Y North, X West.
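The cardinal-direction naming can be written down as a small lookup table. This Python sketch is only an illustration of the convention described above; the table and function names are made up, and it does not reflect any particular input library's API.

```python
# Face-button labels keyed by physical position on the right-hand cluster.
FACE_BUTTONS = {
    "xbox": {"south": "A", "east": "B", "west": "X", "north": "Y"},
    "playstation": {"south": "Cross", "east": "Circle", "west": "Square", "north": "Triangle"},
    "switch": {"south": "B", "east": "A", "west": "Y", "north": "X"},
}

# Menu conventions from the post: submit is south everywhere except Switch.
MENU_ACTIONS = {
    "xbox": {"submit": "south", "cancel": "east"},
    "playstation": {"submit": "south", "cancel": "east"},
    "switch": {"submit": "east", "cancel": "south"},
}


def menu_label(pad: str, action: str) -> str:
    """Return the printed button label a prompt should show for a menu action."""
    return FACE_BUTTONS[pad][MENU_ACTIONS[pad][action]]


if __name__ == "__main__":
    for pad in FACE_BUTTONS:
        print(pad, menu_label(pad, "submit"), menu_label(pad, "cancel"))
```

Binding game logic to positions and looking up labels only when drawing prompts keeps the "A means different things" problem out of the rest of the code.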

|

|

|

|

I should start calling buttons by their gravis gamepad color

|

|

|

|

leper khan posted:
I should start calling buttons by their gravis gamepad color

On Mortal Kombat we referred to the face buttons as 1/2/3/4, corresponding to what they mapped to on an MK arcade machine (W/N/S/E). Another thing to learn, but it removes ambiguity.

The real answer of course is to call them O/Y/U/A

|

|

|

|

more falafel please posted:
On Mortal Kombat we referred to the face buttons as 1/2/3/4, corresponding to what they mapped to on an MK arcade machine (W/N/S/E). Another thing to learn, but it removes ambiguity.

Shameful. USB HID spec clearly states that 0/1/2/3 correspond to S/E/W/N for the right cluster. 4/5/6/7 are L1/R1/L2/R2

https://w3c.github.io/gamepad/#remapping

I don't think I'm immediately aware of any controller that behaves this way.

|

|

|

|

poo poo I grabbed the web controller one not the usb one. Oh well, they're probably different
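For what it's worth, the first eight button indices of the W3C Gamepad "standard" mapping linked above can be captured as a small Python enum. The member names are shorthand chosen for this sketch (the spec describes the buttons by position, e.g. "bottom button in right cluster"), so treat them as illustrative.

```python
from enum import IntEnum


class StandardButton(IntEnum):
    """Indices 0-7 of the W3C Gamepad standard mapping (names are shorthand)."""

    SOUTH = 0  # bottom button in right cluster
    EAST = 1   # right button in right cluster
    WEST = 2   # left button in right cluster
    NORTH = 3  # top button in right cluster
    L1 = 4     # top left front button
    R1 = 5     # top right front button
    L2 = 6     # bottom left front button
    R2 = 7     # bottom right front button


if __name__ == "__main__":
    for button in StandardButton:
        print(int(button), button.name)
```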

|

|

|

|

leper khan posted:
Shameful.

Yeah, these were just #defines that mapped to the platform-specific button code. A good portion of the codebase and most of the standards were established well before USB was a thing.

|

|

|

|

Thanks everybody. I'm using InControl in Unity. It looks like for an Xbox One controller I can set submit to Action1 and cancel to Action2, and it would follow the conventions on that particular controller. I can't say how that extends to other controllers, but Action1/Action2 is rather suspicious.

|

|

|

|

I'm learning armatures in Blender, and I've now exported about 40 skeletal mesh FBX attempts to hook up correctly to a control rig in UE. 0 for 40 so far. How can it be this difficult to get the scale / axes correct? And as with anything Blender related, there are 1000 videos with 1000 different hack fixes to figure this out.

|

|

|

|

Purely anecdotal, but I've never heard of a situation where exporting to FBX and importing that FBX into another tool worked, without writing a custom FBX exporter and/or importer.

|

|

|

|

lord funk posted:
I'm learning armatures in Blender, and I've now exported about 40 skeletal mesh FBX attempts to hook up correctly to a control rig in UE.

Oof, I had this workflow largely figured out for Blender to Unity3D, but I haven't learned it yet for Unreal. Good luck!

|

|

|

|

more falafel please posted:
Purely anecdotal, but I've never heard of a situation where exporting to FBX and importing that FBX into another tool worked, without writing a custom FBX exporter and/or importer.

I spent a day or two figuring out the exact series of steps needed to get something from Blender to Unity, exhaustively documented my process, then wrote a script to automate it for me because it was ridiculously finicky. Notably, one of the steps for getting a rigged model to work in Unity was to rotate it 90 degrees about one axis and apply that transformation before exporting. Something about having an armature on the model makes it especially weird to work with.

|

|

|

|

Yeah this is so, so finicky. Like, you can't even leave the armature named 'Armature'. That magically breaks it (I'm not joking).

|

|

|

|

lord funk posted:
Yeah this is so, so finicky. Like, you can't even leave the armature named 'Armature'. That magically breaks it (I'm not joking).

https://github.com/xavier150/Blender-For-UnrealEngine-Addons

There's still some stuff where you need to slowly learn how Unreal 'wants it', but afaik for root motion it expects the root bone to be named "root". Otherwise this addon will error-check the export for you and fix the scaling and the front axis and all the other semi-finicky things.

|

|

|

|

jizzy sillage posted:
https://github.com/xavier150/Blender-For-UnrealEngine-Addons

using their software? Jesus christ.

edit: holy poo poo it's $18 a day to use Maya using tokens

edit2: and they expire after a year?! jfc

lord funk fucked around with this message at 02:55 on Dec 13, 2022

|

|

|

lord funk posted:

https://www.immersivelimit.com/tutorials/export-animations-from-blender-to-unreal-engine I've used this blog. It pretty much worked like a charm to get animations from Blender to Unreal. Edit: Added link

Mr Shiny Pants fucked around with this message at 21:17 on Dec 13, 2022

|

|

|

Thanks for the tips! Before I go further, I should probably ask if I'm actually doing the right thing...

I'm trying to rig up some mech space ships for animation. The end goal is to make an animation blueprint in UE so moving / firing weapons / etc. makes the parts move. Something like these ships:

[image not preserved]

So I don't actually want any 'bendy' parts of the ship. All the components should stay rigid. Is creating a skeletal mesh the right approach here? I want to use Control Rig in UE to make my animations, which needs a skeleton I think. I haven't gotten far enough to see how I'm supposed to connect the meshes to the skeleton correctly.

|

|

|

|

lord funk posted:
Thanks for the tips! Before I go further, I should probably ask if I'm actually doing the right thing...

Even if you have no actual bendy parts, in a lot of tools you get more options for animations using a rigged and skinned mesh. Just don't have any vertices with multiple weights: to avoid bending, make sure each vertex has only one influence and you should be good.
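The "one influence per vertex" rule is easy to check or enforce in a script. The Python sketch below works on a made-up data layout (a list of `{bone_name: weight}` dicts, one per vertex, each with at least one entry); it is not Blender's or Unreal's API, and the function names are hypothetical.

```python
def is_rigid(weights, tol=1e-6):
    """True if every vertex has exactly one non-zero influence, equal to 1.0."""
    return all(
        sum(1 for w in vertex.values() if w > tol) == 1
        and abs(max(vertex.values()) - 1.0) <= tol
        for vertex in weights
    )


def make_rigid(weights):
    """Snap each vertex to its strongest bone: weight 1.0 there, nothing else."""
    rigid = []
    for vertex in weights:
        strongest = max(vertex, key=vertex.get)  # assumes a non-empty dict
        rigid.append({strongest: 1.0})
    return rigid


if __name__ == "__main__":
    skin = [{"hull": 0.7, "wing": 0.3}, {"wing": 1.0}]
    print(is_rigid(skin), make_rigid(skin), is_rigid(make_rigid(skin)))
```

Snapping to the dominant bone is the bluntest possible fix; for a mech it is usually cleaner to assign each separate part wholesale to its bone in the first place.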

|

|

|

|

Yeah, having an armature will make animating stuff a lot easier, compared to breaking the mesh out into separate meshes that you manually rotate/translate/etc. As Chainclaw says, as long as each vertex has a weight of 1 on one bone and 0 on all other bones, you'll get a good mechanical look to the motion. You'll probably also want to change your animation curves -- the default is usually "ease in + ease out", while mechanical motion is usually more like "linear in + ease out" (i.e. start motion at full speed, gradually slow to stop at the end).
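The difference between the two curve shapes is easy to see numerically. In this Python sketch, smoothstep stands in for an "ease in + ease out" curve and a quadratic ease-out stands in for "linear in + ease out"; both are illustrative choices and neither is the actual curve any particular animation tool uses by default.

```python
def ease_in_out(t: float) -> float:
    """Smoothstep: starts and ends at rest (zero speed at t = 0 and t = 1)."""
    return t * t * (3.0 - 2.0 * t)


def linear_in_ease_out(t: float) -> float:
    """Quadratic ease-out: full speed at t = 0, decelerating to rest at t = 1."""
    return 1.0 - (1.0 - t) ** 2


def speed(curve, t: float, h: float = 1e-6) -> float:
    """Numerical slope of a curve at t (central difference)."""
    return (curve(t + h) - curve(t - h)) / (2.0 * h)


if __name__ == "__main__":
    for name, curve in (("ease in/out", ease_in_out), ("linear in/ease out", linear_in_ease_out)):
        print(name, [round(speed(curve, t), 3) for t in (0.0, 0.5, 1.0)])
```

Both curves run from 0 to 1 over the same interval; the mechanical one simply has no wind-up at the start, which is what reads as a servo or piston snapping into motion.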

|

|

|

|

Awesome awesome, thanks. The weighting thing makes sense.

|

|

|

|

That reminds me, has Blender improved its armature/rigging solver recently? I remember working on a rig and I kept running into problems trying to make more realistic control rigs, with the parenting causing cycle problems; attempts to use drivers to work around this were kinda slow, etc. Blender's been through like a bazillion updates since I last used it though, which was back I think around 1.8 / early 2, and now it's like 2.7/2.8?

|

|

|

|

|

lord funk posted:
Awesome awesome, thanks. The weighting thing makes sense.

Make sure everything is parented to the armature, otherwise Unreal will import it as separate objects.

|

|

|