|

Agrikk posted: Oh I get it the whole overkill thing completely *side-eyes 42U cabinet in garage* I haven't saturated a 10Gb link, but get close if I'm doing orchestrated VM rollouts; the max I've seen is maybe 7Gb across a single link.

|

|

|

|

|

| # ? Jun 9, 2024 23:54 |

|

I'll see close to 10g when doing VM migrations from host to host in my homelab, and running e.g. CrystalDiskMark on a network VM disk will see up to 1GB/s sequential reads. The latency matters much more than the bandwidth though for the usability of network disks with VMs.

|

|

|

|

Twerk from Home posted: For what it's worth all the documentation tends to recommend 25gbit over 40gbit because 25gbit is lower latency.

40g is four 10g links muxed into one while 25g is natively 25. That's why there is less latency.

|

|

|

|

So for your garage rack folks, what do you do for cooling? It's not like my garage has A/C, and it gets like 90+ with 80+% humidity around here, neither of which seems positive for hardware health.

|

|

|

|

Azhais posted: So for your garage rack folks, what do you do for cooling? It's not like my garage has A/C, and it gets like 90+ with 80+% humidity around here, neither of which seems positive for hardware health.

My rack has the rear facing a window with a vent fan that pulls the air outside, and in the summer the AC cools the garage slightly.

|

|

|

|

I have a small ventilation fan set near the ceiling of my garage to exhaust hot air, but my garage basically stays at 50F all winter and 85-90F in the summer. I don't sweat it because data centers run at around 85F these days. For me the big deal is dust. For whatever reason I have a dusty garage and so I only buy cases with filters and/or use dust screens on fans that I have to vacuum or blow out 1-2 times a month.

|

|

|

|

Where do people go for cheap used server memory? I'm looking to grab some 64/128GB DDR4 RDIMMs. I've typically used eBay for parts, but are there other places I should be checking out?

|

|

|

|

I just use eBay

|

|

|

|

eBay or FB Marketplace, surprisingly.

|

|

|

|

See? Now I'm all interested in upping RAM in my three ESX hosts plus two TrueNAS boxes because

|

|

|

|

Not sure if they've been posted here before, but there are US and UK private Facebook groups where IT pros sell off gear that their employers are retiring/they have swiped that sometimes have some great stuff at reasonable prices. US: https://www.facebook.com/groups/282932402562324/?mibextid=uJjRxr UK: https://www.facebook.com/groups/uk.itequipment.buyandsell/?mibextid=uJjRxr Kind of a pain to navigate if you're looking for something specific but all manner of cool poo poo pops up from time to time.

|

|

|

|

Having just spent some cash to upgrade my three ESXi boxes to Xeon E5-2667v2 chips with 128G of RAM each, I'm wondering if there's a better value than running ten year old processors on ten year old Supermicro boards with ten year old RAM. Sure, this kit has been bombproof and my 3 hypervisors to 2 SAN devices across 10g fiber work great, but I'm wondering if I've lived in vintage Supermicro land for long enough, especially considering that the E5 processor is not supported after ESX 7. Is there a more performant budget solution that I should be considering for my next upgrade? What is the homelab zeitgeist for server hardware?

|

|

|

|

Are you looking for a similar amount of capacity with lower power consumption? There are probably deals to be had on AM4 hardware at this point, e.g. Ryzen 3950x/5950x that can take 128GB RAM on an X570 motherboard, but you'll sacrifice PCIe lanes and niceties like BMC, and the unbuffered ECC still commands a premium. If you want more capacity then maybe look into previous gen EPYC gear.

|

|

|

|

For my hypervisors I only need one PCI slot and a SATA port. As for the rest, I'm looking for better memory performance as most of my workloads are memory-intensive things like databases and in-memory caching. Lower power draw would be nice but not necessary. Same with OOB management as I've gotten used to Supermicro IPMI but a walk to the garage wouldn't be the end of the world.

|

|

|

|

Those of you running SSDs on old-rear end hardware: How are you handling TRIM? I just got bit by a near-full disk turning into a persistent failure even after deleting stuff at the filesystem level because the stupid MegaRAID SAS 9361-8i card will not pass through TRIM in any way, shape, or form if it's in RAID mode. Had to pull the disk out into a JBOD and manually TRIM: https://www.redhat.com/sysadmin/trim-discard-ssds
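One way to spot this before a disk fills up is to ask the kernel whether discard requests can reach each device at all. The sketch below is a rough illustration, assuming a Linux host where each block device exposes `queue/discard_max_bytes` under `/sys/block`, and assuming that a value of 0 there means the device (or the controller in front of it) does not accept discards. The function name `discard_support` is made up for this example.

```python
from pathlib import Path


def discard_support(sysfs_root: str = "/sys/block") -> dict[str, bool]:
    """Map each block device to whether the kernel reports discard (TRIM) support."""
    root = Path(sysfs_root)
    if not root.is_dir():
        return {}  # not a Linux host, or sysfs is not mounted
    result = {}
    for dev in sorted(root.iterdir()):
        limit_file = dev / "queue" / "discard_max_bytes"
        if not limit_file.is_file():
            continue  # skip entries that do not expose queue limits
        # Assumption: 0 means discard requests cannot reach the device.
        result[dev.name] = int(limit_file.read_text().strip()) > 0
    return result


if __name__ == "__main__":
    for name, ok in discard_support().items():
        print(f"{name}: {'discard supported' if ok else 'no discard'}")
```

A volume sitting behind a RAID-mode controller that swallows TRIM would be expected to show up as "no discard" here, which is the cue to move the disk to JBOD/passthrough before running `fstrim`.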

|

|

|

|

Hardware RAID, man.

|

|

|

|

I'm not and never have. What is the issue you are solving with TRIM? I bit the bullet a few years ago and abandoned hardware RAID in favor of passthrough-mode-and-ZFS on TrueNAS and in neither case did I make any kind of allowance for it.

|

|

|

|

Agrikk posted: I'm not and never have. What is the issue you are solving with TRIM?

Write amplification, from not discarding stale data in the cells. Without TRIM you are at the mercy of the disk controller, which usually does a poo poo job at it.

|

|

|

|

Agrikk posted: I'm not and never have. What is the issue you are solving with TRIM?

After a 240GB Intel SSD got pretty near full, I fixed that by deleting stuff and got reported filesystem disk usage under 50%. I then saw the machine become completely nonresponsive and report "StorageController0 access degraded or unavailable - Assertion" in its health log. I can't get any crash logs because the storage is unmounting, and it was doing so within half an hour of whenever I rebooted it. Pulling the disk out and manually trimming it with `fstrim` seems to have resolved this.

Edit: OK, by "pretty full" I mean /tmp filled up the entire disk and then logs about all the full filesystem and write errors packed up the last few bytes until it hit well and truly 100% full.

Twerk from Home fucked around with this message at 00:19 on Jan 3, 2024

|

|

|

Agrikk posted: Having just spent some cash to upgrade my three ESXi boxes to Xeon E5-2667v2 chips with 128G of RAM each, I'm wondering if there's a better value than running ten year old processors on ten year old Supermicro boards with ten year old RAM. Sure, this kit has been bombproof and my 3 hypervisors to 2 SAN devices across 10g fiber work great, but I'm wondering if I've lived in vintage Supermicro land for long enough, especially considering that the E5 processor is not supported after ESX 7.

AM4 has been mentioned above, you'll get better performance for less power. Used Supermicro H11 + Epyc + memory combos are pretty reasonably priced on eBay as well, but they're also not on the HCL for ESXi 8. What kind of storage are you running?

|

|

|

|

Wibla posted: AM4 has been mentioned above, you'll get better performance for less power. Used Supermicro H11 + Epyc + memory combos are pretty reasonably priced on eBay as well, but they're also not on the HCL for ESXi 8.

Epyc processors aren't on the HCL? What the poo poo? I have two TrueNAS boxes serving a combination of NFS shares and iSCSI LUNs: one crammed full of as many M.2 sticks and SSDs as I could fit, and the other with a bunch of 4TB spinning disks. PCIe bifurcation is a pain in the rear end to sort out I tell you what.

|

|

|

|

Do the Epyc offerings work as a server + gaming PC? Wouldn't mind consolidating down to one machine for both my homelab and gaming.

|

|

|

|

Blurb3947 posted: Do the Epyc offerings work as a server + gaming PC? Wouldn't mind consolidating down to one machine for both my homelab and gaming.

I'd expect performance to be somewhere between mediocre and weird, given the higher latencies, many different NUMA domains, and generally low clocks. You also may run into games that crash or refuse to launch upon seeing that many cores.

|

|

|

|

Agrikk posted: Epyc processors aren't on the HCL? What the poo poo?

I was looking at the Supermicro list of motherboards. here

Agrikk posted: I have two trueNAS boxes in a combination of NFS shares and iSCSI luns- one crammed full of as many M2 sticks and SSDs I could fit, and the other has a bunch of 4TB spinning disks.

Ooo, you should look into 25 gigabit networking then.

|

|

|

|

Wibla posted: Ooo, You should look into 25 gigabit networking then

At the moment I am nowhere near 10g use, never mind 25g. But don't think for a second that I won't end up there eventually.

|

|

|

|

Agrikk posted: At the moment I am nowhere near 10g use, nevermind 25g.

It's not necessarily so much about the raw speed... but more about reduced latency. It matters if you already have NVMe drives in your storage solution.

|

|

|

|

Can you tell me more about improving latency by switching to 25g? I'm already at 1ms pings and running jumbo frames on my storage VLAN. How does increasing the bandwidth ceiling improve latency if I'm not close to saturating the link?

|

|

|

|

Agrikk posted: Can you tell me more about improving latency by switching to 25g?

802.3by 25gbit Ethernet is faster than 10 gig: the delay to serialize a packet is lower because the actual rate of transmission is faster. For a 1500-byte frame, 25gbit has 0.48µs serialization delay compared to 1.2µs for 10g Ethernet over SFP+. If you're doing this over Cat6 wiring, both are slower. There are multiple kinds of 100gbit Ethernet; you can do it as 10x10 or 4x25. You can do stuff like NVMe over TCP now, so this would matter if you're trying to use NVMe storage over the network. https://nvmexpress.org/welcome-nvme-tcp-to-the-nvme-of-family-of-transports/
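The 0.48µs and 1.2µs figures fall out of simple arithmetic: frame size in bits divided by line rate. A minimal sketch follows, assuming a 1500-byte frame (which is what makes those two numbers come out exactly) and ignoring preamble, inter-frame gap, and any PHY or switch latency. The function name is made up for this example.

```python
def serialization_delay_us(frame_bytes: int, link_gbps: float) -> float:
    """Time to clock one frame onto the wire, in microseconds."""
    bits = frame_bytes * 8
    return bits / (link_gbps * 1e9) * 1e6


if __name__ == "__main__":
    for frame in (1500, 9000):  # standard MTU and a common jumbo MTU
        for rate in (10, 25):
            delay = serialization_delay_us(frame, rate)
            print(f"{frame} B at {rate} Gb/s: {delay:.2f} µs")
```

With 9000-byte jumbo frames the same formula gives 7.2µs at 10g and 2.88µs at 25g, so the per-frame gap gets wider in absolute terms even on a link that is nowhere near saturated.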

|

|

|

|

So I got the NetApp DS6600 running, and finally found controllers that work in JBOD mode only. The only weird issue: with one controller in, only the top 4 drawers showed up; once I put in a second one and daisy-chained them together via SAS, the 5th drawer populated. Oh well.

|

|

|

|

Nice setup for backing up the internet.

|

|

|

|

This makes me want to repost the photo of my RPi homelab server that I mounted to the leg of a coffee table with twist ties.

|

|

|

|

What's the runtime on that UPS?

|

|

|

|

Cenodoxus posted: What's the runtime on that UPS?

16 minutes or so. It's scripted to auto-shutdown the storage and the virtualization with a delay of about 4 minutes between them. It's largely because it backs the whole rack right now; I need to split up the smaller UPSs for the storage arrays and the main UPS.

|

|

|

|

Looking for some advice on homelab software/process/solutions rather than the actual hardware portion of it. Currently I run a few web-exposed services for friends and family on my homelab hardware: Emby, Nextcloud, Bitwarden, etc. I want to keep janitoring of this part to a minimum and avoid breaking it catastrophically; it's OK if my homelabbing breaks it for a day or two. I have a pfSense router, UniFi switches, a pair of Proxmox servers, some Ubuntu VMs, an Unraid NAS I'm looking to eventually move off of, and a couple of Windows PCs, one of which stays online consistently. It's small enough that I mostly manage, update, and set up new things manually. The only things I really have running automatically are backups. I'm planning to set up Ansible and Semaphore and automate some pretty simple stuff with that: create a backup and then update VMs, push software installs to Windows PCs, and spin up new Ubuntu VMs with basic settings, user accounts, and specific software installs done and ready to go. Just looking for general advice on setting any of that up, pitfalls to avoid, alternatives to consider, extra things I should take a look at implementing at the same time, etc.

|

|

|

|

THF13 posted: Just looking for general advice on setting any of that up, pitfalls to avoid, alternatives to consider, extra things I should take a look at implementing at the same time, etc.

Stuff I've learned so far:

Have a backup plan (SSH keys, etc) to access your systems if you automate yourself into the ditch. I use Ansible to manage the user accounts on all my non-AD-joined Linux hosts. I keep an SSH key locked away to get in as root in a break-glass situation.

Think about inter-dependencies between your systems when adding new core services. I run my Domain Controllers in Proxmox and they handle the DNS zone for my local network. The VM disks are stored on Ceph. One time I had to do a cold start of everything, and my Proxmox hosts couldn't resolve each other to connect their Ceph daemons, so my DCs couldn't boot to serve the DNS requests. Circular dependency, whoops.

Use LXC containers wherever you can, they're fantastic and very lightweight. No live migration option kind of stinks, but if you store the container images on shared storage they can still be cold-migrated between hosts in seconds.

|

|

|

|

The biggest thing by far is: Can the system (as in, set of devices) cold-boot without getting into a catch-22 where some service needs something else? Big butt providers like Amazon, Facebook, Google, and many others have found out the hard way that they made that mistake, so don't assume you can't make it.
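The cold-boot catch-22 can be checked on paper before it bites. Here is a small sketch, assuming you write down by hand which service needs which other service to be up first, using Python's standard `graphlib` module to either produce a boot order or report the loop. The service names and the `boot_order` helper are hypothetical, loosely modeled on the DNS/Ceph story earlier in the thread.

```python
from graphlib import CycleError, TopologicalSorter


def boot_order(depends_on: dict[str, set[str]]) -> list[str]:
    """Return a valid cold-boot order, or raise CycleError on a catch-22."""
    return list(TopologicalSorter(depends_on).static_order())


# Hypothetical inventory: each key lists what must already be running.
lab = {
    "ceph": {"dns"},                # Ceph daemons resolve their peers by name
    "domain-controller": {"ceph"},  # DC VM disks live on Ceph
    "dns": {"domain-controller"},   # the DNS zone is served by the DCs
}

if __name__ == "__main__":
    try:
        print("boot order:", boot_order(lab))
    except CycleError as err:
        # The second element of args holds the nodes that form the cycle.
        print("circular dependency:", err.args[1])
```

Breaking the loop in the model (for example, giving `dns` an empty dependency set because the hosts resolve each other from static entries) makes `boot_order` return a usable sequence, which mirrors the real-world fix of removing one edge from the cycle.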

|

|

|

|

|

My bootstrap device for my homelab is a Pi on my network set to send out WOL packets to all my devices 5 minutes after boot (to ensure my home isn't experiencing brownouts still). It's set to always boot as soon as it's provided power. I also set up a backup VPN on it which I can use to SSH into my home network from anywhere in the world to power cycle stuff if it somehow shuts down outside a general power outage. Short of a hardware failure for the Pi (or my internet provider changing my IP, I guess, since my DDNS isn't on my bootstrap device), something like this should always be able to recover my homelab remotely.

Nitrousoxide fucked around with this message at 01:28 on Jan 11, 2024
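For anyone curious what the Pi is actually sending, a Wake-on-LAN "magic packet" is six 0xFF bytes followed by the target MAC address repeated 16 times, usually sent as a UDP broadcast. The sketch below is an illustration, not the poster's script; the function names, the broadcast address, and UDP port 9 are assumptions chosen for the example.

```python
import socket


def magic_packet(mac: str) -> bytes:
    """Build a Wake-on-LAN magic packet: 6 x 0xFF, then the MAC 16 times."""
    raw = bytes.fromhex(mac.replace(":", "").replace("-", ""))
    if len(raw) != 6:
        raise ValueError(f"expected a 6-byte MAC address, got {mac!r}")
    return b"\xff" * 6 + raw * 16


def wake(mac: str, broadcast: str = "255.255.255.255", port: int = 9) -> None:
    """Send the magic packet as a UDP broadcast on the local network."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(magic_packet(mac), (broadcast, port))
```

A boot-time script along the lines described would sleep for five minutes and then call `wake()` once per MAC address in its list; the target NICs also need Wake-on-LAN enabled in firmware for any of this to work.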

|

|

|

Nitrousoxide posted: My bootstrap device for my homelab is a Pi on my network set to send out WOL packets to all my devices 5 minutes after boot (to ensure my home isn't experiencing brownouts still). It's set to always boot as soon as it's provided power.

It also has a pair of mirrored disks which receive and store all the logging information from every device, and which are used by Prometheus (in addition to all the direct exporters on the devices) to make Grafana work. Once everything else is shut down, the RPi (and the PoE switch that powers it) can be powered by the UPS for a few days, though the electricity grid here in Denmark is pretty good, so outages usually last less than a day.

BlankSystemDaemon fucked around with this message at 02:25 on Jan 11, 2024

|

|

|

|

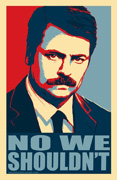

https://twitter.com/sysadafterdark/status/1747114461146620102 The sound of thousands of nerds grumbling at once, as free personal homelab ESXi is dead now.

|

|

|

|

|


|

I wonder if they're going to kill off VMUG and that subscription. Maybe it's time to build a Proxmox box.

|

|

|