|

Bloody posted:
i have a .net console app that i want to minimize to the system tray rather than the taskbar how do i do this

if you don't care about windows <= vista/server 2008, you can use GetConsoleWindow to get the handle to the window, and hook it to detect when it gets minimized (see SetWindowsHookEx; you'll need a hook DLL and a hook that can catch the minimize event; WH_CBT is a good one and will send you HCBT_MINMAX events). then you can hide it and add your tray icon. this is by far the simplest way, not necessarily the best

if you do care about windows vista and earlier, it's more complicated because in those versions console windows are managed by a system process and there is a limited amount of fuckery possible (in case of vista, next to none)

hackbunny fucked around with this message at 15:54 on Oct 17, 2014

|

|

|

|

| # ¿ May 11, 2024 06:06 |

|

take all dance kill me yes

e: going from memory

e nw up ask technician about pin set switch to beach sit on couch wear clasp push button take glass give glass to sam again again again take glass put glass in replicator take pin down se e e e e wait wait wait wait

e2: if I actually got it right, I played that game too drat much

hackbunny fucked around with this message at 09:27 on Oct 20, 2014

|

|

|

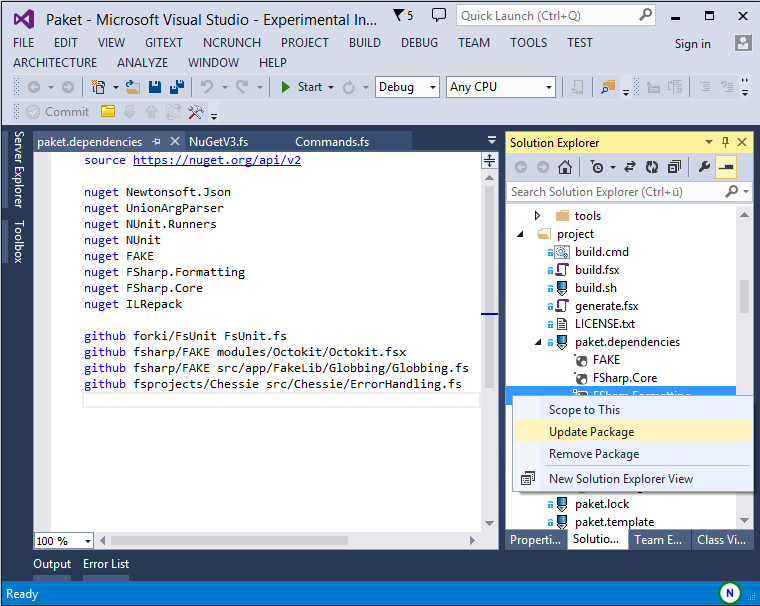

ninja: best build system y/y

|

|

|

|

FamDav posted:
are these good or bad questions rotor i need your validation

why are people so obsessed with data. most programming I do deals with states, data is incidental. even then the most important aspects of data in my day to day work are lifetime, ownership and mutable vs immutable, not format. I used to obsess over performance too but nowadays who cares, I'll just go O(n) and maybe some day ask a profiler if I should bother optimizing

personally, when my boss asked for suggestions about interview questions, I told him to make them read code. explain what this code does, fix the bug in this code, implement this new feature, etc.

|

|

|

|

I like programming. Programming is not terrible, it is in fact cool. Capitalization suggests this post is unironic

|

|

|

|

my ideal of programming is the starship enterprise (NCC 1701 D). serious. I rewatched tng a few years ago and it honest to god made me rethink programming

the enterprise's systems are so flexible! you can monitor, enable, disable, reroute any subsystem in real time! you can reconfigure everything, it's great. it's really hard to program like that, I realized, I just don't have the tools.

one time I was so frustrated with multi-threading in C that I dreamed an environment where you'd spawn subprograms instead of threads, it just seemed a more natural model for C. inter-process communication would be absolute garbage of course, because C's type system is "let's give a name to this range of bytes in memory", so it would probably take a whole new language to have something better than UNIX where everything eventually has to fit in a pipe or file of some sort. thanks to octopus guy (sorry will never remember your nickname right) I found out that actually exists, as Erlang.

I wish there were smaller scale versions of that, because a GUI application with background workers can get surprisingly complex! and I really wish sometimes I could just crash my workers (which each aggregate and transform data and events from several unreliable sources) and restart them.

since I can't have that, I try to do the next best thing: comprehensive logging, even of impossible conditions (it may be impossible today, what about when someone changes the code, or the code of another component?), self-healing state (yes, it results in a lot of redundant code), and hidden ways in which user actions can automatically unfuck state

I used to be all about assertions, obsessed with assertions, and assuming correctness to make "efficient" (and nearly impossible to debug) code ("if the code is in the correct state, then this pointer is actually a 16 bit variable stashed in the lower bits"). I'm still crazy for assertions but logging and self-healing state are the real stars of the show now

|

|

|

|

Kilroy posted:
I'm Wesley

you could be Geordi in that simulation, it could be worse

e: the simulation where you sacrifice him to save the enterprise, not the one where he makes out with the enterprise's warp drive designer

hackbunny fucked around with this message at 16:43 on Oct 20, 2015

|

|

|

I want to divert power to the shields in my programs, like mononcq does, drat it I'm envious

|

|

|

|

c++ makes you specify it explicitly and that's the only correct way

|

|

|

|

c++ is the light

|

|

|

|

Nitrocat posted:
i believe the concept you are looking at is software coupling between programs instead of modules.

can't exactly structure a mobile application in that way, can I. the iOS sandbox even specifically restricts you to a single process

|

|

|

|

don norman of "design of everyday things" fame has written a lot about it in terms of human-machine interface. machines, he says, are currently like infants in that all the communication they're capable of is crying (i.e. crashing, sounding alarms, etc.).

there are many historical examples of this, sometimes deadly, like the autopilot that kept silently correcting a slight roll on a commercial flight, until it couldn't correct anymore and welp, automatically disengaged without notice, launched the airliner in a barrel roll and almost killed everyone.

he argues machines should be more like work animals, the example he uses is horses, where you have really good two way non-verbal feedback between "operator" and "machine"

douglas adams provided the perfect counter-example, with the automatic doors that don't just open for you, but feel pleasure doing it and verbalize the pleasure

I'm also reminded of data from star trek and his quest to acquire feelings. except he already has feelings. android feelings, yeah, sure. why should he have human feelings. the other alien species don't have human feelings either. I could understand if his quest was to find or make more of his own, but no, it's to be more human

|

|

|

|

Soricidus posted:
idk, turing's original proposal has lasted the entire history of ai so far without any significant shifting of goalposts?

current consensus afaik is that it's a useless definition of AI

|

|

|

|

Dessert Rose posted:
like a human child.

but he never grows out of it. in the end, he never grows beyond his programming. he starts dreaming not due to self-improvement but because of a glitch; he even unlocks the cheevo where his dad's ghost compliments him on his new stage of evolution, but he (unintentionally) cheated. he literally installs feelings as an expansion module

there's I think a total of two episodes where he's allowed to grow, one where he leads a revolution of sorts while marooned on a planet, to break a stalemate between two factions; and one where he is given his first command and has to gain the respect of a bigoted first officer (I loved that one btw, captain data best captain). and then bam, emotional chip ex machina (in machinam?)

for being the second most important character on the show, he was written so lamely

hackbunny fucked around with this message at 23:06 on Oct 23, 2015

|

|

|

I don't hate my job. I don't hate having a job. I need a job. I've taken some sick days and I'm about dying of boredom

|

|

|

|

echinopsis posted:
dude how'd you get that av

mild trolling in PYF

|

|

|

|

I've tried the alternative and it sucked

|

|

|

|

Symbolic Butt posted:
pretty sure BSD has some kind of depression/sadbrains and is trying to rationalize it with the marxist approach

I didn't want to say it first, but yeah. I got the strongest urge to get out of programming (and into more satisfying but far less lucrative jobs like teaching) when I felt the worst. then I got meds, therapy, but especially a live-in girlfriend (and a dog) and my perspective on money and work itself changed completely. I mean technically I'm still working for some dude (don't know about the yacht, but he rides a harley and plays golf), and the idea I'm really doing it for her (and the dog) is irrational wishful thinking, but I'm happy

|

|

|

|

Lady Galaga posted:
it's good that you have something to distract yourself from the fact that you are going to be spending 1/4 of your week working for the next few decades i guess

more like a good reason to not work even more than that

(still at home sick, still dying of boredom)

|

|

|

|

man, tell me about it, before examinations at the uni I got gut cramps and nervous diarrhea. to study for Logic from absolute ignorance of the topic, I took a week off work which I spent in a 6 hours awake, 2 hours asleep cycle, and my waking hours in a 50 minutes study, 10 minutes play cycle. aced the test, immediately forgot everything

|

|

|

|

"make the buttons bigger" they told me, "they are hard to hit" so I made the icon bigger buttons still the same size nobody will notice

|

|

|

|

"can you make the text fisher-price big, too" sssure thing

|

|

|

|

"you nerds know nothing about UI design" -- a bigger nerd than I ever was or will be

|

|

|

|

DRAMATIZATION

hackbunny fucked around with this message at 17:06 on Nov 10, 2015 |

|

|

|

the ironic confrontational posting style doesn't seem to work well outside of SA

not really blaming SA because it was usenet that made me the shitposter I am

|

|

|

|

just got into an incredibly stupid discussion on facebook (!)

guy demonstrates floating point loss of precision with excel, subtracting 0.01 a hundred times from 1, result is not exactly zero. he's all insufferable nerd like "free ice cream to the first non-computer guy who can explain why in 10 words or less"

also smug about not using windows when I told him that the windows calculator can add and subtract 0.1, 0.01, etc. just fine (of loving course it uses rationals internally, except for irrationals which are stored as decimal fixed point with IIRC 64 decimal places), so I told him, try it with bc, in fact script it with bc and see if the result isn't 100% exact every single time, must be magic or some sci-fi-level symbolic calculator poo poo and not basically 9th grade arithmetic. but no it doesn't scale and can't calculate the fisher test for a large population in useful time so it must be some toy that's never used anywhere by anyone and not say a hugely popular software package old enough to drive, vote and drink

BCD, loving seriously? he's an old so it's somewhat excusable but does he really think the world never moved on from there?

|

|

|

|

the point is you can choose at least in C and C++. you can use rationals, bignums, bigfloats, as large as you like, in base 256 or base 10, there are libraries for everything. I think GMP alone supports all those. there are libraries for more exotic representations. there are libraries to calculate all sorts of irrational functions to an arbitrary precision. there's not this immense gulf the guy is painting between IEEE floats and full-frontal symbolic math engines with nothing in the middle (save for mother-loving BCD)
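To make the middle ground concrete, here is a minimal sketch of the facebook experiment done twice, once with doubles and once with exact fractions. It is not how bc, the Windows calculator or GMP are implemented; `Rational`, `subtract_with_doubles` and `subtract_with_rationals` are made-up names, and the toy rational uses fixed 64-bit integers with no overflow handling where a real program would reach for a bignum library.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical toy rational: numerator/denominator kept in lowest terms.
// Assumption: values stay small enough that 64-bit products don't overflow.
struct Rational {
    std::int64_t num;
    std::int64_t den;  // always > 0
};

// Euclid's algorithm on absolute values.
static std::int64_t gcd_i64(std::int64_t a, std::int64_t b) {
    if (a < 0) a = -a;
    if (b < 0) b = -b;
    while (b != 0) {
        std::int64_t t = a % b;
        a = b;
        b = t;
    }
    return a;
}

// Builds a fraction and reduces it, keeping the sign in the numerator.
Rational make_rational(std::int64_t num, std::int64_t den) {
    assert(den != 0);
    if (den < 0) { num = -num; den = -den; }
    const std::int64_t g = gcd_i64(num, den);  // >= 1 because den != 0
    return Rational{num / g, den / g};
}

// a - b, computed exactly: plain 9th grade fraction arithmetic.
Rational subtract(Rational a, Rational b) {
    return make_rational(a.num * b.den - b.num * a.den, a.den * b.den);
}

// Starts at 1 and subtracts 0.01 `times` times using binary floating point.
// 0.01 has no exact binary representation, so rounding error can pile up.
double subtract_with_doubles(int times) {
    double x = 1.0;
    for (int i = 0; i < times; ++i) x -= 0.01;
    return x;
}

// Same loop, but with 1/100 stored exactly as a fraction.
Rational subtract_with_rationals(int times) {
    Rational x = make_rational(1, 1);
    const Rational step = make_rational(1, 100);
    for (int i = 0; i < times; ++i) x = subtract(x, step);
    return x;
}
```

`subtract_with_rationals(100)` ends at exactly 0/1, while `subtract_with_doubles(100)` is only guaranteed to land very close to zero and on IEEE doubles will typically leave a tiny residue. The price of exactness is that numerators and denominators can grow, which is the "doesn't scale" complaint, and which is where arbitrary-precision libraries come in.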

|

|

|

|

tef posted:
did you get fishmeched on facebook

worse, I fishmeched someone on facebook and then shitposted when it got hopeless

I just hope I didn't alienate a potential future employer

well at least I was reminded of this remarkable little library

|

|

|

|

shitposting wasn't very fishmech of me and I apologize

|

|

|

|

LeftistMuslimObama posted:
imo, this is weak when poo poo like Visual Studio is just loving great out of the box nowadays. i'd only use vim if I was stuck targetting a platform visual studio didn't work with.

seems visual studio is slowly adding support for like, all platforms. you can already use it for iphone and android development and I've read something about a gdb plugin

|

|

|

|

Arcsech posted:
also, gently caress this bullshit:

if I understand correctly, list nodes point to list nodes instead of whole objects - it's a common pattern (see LIST_ENTRY in Windows. this macro in particular is called CONTAINING_RECORD in Windows but it properly uses offsetof instead of pointer arithmetic with NULL which is undefined behavior and will make compilers scream bloody murder).

it's so that objects embed the list nodes that "contain" them. compared to storing a pointer to the object in the node you save an allocation and an indirection, both things that are considered important in operating system code. another important advantage is that when you destroy the object you can simply unlink its nodes, which removes a source of dangling pointers.

drawback is that you have to know in advance which lists are going to contain your object, so you can define all the necessary node fields up front, but that isn't usually an issue in the kind of code you'll write (and you can always have an intermediate "object pointer" object that contains a list node and a pointer to the real object, but then you're on your own re. dangling pointers)

no sarehu, this isn't an intrusive list because the list itself doesn't know poo poo about the objects it contains. the list manipulation routines operate on list nodes and for all they know the list is just nodes with no "contents". if anything, it's an "extrusive" (???) list

by the standards of the Linux kernel this is a very straightforward macro btw
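A minimal sketch of the embedded-node pattern described above, assuming nothing about the macro Arcsech quoted (it isn't reproduced here). Every name (`ListNode`, `SKETCH_CONTAINING_RECORD`, `Job`, `pending_link`, the `list_*` helpers) is hypothetical; the only borrowed idea is recovering the enclosing object with `offsetof`.

```cpp
#include <cassert>
#include <cstddef>  // offsetof

// A bare list node: it only knows about other nodes, never about objects.
struct ListNode {
    ListNode* next;
    ListNode* prev;
};

// Given a pointer to a node embedded in a Type at `member`, step back by the
// member's offset to get the enclosing object. Uses offsetof rather than
// arithmetic on a null pointer.
#define SKETCH_CONTAINING_RECORD(node_ptr, Type, member) \
    (reinterpret_cast<Type*>(reinterpret_cast<char*>(node_ptr) - offsetof(Type, member)))

// The object embeds one node per list it can be on (here: just one).
struct Job {
    int id;
    ListNode pending_link;
};

// Circular doubly linked list with a sentinel head; an empty list points at itself.
inline void list_init(ListNode* head) {
    head->next = head;
    head->prev = head;
}

inline void list_push_back(ListNode* head, ListNode* node) {
    node->prev = head->prev;
    node->next = head;
    head->prev->next = node;
    head->prev = node;
}

// Unlinking needs only the node, so destroying an object can always take it
// off its lists without searching them.
inline void list_unlink(ListNode* node) {
    assert(node->next && node->prev);
    node->prev->next = node->next;
    node->next->prev = node->prev;
    node->next = node;
    node->prev = node;
}

// The only place that knows the nodes live inside Job objects.
int sum_job_ids(ListNode* head) {
    int total = 0;
    for (ListNode* n = head->next; n != head; n = n->next) {
        total += SKETCH_CONTAINING_RECORD(n, Job, pending_link)->id;
    }
    return total;
}
```

Note that `list_init`, `list_push_back` and `list_unlink` never mention `Job`: the list routines operate on nodes only, and the caller supplies the type and member name when it wants the object back.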

|

|

|

|

Jabor posted:
It's an intrusive list because the list "intrudes" into the object

but it doesn't really. the list doesn't contain objects, it contains nodes and nodes don't contain anything. it's like a do-it-yourself kit for an intrusive list but not an intrusive list by itself

|

|

|

|

Soricidus posted:
Assertion Failed: Abort=Quit, Retry=Debug, Ignore=Continue

it's from the debug build of the microsoft C runtime

Soricidus posted:
yes? that doesn't really excuse a crazy interface where the buttons have incorrect labels and the correct labels are given in the dialog title instead

it's old code, really old, based on an even older function (MessageBox) which offers very little customization, but also the lowest overhead possible. specifically, it requires no GUI code in the caller aside from the call itself, which is as painless as calling say, Sleep. it's also the "most official" user interaction API available, headless machines can (could?) be configured to log the message and dismiss the dialog automatically without even showing it. it's also the only officially supported GUI available to service processes.

it's a pretty sweet function actually, you can stick it anywhere for impromptu synchronous communication with the user, great for debugging and I miss it a lot on other platforms. I can live with fixed button labels

|

|

|

|

Soricidus posted:
what i don't understand is why does something like that get used when my .net code fails an assertion when running within the visual studio 2015 debugger?

you mean Debug.Assert shows this? maybe the BCL people just cargo-culted the code from the C runtime, they do that a lot for the low level stuff. let's look at the code... no, it uses a breakpoint or throws an exception. either the code on github is different from the code they ship, or the assertion is coming from a lower level, maybe a native library

|

|

|

|

Apocadall posted:
whats a decent starter gui library in c++?

Qt is alright

|

|

|

|

Luigi Thirty posted:
what (legitimate) use case is there for inline assembly in vc++? doesn't the compiler output better assembly than any human short of noted hyperautist steve gibson?

none whatsoever. the interactions between inline assembly and the rest of the compiler were never documented, so you were always taking risks.

you want an external function implemented in assembly for things like manipulation of the program counter or stack - e.g. some compilers used to let you declare functions as __interrupt, and they'd return with iret instead of ret; it was a monumentally stupid idea and real actual operating systems use a trampoline written in assembly that calls an honest regular all-american C function passing things like saved registers as arguments.

for direct access to SIMD or other specialized opcodes (FP state, cpuid, interrupt masking etc.) you use intrinsic functions, which have all the appropriate barriers, clobbers, etc. to let the compiler know how they affect the rest of the code. there is no other legitimate use of inline assembly, save for implementing intrinsics that are missing on your specific compiler version/platform

GCC-style inline assembly, on the other hand, is a DIY kit for writing intrinsic functions so it's still 100% legit. GCC doesn't understand the assembly code itself, you have to tell it with annotations what registers are read, what registers are written, if memory is touched, etc. which you couldn't do with inline assembly in Visual C++. Visual C++ had to parse the assembly (with undocumented rules) and determine by itself (with undocumented rules) the impact on the surrounding code. now guess why inline assembly is only supported on x86

source: I reimplemented visual c++ intrinsics with GCC inline assembly

Sagacity posted:
One thing I never understood is why people would use that horrible at&t syntax for it, btw

AT&T is unambiguous. no implicit instruction length, no confusion between immediates and addresses, it's much easier to write a compiler for (no clue why it swapped operand order though) and I guess its formality tickles the 'tism bone. it was also the standard on UNIX, where GCC was born

of course knowing why it's like it is doesn't mean I don't hate it
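As a small illustration of the "use intrinsic functions" advice, here is a sketch of a four-float add written with SSE intrinsics instead of inline assembly. `add4` and `SKETCH_HAVE_SSE` are made-up names; the intrinsics themselves (`_mm_loadu_ps`, `_mm_add_ps`, `_mm_storeu_ps` from `<xmmintrin.h>`) are the standard SSE ones, and a plain loop is used as a fallback when SSE isn't available.

```cpp
#include <cassert>

#if defined(__SSE__) || defined(_M_X64) || defined(_M_AMD64)
#include <xmmintrin.h>  // SSE intrinsics: __m128, _mm_loadu_ps, _mm_add_ps, _mm_storeu_ps
#define SKETCH_HAVE_SSE 1
#else
#define SKETCH_HAVE_SSE 0
#endif

// Adds four floats element by element: out[i] = a[i] + b[i] for i in 0..3.
void add4(const float* a, const float* b, float* out) {
    assert(a && b && out);
#if SKETCH_HAVE_SSE
    // The compiler knows exactly what these read and write, so it can
    // schedule, inline and optimize around them like any other expression.
    const __m128 va = _mm_loadu_ps(a);  // unaligned load of 4 floats
    const __m128 vb = _mm_loadu_ps(b);
    _mm_storeu_ps(out, _mm_add_ps(va, vb));
#else
    // Portable fallback for targets without SSE.
    for (int i = 0; i < 4; ++i) out[i] = a[i] + b[i];
#endif
}
```

No registers, clobbers or barriers are spelled out anywhere: that bookkeeping is the part the intrinsic carries for you.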

|

|

|

|

Brain Candy posted:
but if i don't sprinkle volatile everywhere it's not thread-safe

volatile has a different meaning for asm(), it means the compiler must treat the block of inline assembly as sacred: can't hoist out of loops, can't move it around, can't delete it if the outputs are unused. some instructions need to be marked volatile (for example if they have side effects that you can't express with the available constraints), some don't (you can safely delete some complex SIMD operation if the compiler determines that it's a no-op)

of course I was bitten in the rear end by this in that very header file, because volatile isn't granular enough for some cases and I had omitted it from intrinsics that really should have been treated more carefully. I actually broke the kernel because they were super-critical kernel-only instructions for setting up virtual memory (IIRC)

I was a huge knob back then, with torvaldsisms like that comment, draconian abe-like moderation of the IRC channel and starting a tradition of passive aggressive commit messages, but tbh I did do a huge amount of work to reverse-engineer all known x86 intrinsics, especially determining what functions had the equivalent of a "memory" clobber (i.e. "this touches memory so don't trust the values you think variables have") and what didn't (possibly erroneously). at the time I didn't know much about aliasing (still don't actually) so they're probably wrong anyway
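The smallest example of both ideas, `volatile` on an asm statement and a "memory" clobber, is probably an empty asm used as a compiler-only barrier. This is a sketch, assuming GCC or clang; `sketch_compiler_barrier` and `publish_then_read` are made-up names, and on other compilers the sketch falls back to `std::atomic_signal_fence`, which is a stand-in rather than the same mechanism.

```cpp
#include <atomic>
#include <cassert>

#if defined(__GNUC__) || defined(__clang__)
// An asm statement with no instructions at all.
//  - volatile: the compiler may not delete it or hoist it out of loops.
//  - "memory" clobber: the compiler must assume any memory may have been read
//    or written, so it cannot keep memory values cached in registers across
//    this point or move memory accesses over it.
// This constrains the compiler only; it emits no CPU fence instruction.
inline void sketch_compiler_barrier() {
    __asm__ __volatile__("" : : : "memory");
}
#else
// Assumed stand-in for compilers without GCC-style asm.
inline void sketch_compiler_barrier() {
    std::atomic_signal_fence(std::memory_order_seq_cst);
}
#endif

// Stores a value, then re-reads it after the barrier. Because of the
// "memory" clobber the compiler has to perform the store before the asm
// and reload *slot afterwards instead of reusing `value` from a register.
int publish_then_read(int* slot, int value) {
    assert(slot);
    *slot = value;
    sketch_compiler_barrier();
    return *slot;
}
```

An intrinsic that touches memory but is declared without the clobber gives the compiler permission to reuse stale values around it, which is the class of mistake described above.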

|

|

|

|

Jeffrey of YOSPOS posted:
vc++ really doesn't let you specify things like what registers are used in your inline assembly? How is that supposed to ever work? I'm the guy at microsoft who thought about it for like 3 seconds and decided "yeah, we'll parse the assembly and figure it out".

no, it's not quite like that. let me explain. this is __cpuid, as implemented for gcc/clang:

[C++ code block missing]

[C++ code block missing]

the beauty of GCC inline assembly is that the compiler doesn't even have to know what cpuid does, in fact it doesn't, it only operates on the inline code as a string with special format inserts. all the compiler needs to know is in the constraints: the inline asm takes "a" (eax) as an input, and overwrites "=a", "=b", "=c" and "=d" (eax, ebx, ecx and edx)

Microsoft inline assembly is a toy for DOS-era programmers and little else (among the little else: competitor Borland had it too)
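The code from this post did not survive, so what follows is not hackbunny's header. It is a minimal sketch, assuming GCC or clang on x86, of what a cpuid wrapper with the constraints described above could look like. `sketch_cpuid`, `sketch_cpu_vendor` and `SKETCH_HAVE_CPUID` are made-up names; the signature simply mirrors the MSVC `__cpuid(int[4], int)` intrinsic. Only eax is passed in, so leaves that also take a sub-leaf in ecx are out of scope here.

```cpp
#include <cassert>
#include <cstring>
#include <string>

#if (defined(__GNUC__) || defined(__clang__)) && (defined(__i386__) || defined(__x86_64__))
#define SKETCH_HAVE_CPUID 1
// info[0..3] receive eax, ebx, ecx, edx. The compiler never looks inside the
// "cpuid" string; everything it knows comes from the constraints:
//   input  "a"                   -> leaf goes in eax
//   output "=a" "=b" "=c" "=d"   -> eax, ebx, ecx, edx are overwritten
inline void sketch_cpuid(int info[4], int leaf) {
    assert(info);
    __asm__ __volatile__("cpuid"
                         : "=a"(info[0]), "=b"(info[1]), "=c"(info[2]), "=d"(info[3])
                         : "a"(leaf));
}
#else
#define SKETCH_HAVE_CPUID 0
#endif

// Returns the 12-character vendor string from leaf 0 (stored in ebx, edx,
// ecx, in that order), or an empty string where the sketch has no cpuid.
std::string sketch_cpu_vendor() {
#if SKETCH_HAVE_CPUID
    int info[4] = {0, 0, 0, 0};
    sketch_cpuid(info, 0);
    char vendor[12];
    std::memcpy(vendor + 0, &info[1], 4);  // ebx
    std::memcpy(vendor + 4, &info[3], 4);  // edx
    std::memcpy(vendor + 8, &info[2], 4);  // ecx
    return std::string(vendor, 12);
#else
    return std::string();
#endif
}
```

Swapping the instruction string for something else would not change how the compiler treats the statement, as long as the constraints still describe the registers truthfully.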

|

|

|

|

Soricidus posted:
emacs is a modern gui application

let's see a screenshot please and tia

|

|

|

|

|


|

NihilCredo posted:
give paket a try maybe? it's basically "nuget, but a bit better"

jesus not a single version number/git tag, just pull and hope

|

|

|